The Executive Summary

- The Problem: Most vector databases (Pinecone, Elasticsearch, OpenSearch) were engineered for Semantic Search (Write Once, Read Many). They are optimized for Search Bars, not Agent Loops. This fundamental mismatch is exactly why vector databases fail autonomous agents.

- The Shift: Autonomous Agents are Write Heavy systems. They constantly log thoughts, update state, and prune errors. This creates high frequency “Upsert Storms” that crash standard indexes.

- The Imperative: You must distinguish between Retrieval Databases (RAG) and State Databases (Agent Memory). Using the former for the latter is a guaranteed failure mode.

Introduction: The Search Bar Trap

Here is the most common architectural error I audit in 2026:

A team builds a sophisticated agent using LangChain or AutoGen. They hook it up to a standard vector database like Pinecone. It works perfectly in the demo.

Then they deploy it. The agent starts running 50 loops per minute. It tries to remember its last step by writing to the database.

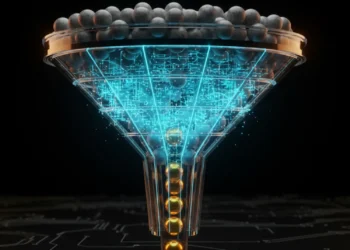

The system creates a bottleneck. Latency spikes from 20ms to 800ms. The bill explodes. The agent starts hallucinating because its Short Term Memory is stuck in an indexing queue. This is why we advocate for a tiered memory structure. To solve concurrency, you must implement a proper Vector Memory Architecture for Agentic AI.

Why? Because you treated an Agent like a Search Bar.

The Failure Mode: Write Once vs. Write Always

The Villain in this story is Read Optimization.

Legacy vector databases are built on the assumption that data is static. You ingest a corporate PDF, index it (which takes seconds), and then query it millions of times.

- Read to Write Ratio: 1,000,000 : 1

- Optimization: Perfect HNSW graphs, heavily cached.

Autonomous Agents invert this physics.

An agent thinking through a complex task writes to its memory every single step.

- Read to Write Ratio: 1 : 1

- The Crash: Standard HNSW indexes cannot handle real-time re-indexing at this velocity. They trigger what we call Index Rebuild Storms.

Architectural Definition:

Index Rebuild Storms occur when new vectors arrive faster than the database can re-balance its graph index. Inserts hold locks on the index while the graph catches up, so query latency degrades sharply during agent execution loops.

The Technical Analysis: 3 Mechanics of Failure

When you force a Read-Optimized DB to act as Agent Memory, three things break:

1. The Consistency Lag (The Ghost State)

Most cloud vector DBs are Eventually Consistent. When an agent writes “I have emailed the client,” that vector enters a queue.

If the agent queries its memory 200ms later with “Have I emailed the client?”, the database returns NO.

Result: The agent sends the email again. And again.

Requirement: Agents need Read-Your-Writes consistency (a write is visible to the same client's very next read), which most SaaS vector DBs do not guarantee at sub-second speeds.
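The Ghost State can be reproduced with a toy model. The sketch below is a simulation only, not any vendor's API: `EventuallyConsistentStore`, `ReadYourWritesSession`, and the `lag_ticks` parameter are hypothetical names invented for illustration, and one "tick" stands in for one agent loop iteration.

```python
class EventuallyConsistentStore:
    """Toy store: a write becomes readable only after `lag_ticks` ticks (assumed model)."""

    def __init__(self, lag_ticks):
        self.lag_ticks = lag_ticks
        self.clock = 0
        self.pending = []   # (visible_at_tick, key, value) waiting in the indexing queue
        self.visible = {}   # what queries can actually see

    def write(self, key, value):
        self.pending.append((self.clock + self.lag_ticks, key, value))

    def read(self, key):
        return self.visible.get(key)

    def tick(self):
        """Advance time by one loop iteration and publish writes that are due."""
        self.clock += 1
        still_pending = []
        for visible_at, key, value in self.pending:
            if visible_at <= self.clock:
                self.visible[key] = value
            else:
                still_pending.append((visible_at, key, value))
        self.pending = still_pending


class ReadYourWritesSession:
    """Session overlay: the writer always sees its own writes, even before indexing."""

    def __init__(self, store):
        self.store = store
        self.overlay = {}

    def write(self, key, value):
        self.overlay[key] = value
        self.store.write(key, value)

    def read(self, key):
        if key in self.overlay:
            return self.overlay[key]
        return self.store.read(key)


def run_agent(memory, store, steps):
    """The agent emails the client whenever memory says it has not done so yet."""
    emails_sent = 0
    for _ in range(steps):
        if memory.read("emailed_client") is None:
            emails_sent += 1
            memory.write("emailed_client", True)
        store.tick()
    return emails_sent


raw = EventuallyConsistentStore(lag_ticks=3)
naive_sends = run_agent(raw, raw, steps=5)

backing = EventuallyConsistentStore(lag_ticks=3)
safe_sends = run_agent(ReadYourWritesSession(backing), backing, steps=5)

print(naive_sends, safe_sends)  # 3 1
```

With a three-tick indexing lag, the naive agent sends the email three times before its first write becomes visible. The session overlay sends it once. A real deployment would get the same guarantee from the database itself rather than from a client-side overlay.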

2. The Mutable Payload Problem

Agents need to update metadata.

- Step 1: Store memory {"status": "planned"}.

- Step 2: Update memory to {"status": "executed"}.

Many vector DBs implement updates as Delete + Re-Insert. This doubles the indexing load. Doing this 10,000 times an hour creates massive Tombstone overhead (garbage data waiting to be collected), which slows down retrieval.
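To make the difference concrete, here is a minimal sketch that counts the work each update strategy generates. `ToyIndex` is a hypothetical stand-in, not a real engine: it only tallies graph insertions and tombstones so the two strategies can be compared.

```python
class ToyIndex:
    """Counts index work; a stand-in for a vector index, not a real one."""

    def __init__(self):
        self.live = {}          # point_id -> (vector, payload)
        self.tombstones = 0     # deleted entries awaiting garbage collection
        self.index_inserts = 0  # times a vector was (re)inserted into the graph

    def insert(self, point_id, vector, payload):
        self.live[point_id] = (vector, dict(payload))
        self.index_inserts += 1

    def update_by_reinsert(self, point_id, payload_patch):
        """Delete + Re-Insert: the old entry becomes a tombstone, the vector is re-indexed."""
        vector, payload = self.live.pop(point_id)
        self.tombstones += 1
        self.insert(point_id, vector, {**payload, **payload_patch})

    def update_in_place(self, point_id, payload_patch):
        """In-place payload update: metadata changes, the graph is untouched."""
        self.live[point_id][1].update(payload_patch)


UPDATES = 10_000  # the hourly update volume used in the text above

reinsert = ToyIndex()
reinsert.insert(1, [0.1, 0.2], {"status": "planned"})
for _ in range(UPDATES):
    reinsert.update_by_reinsert(1, {"status": "executed"})

in_place = ToyIndex()
in_place.insert(1, [0.1, 0.2], {"status": "planned"})
for _ in range(UPDATES):
    in_place.update_in_place(1, {"status": "executed"})

print(reinsert.index_inserts, reinsert.tombstones)  # 10001 10000
print(in_place.index_inserts, in_place.tombstones)  # 1 0
```

Both indexes end with the same payload, but the re-insert path touched the graph 10,001 times and left 10,000 tombstones behind, while the in-place path touched it once.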

3. The Tax on Thought (Cost)

SaaS providers charge by Write Units.

- Search Bar: Writes happen once a month (New PDF). Cost = $0.

- Agent: Writes happen every 3 seconds. Cost scales with every step the agent takes.

I have seen startups burn $2,000/month just on logging agent thoughts to a managed vector service.
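A back-of-the-envelope calculation shows how the write bill grows. This is a sketch under stated assumptions: a flat per-write price, a 30-day month, and the $10 to $50 per million writes range quoted in this article's comparison table (the article's figures, not a vendor price list). The function names are made up for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def writes_per_month(seconds_between_writes, days=30):
    """Writes issued by one agent in a month at a fixed cadence."""
    return SECONDS_PER_DAY * days // seconds_between_writes


def monthly_write_cost(writes, usd_per_million_writes):
    """Flat pricing assumption: every write is billed at the same unit price."""
    return writes / 1_000_000 * usd_per_million_writes


agent_writes = writes_per_month(seconds_between_writes=3)
low = monthly_write_cost(agent_writes, usd_per_million_writes=10)
high = monthly_write_cost(agent_writes, usd_per_million_writes=50)

print(f"{agent_writes:,} writes/month -> ${low:.2f} to ${high:.2f} per agent")
```

One agent writing every 3 seconds issues 864,000 writes a month, or roughly $8.64 to $43.20 under these assumptions. The same model puts a $2,000 monthly bill at 40 million writes when priced at $50 per million, a volume reached quickly once many agents run in parallel or each step produces several writes.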

The Economics: The High Cost of Latency

This table compares a Read Optimized Legacy architecture against a Write Optimized Agentic architecture for a single active agent.

| Metric | “Search Bar” DB (e.g., Pinecone Standard) | “Agentic” DB (e.g., Qdrant/Weaviate) |
| --- | --- | --- |
| Indexing Latency | Seconds (Eventually Consistent) | Milliseconds (Real-Time) |
| Write Cost | $10 – $50 per million writes | $0 (Self-Hosted Resource) |
| Update Mechanism | Full Re-Index (Slow) | In-Place Payload Update (Fast) |
| Loop Speed | 1 step per 3 seconds | 10 steps per second |
| Outcome | Agent stutters / Repeats tasks | Fluid, continuous autonomy |

The Architecture: What Actually Works?

To solve this, you must select a database engine that supports Real Time Indexing and Mutable Payloads.

The 2026 Standard:

- Qdrant: Written in Rust. Supports Binary Quantization (keeps indices small in RAM) and true real time updates. It handles high frequency writes without locking the entire graph.

- Weaviate: Excellent for Object Based memory where data structures change schema often.

- Redis (RediSearch): The fastest option for Working Memory (L1), though less capable for semantic search than Qdrant.

The Hybrid Strategy:

- Use Redis for the Agent’s Thought Loop (L1).

- Use Qdrant for the Agent’s Journal (L2).

- Never use a Serverless HTTP-only vector DB for the inner thought loop. The network round trip alone (roughly 50ms per call) eats half the 100ms budget of a loop running at 10 steps per second, and that destroys the cognitive flow.
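The L1/L2 split can be sketched in a few lines. This is an illustration of the pattern only: a bounded in-process `deque` stands in for Redis as L1 and a plain list stands in for the Qdrant journal as L2. `TieredMemory` and its methods are hypothetical names, and no real client library is called.

```python
from collections import deque


class TieredMemory:
    """Hot loop reads hit L1 only; every step is also appended to the L2 journal."""

    def __init__(self, l1_capacity, journal):
        self.l1 = deque(maxlen=l1_capacity)  # working memory: last N steps, no network hop
        self.journal = journal               # long-term store: any object with .append()

    def remember(self, step):
        self.l1.append(step)       # immediately readable by the next loop iteration
        self.journal.append(step)  # in production this write could happen off the hot path

    def recent(self, n):
        """Return the last n steps from working memory without touching L2."""
        return list(self.l1)[-n:]


journal = []
memory = TieredMemory(l1_capacity=3, journal=journal)
for i in range(5):
    memory.remember({"step": i, "status": "executed"})

print(memory.recent(2))  # the two most recent steps, served from L1
print(len(journal))      # 5: the journal keeps the full history
```

The design choice is that the question "what did I just do?" never leaves L1, so the inner loop's latency does not depend on how quickly the journal indexes new vectors.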

Conclusion: Select for Velocity

If you are building a search engine for your company wiki, use a Read Optimized database.

But if you are building a Sovereign AI Agent, you are building a high velocity transaction engine.

Most vector databases fail autonomous agents because they were built for Librarians, not Pilots.

Switch to a Write-Optimized architecture, or your agent will forever be stuck in the past.

Frequently Asked Questions (FAQ)

Q: Can’t I just batch my agent’s writes to save costs?

A: No. If you batch writes, the agent runs blind until the batch commits. An agent needs to know immediately what it just did to decide what to do next.

Q: Is PostgreSQL (pgvector) good enough for agents?

A: For low-speed agents, yes. But pgvector uses IVFFlat or HNSW indexes, which also suffer from write-heavy locking at scale. For high-frequency agents, a dedicated Rust-based engine (Qdrant) is superior.

Q: Why do you keep mentioning Sovereignty with databases?

A: Because if your agent’s memory lives on a SaaS cloud that throttles your write speeds during peak hours, your Employee stops working. You cannot rely on rented infrastructure for core cognition.

From the Architect’s Desk RankSquire

I was brought in to fix a Customer Support Agent for a Fintech client.

The agent was double-refunding customers.

The Cause: It approved a refund, wrote to memory, then checked memory 100ms later. The vector hadn’t been indexed yet. It saw “No Refund,” so it issued another one.

The Fix: We moved from a generic Serverless Vector DB to a self-hosted Qdrant instance with Read-Your-Writes consistency.

Result: Zero duplicate refunds. Latency dropped by 600ms.

Join the Conversation

Is your agent repeating itself? Check your database’s Write Latency metrics. You might find your answer there.
