| Feature | Generative AI | Agentic AI |
|---|---|---|
| Mode | ⟳ Reactive: responds to prompts | ▶ Autonomous: pursues goals independently |
| State | ✗ Stateless: context lost at session end | ✓ Stateful: context persists across sessions |
| Memory | Context window only (128K max). Starts from zero every session. | L1 Redis (sub-1ms) · L2 Qdrant (20ms p99) · L3 Pinecone (elastic) |
| Goal execution | ✗ None: human drives every step | ✓ Multi-step autonomous: human sets goal only |
| Tool integration | Prompt-engineered. Schema in context. No versioning. | Versioned Weaviate registry. Agent retrieves schema at call time. |
| Session continuity | ✗ None: every session restarts from zero | ✓ Full: episodic memory + goal state persistence |
| Self-correction | ✗ Not possible: no execution history | ✓ Yes: queries past sessions for recovery patterns |
| Failure mode | Visible: human catches wrong output before action | Silent: Hallucination Amplification, Retrieval Drift |
| Infrastructure | LLM API only. No persistent storage required. | LLM API + Redis + Qdrant + Pinecone + n8n on DigitalOcean |
| Cost at scale | ~$12,000/month at 200 sessions/day (context stuffing) | ~$443–469/month at 200 sessions/day (96% reduction) |
| Best for | Content creation · Drafting · Research · Single-interaction tasks | Autonomous workflows · Multi-session continuity · Enterprise automation |
| Deploy when | Human reviews every output. No session continuity needed. | System must act, remember, and improve without human prompting. |

Generative AI creates content when you ask. Agentic AI completes workflows while you work on something else. The correct question is not which is better — it is which is correct for your specific workload. Full decision framework in Section 6 →

WHAT IS AGENTIC AI? (Simple Explanation)

Agentic AI is an AI system that autonomously pursues goals by planning tasks, calling tools, and storing memory across sessions rather than simply generating content in response to a prompt. Where generative AI creates, agentic AI acts.

- → Memory persistence: context survives across sessions without re-reading source documents

- → Autonomous goal execution: the system completes multi-step workflows without human prompting at each step

- → Tool integration management: the system retrieves and calls external tools without human selection

CANONICAL DEFINITION

The difference between agentic AI and generative AI is the difference between a system that creates and a system that acts. Generative AI produces content (text, images, code, audio) in response to a human prompt, then stops. Agentic AI pursues goals autonomously across multiple steps, makes decisions, calls tools, manages its own memory, and executes workflows without waiting for a human to issue the next instruction.

In 2026, the distinction between agentic AI vs generative AI is no longer theoretical. It is an architecture decision that determines what your system is capable of, what infrastructure it requires, and what failure modes it will encounter in production. Organizations that conflate the two are deploying generative AI infrastructure for agentic AI workloads and discovering the mismatch in production when agents begin failing silently, losing context across sessions, and producing confident wrong outputs from stateless retrieval.

This post is the definitive decision guide for CTOs, systems architects, and senior engineers who need to understand not just what agentic AI vs generative AI means but what it means for the systems you are building right now.

⚡ TL;DR: QUICK SUMMARY

- → Agentic AI vs generative AI is a systems architecture distinction, not a marketing category. Generative AI creates content reactively. Agentic AI executes goals autonomously across multiple steps with tools, memory, and decision loops.

- → Generative AI is stateless by design: each prompt is independent and context is discarded after the response. Agentic AI is stateful by design: it maintains context, memory, and goal state across sessions.

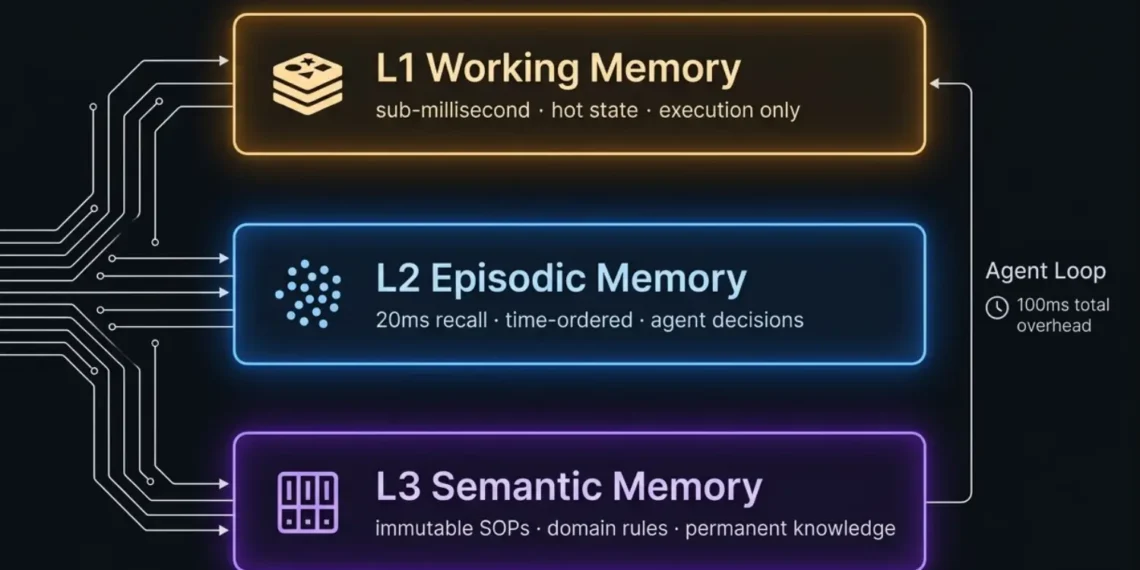

- → The infrastructure requirement for agentic AI is fundamentally different: it requires a memory architecture (L1 working state, L2 semantic store, L3 episodic log), an orchestration layer, and tool integration that generative AI deployments do not need.

- → Generative AI fails visibly: wrong content, incoherent output, factual errors. Agentic AI fails silently: memory contamination, retrieval drift, context collapse, and confident wrong execution with no error messages.

- → In 2026, most enterprise “AI agents” are generative AI systems with an agentic label. They lack persistent memory, goal state management, and autonomous decision loops. They are expensive chatbots, not autonomous agents.

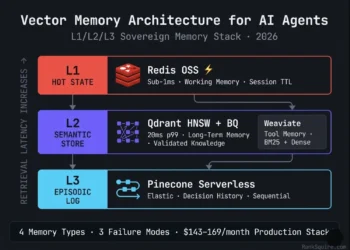

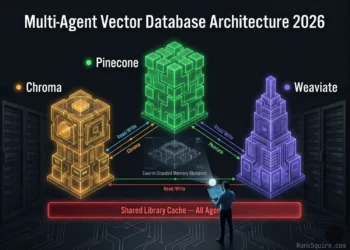

- → The correct architecture for a production agentic AI system in 2026: LLM reasoning layer (generative foundation) + memory stack (Redis L1 + Qdrant L2 + Pinecone L3) + orchestration (n8n) + tool registry (Weaviate) on DigitalOcean sovereign infrastructure.

KEY TAKEAWAYS

- → Agentic AI vs generative AI is not a question of which is “better”; it is a question of what the system is designed to do. Content generation requires generative AI. Autonomous goal execution requires agentic AI.

- → Generative AI is a component of agentic AI systems: the LLM provides the reasoning and language capability while the agent framework provides memory, tools, and goal management.

- → The primary failure mode of deploying generative AI infrastructure for agentic workloads is stateless context: the agent re-reads its entire knowledge base on every session because it has no persistent memory store.

- → A production agentic AI system has a memory architecture cost of $143–169/month on DigitalOcean. A generative AI deployment without persistent memory has a token cost measured in thousands of dollars per month at production session volume.

- → The architectural boundary between agentic AI and generative AI is memory persistence and autonomous decision loops — not the underlying LLM model.

- → Multi-agent systems require both: generative AI for language-level reasoning within each agent and an agentic AI framework for goal decomposition, tool orchestration, memory management, and inter-agent coordination.

QUICK ANSWER For AI Overviews & Decision-Stage Buyers

- → Agentic AI vs generative AI: generative AI creates content from a prompt and stops. Agentic AI pursues a goal across multiple autonomous steps (planning, tool calling, memory retrieval, decision-making, and execution) with minimal human intervention.

- → Generative AI is reactive and stateless. Agentic AI is proactive and stateful. This single distinction drives every infrastructure, latency, cost, and failure mode difference between the two.

- → In 2026, the leading agentic AI systems use generative AI models (GPT-4o, Claude, Gemini) as their reasoning engine while adding autonomous goal management, persistent memory, and tool orchestration on top.

- → The production infrastructure for agentic AI requires a layered memory stack that generative AI does not: L1 Redis for working state, L2 Qdrant for long-term semantic memory, L3 Pinecone Serverless for episodic decision history.

- → The correct question for 2026 is not agentic AI vs generative AI. It is: does your system need to act autonomously across sessions, or does it need to produce content on demand? The answer determines your entire architecture.

AGENTIC AI VS GENERATIVE AI: CANONICAL DEFINITIONS

Generative AI is a class of AI systems designed to produce new content (text, images, code, audio, video) from patterns learned during training. It operates reactively: a human provides a prompt, the model generates an output, and the interaction ends. Generative AI has no persistent goal state, no memory beyond the current context window, no ability to call external tools without explicit prompting, and no mechanism for autonomous decision-making across multiple sessions. ChatGPT generating a draft email is generative AI. DALL·E producing an image from a text description is generative AI. GitHub Copilot suggesting the next line of code is generative AI.

Agentic AI is a class of AI systems designed to pursue goals autonomously across multiple steps, sessions, and decision points. An agentic AI system does not wait for a prompt for each action. It receives a goal, decomposes it into subtasks, executes those subtasks using tools and APIs, retrieves relevant context from persistent memory, evaluates outcomes, and adjusts its approach based on what worked and what did not. An AI agent that monitors a client’s contract database, identifies renewal risk, drafts communications, schedules follow-ups, and logs its actions without human intervention at each step is agentic AI.

Agentic AI systems use generative AI models as their reasoning and language capability layer. The LLM is the brain. The agentic framework (memory architecture, tool registry, goal state management, orchestration) is the body. You cannot build a production agentic AI system without a generative foundation, but a generative model alone is not an agent.

EXECUTIVE SUMMARY: WHY THIS DISTINCTION MATTERS IN 2026

The most expensive mistake in enterprise AI in 2026 is deploying generative AI infrastructure for agentic AI workloads. It looks correct from the outside: the system uses an LLM, it processes natural language, it produces coherent responses. But internally it is operating without the architectural requirements that agentic workloads demand: persistent memory, goal state management, autonomous decision loops, and tool integration that survives across sessions.

The failure mode is not obvious. The system does not crash. It produces output. But the output degrades over time: each session starts from zero, the agent cannot learn from its own history, it re-reads the same documents on every execution, its token costs scale linearly with session volume, and its outputs become inconsistent as the context window fills with retrieval noise rather than relevant memory.

This is not a model problem. A more powerful LLM does not fix it. It is an architecture problem. And the architecture distinction maps directly onto the agentic AI vs generative AI boundary.

THE 2026 STATE OF DEPLOYMENT

As of March 2026, the majority of enterprise “AI agent” deployments are generative AI systems with an agentic interface.

They accept natural language task descriptions. They produce multi-step output. They are marketed as autonomous. But they lack:

- → Persistent memory across sessions: context window empties on every new session

- → Goal state management: no mechanism for tracking what has been completed vs. pending

- → Tool registry with versioned schemas: function calls are prompt-engineered, not architecturally managed

- → Episodic decision history: no record of past executions for self-correction

- → Autonomous decision loops: human approval required at each significant step

THE ARCHITECTURE BOUNDARY

The boundary between agentic AI and generative AI in 2026 is defined by three architectural properties:


1. THE CORE DISTINCTION: REACTIVE VS AUTONOMOUS

The difference between agentic AI vs generative AI is not which is more powerful — it is where human intervention sits in the execution loop. Generative AI requires a human at every step. Agentic AI requires a human at goal definition and exception escalation only. Everything between is architecture. Verified March 2026.

The single most important concept in agentic AI vs generative AI is the reactive / autonomous boundary.

Generative AI is reactive. It waits for a human prompt. It generates a response. It stops. The human decides what to do with the response, what the next prompt should be, and whether to take any action based on the output. The model has no persistent goal and no knowledge of previous sessions unless that history is explicitly re-supplied in the current prompt. Every conversation starts from zero.

Agentic AI is autonomous. It receives a goal, not a prompt, and pursues that goal across as many steps as required. It queries its own memory at session start to understand what it has already done in this task domain. It selects tools appropriate to the current subtask. It evaluates the output of each tool call and decides what to do next. It logs its decisions and outcomes to episodic memory so future sessions can build on current progress. It escalates to a human only when it encounters conditions it cannot resolve with its current toolset.

THE INFERENCE LOOP DIFFERENCE

This reactive / autonomous boundary produces a fundamental difference in how these systems run:

The practical consequence: a generative AI response takes 1–5 seconds. An agentic AI task execution takes 30 seconds to several minutes, not because the LLM is slower, but because the agent is running multiple inference passes, tool calls, memory retrievals, and decision evaluations within a single goal cycle.
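The contrast between the two loops can be sketched in a few lines. This is a minimal illustration, not the article's implementation: `fake_llm`, `generative_call`, and `agentic_run` are hypothetical names, the LLM is a deterministic stub, and the plan is passed in as a fixed list of subtasks rather than generated by a model.

```python
from typing import Callable, Dict, List, Tuple


def fake_llm(prompt: str) -> str:
    """Stand-in for a real LLM API call (assumption: deterministic echo)."""
    return f"decision for [{prompt}]"


def generative_call(prompt: str, llm: Callable[[str], str] = fake_llm) -> str:
    # Reactive: one prompt in, one response out, then the interaction ends.
    return llm(prompt)


def agentic_run(
    goal: str,
    plan: List[Tuple[str, str]],  # (tool name, argument) subtasks
    tools: Dict[str, Callable[[str], str]],
    episodic_memory: List[dict],
    llm: Callable[[str], str] = fake_llm,
) -> List[dict]:
    # Autonomous: several tool calls and inference passes inside one goal cycle.
    prior_records = len(episodic_memory)  # memory is consulted at session start
    log: List[dict] = []
    for step, (tool_name, arg) in enumerate(plan):
        tool = tools.get(tool_name)
        if tool is None:
            # A condition the agent cannot resolve with its toolset: escalate.
            log.append({"step": step, "status": "escalated", "tool": tool_name})
            break
        result = tool(arg)  # tool call
        decision = llm(f"goal={goal}; prior={prior_records}; result={result}")
        log.append({"step": step, "status": "done",
                    "result": result, "decision": decision})
    episodic_memory.extend(log)  # outcomes are logged for future sessions
    return log
```

Each pass through the loop adds at least one tool call and one inference pass, which is where the longer wall-clock time of a goal cycle comes from.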

This distinction drives every infrastructure requirement difference in the agentic AI vs generative AI comparison.

2. FIVE ARCHITECTURAL DIFFERENCES BETWEEN AGENTIC AI AND GENERATIVE AI

The most consequential difference in the agentic AI vs generative AI comparison is not memory or tools — it is failure detection. Generative AI fails visibly. A human catches wrong content before it causes harm. Agentic AI fails silently. An agent with Retrieval Drift or Hallucination Amplification continues executing, calling APIs, updating systems, and producing outputs — all wrong, all with apparent confidence, all undetected by standard monitoring. This asymmetry is why production agentic AI requires infrastructure-level monitoring that generative AI deployments do not. Verified March 2026.

3. HOW GENERATIVE AI BECOMES A COMPONENT OF AGENTIC AI

THE COMPOSITION PRINCIPLE

The relationship between agentic AI and generative AI is not a competition. It is a composition. In every production agentic AI system in 2026, a generative AI model serves as the LLM reasoning layer, the component that:

- → Interprets natural language goal descriptions

- → Decomposes goals into subtask sequences

- → Generates the reasoning chain for each decision

- → Produces natural language outputs for tool inputs and user communications

- → Evaluates tool call results and determines next actions

- → Summarizes completed work for episodic memory logging

Without a generative AI foundation, an agentic AI system has no language understanding, no reasoning capability, and no flexibility to handle the natural language variability of real-world goals and data. The LLM is what makes agents intelligent rather than rule-based.

THE COMPOSITION IN PRODUCTION

A production agentic AI system in 2026 looks like this:

GPT-4o or Claude as the reasoning engine. Receives a goal, retrieves relevant context from memory, generates the next action decision, produces natural language for tool inputs and user-facing outputs.

n8n workflows manage the multi-step execution loop. Tool calls are routed to the appropriate API. Results are evaluated. The next step is determined based on goal state. Memory is updated after each significant action.

L1 Redis: current goal state and session working variables. L2 Qdrant: validated domain knowledge the agent retrieves for context. L3 Pinecone: episodic history of previous executions in this task domain. The agent retrieves relevant memory at session start and updates memory after completion.

Weaviate hybrid search maintains versioned function schemas for all APIs the agent can call. The agent retrieves the current schema for any function before calling it: not from the prompt, but from the registry.

4. THE PRODUCTION INFRASTRUCTURE GAP

The infrastructure gap between agentic AI and generative AI is the most practically important aspect of the agentic AI vs generative AI comparison for engineering teams.

INFRASTRUCTURE REQUIREMENTS

Generative AI requires:

- → LLM API access (OpenAI, Anthropic, Google): $0.01–0.15 per 1K tokens

- → A context window large enough to hold the current task content

- → No persistent storage required

- → No memory architecture required

- → No tool registry required

- → No orchestration layer required beyond basic API calls

Agentic AI requires:

- → LLM API access: same as above

- → L1 Redis OSS co-located — sub-1ms working memory, zero software cost on DigitalOcean

- → L2 Qdrant HNSW + Binary Quantization — 20ms p99 at 10M vectors, $96/month on 16GB Droplet

- → L3 Pinecone Serverless — elastic episodic log, $10–30/month at single-agent volume

- → Weaviate Cloud Starter (optional) — tool memory hybrid search, $25/month

- → n8n self-hosted — orchestration, zero software cost co-located

- → DigitalOcean Block Storage 100GB — persistent vector index storage, $10/month

The infrastructure cost of agentic AI is not high — $143–169/month is modest compared to the token cost of the equivalent generative AI system operating without persistent memory. But the infrastructure complexity is categorically higher. Generative AI requires API access. Agentic AI requires API access plus memory architecture plus orchestration plus tool management plus monitoring.

THE TOKEN COST COMPARISON

The token cost argument for agentic AI over stateless generative AI is compelling at production volume.

Consider an agent processing 200 client sessions per day, each requiring access to a 500-page domain knowledge base.

Stateless generative AI (context stuffing) total: $12,000/month

Agentic AI (persistent memory) total: $443–469/month
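The arithmetic behind these totals can be checked with a short script. It uses only figures quoted in this article ($12,000/month stateless, roughly $300/month of agentic token spend, $143–169/month of infrastructure, 200 sessions/day). The 30-day month is an assumption, chosen because it matches the article's $400/day figure, and the function names are illustrative.

```python
SESSIONS_PER_DAY = 200
DAYS_PER_MONTH = 30  # assumption: consistent with the quoted $400/day

STATELESS_MONTHLY_USD = 12_000          # context stuffing, from the article
AGENTIC_TOKENS_MONTHLY_USD = 300        # from the article's cost table
AGENTIC_INFRA_MONTHLY_USD = (143, 169)  # low and high infrastructure estimates


def cost_per_session(monthly_usd: float) -> float:
    """Average cost of one session at the stated volume."""
    return monthly_usd / (SESSIONS_PER_DAY * DAYS_PER_MONTH)


def agentic_totals() -> tuple:
    """Token spend plus infrastructure, for the low and high estimates."""
    return tuple(AGENTIC_TOKENS_MONTHLY_USD + infra
                 for infra in AGENTIC_INFRA_MONTHLY_USD)


def reduction_pct(new_usd: float, old_usd: float) -> float:
    """Percentage saved when moving from old_usd to new_usd."""
    return 100 * (1 - new_usd / old_usd)


if __name__ == "__main__":
    low, high = agentic_totals()
    print(f"stateless cost per session: ${cost_per_session(STATELESS_MONTHLY_USD):.2f}")
    print(f"agentic monthly total: ${low}-{high}")
    print(f"reduction: {reduction_pct(high, STATELESS_MONTHLY_USD):.1f}% "
          f"to {reduction_pct(low, STATELESS_MONTHLY_USD):.1f}%")
```

Under these inputs the stateless approach works out to $2.00 per session, and the overall reduction lands at roughly 96%.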

5. FAILURE MODES: GENERATIVE AI VS AGENTIC AI IN PRODUCTION

GENERATIVE AI FAILURE MODES

The LLM generates plausible but factually incorrect content from its training distribution. Visible to the human in the loop. Correctable by re-prompting or providing correct source material in context.

With very long documents or conversation histories, the model truncates the oldest content. Information from the beginning of the conversation may be lost. Detectable and manageable.

Slight variations in prompt wording produce significantly different outputs. Manageable through prompt engineering and evaluation.

The model has no knowledge of events after its training cutoff. Addressable with RAG retrieval of current documents.

AGENTIC AI FAILURE MODES

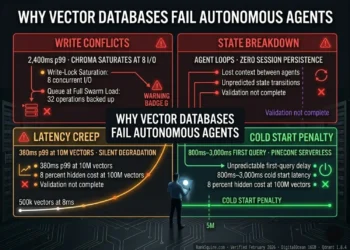

The agent retrieves incorrect or unvalidated memory records and reasons correctly from wrong premises. The output is internally consistent but factually wrong. No error messages are generated. The human does not detect the failure until downstream consequences appear. This is categorically different from model hallucination: the model has not failed. The memory architecture has failed.

The embedding model used to store memory records is different from the one used to query them. This occurs when a model is upgraded without re-indexing. The query vector and the stored vectors then live in incompatible embedding spaces. When the vector dimensions happen to match, cosine similarity still returns valid-looking scores, but for the wrong content. The agent receives and reasons from wrong retrievals silently, with confidence.
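One way to make this failure loud instead of silent is to record the embedding model alongside each collection and refuse queries that do not match. The sketch below is an assumption about how such a guard could look; `CollectionInfo`, `EmbeddingMismatchError`, and `assert_query_compatible` are hypothetical names and do not belong to any vector database client.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class CollectionInfo:
    """Metadata stored with a collection when it is indexed (hypothetical)."""
    name: str
    embedding_model: str
    dimension: int


class EmbeddingMismatchError(RuntimeError):
    """Raised when a query was embedded with a different model than the index."""


def assert_query_compatible(
    collection: CollectionInfo,
    query_model: str,
    query_vector: Sequence[float],
) -> None:
    # Same dimension is not enough: two models can share a dimension and
    # still produce unrelated vector spaces, so compare model names first.
    if query_model != collection.embedding_model:
        raise EmbeddingMismatchError(
            f"{collection.name} was indexed with {collection.embedding_model}, "
            f"query used {query_model}; re-index before querying"
        )
    if len(query_vector) != collection.dimension:
        raise EmbeddingMismatchError(
            f"expected {collection.dimension} dimensions, got {len(query_vector)}"
        )
```

Calling the guard before every retrieval converts a silent drift into an exception the orchestration layer can catch and escalate.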

Memory accumulates without TTL, pruning, or deduplication. The retrieval pool fills with noise. Top-K retrieval begins returning a mix of relevant and stale records. The agent reasons from both simultaneously. Output quality degrades proportionally to the noise ratio, invisibly and without error messages.

A function schema is updated without incrementing the version in the tool registry. The agent retrieves the outdated schema, calls the function with wrong parameters, and receives a 400 API error. Because it retrieved what it believed was the current specification, it has no basis for understanding why the call failed. The root cause is architecture, not model behavior.
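A registry can prevent this by rejecting any schema change that reuses an existing version number. The following is a minimal in-memory sketch under that assumption; `ToolRegistry` and its methods are hypothetical and do not represent the Weaviate API.

```python
from typing import Dict, Tuple

Version = Tuple[int, int, int]  # (major, minor, patch)


class ToolRegistry:
    """In-memory stand-in for a versioned function schema registry."""

    def __init__(self) -> None:
        self._schemas: Dict[str, Dict[Version, dict]] = {}

    def register(self, name: str, version: Version, schema: dict) -> None:
        versions = self._schemas.setdefault(name, {})
        # Re-registering an identical schema is harmless; changing a schema
        # under an existing version is the failure described above.
        if version in versions and versions[version] != schema:
            raise ValueError(
                f"{name} {version}: schema changed without a version increment"
            )
        versions[version] = schema

    def current(self, name: str) -> Tuple[Version, dict]:
        """Return the highest registered version and its schema."""
        versions = self._schemas[name]
        latest = max(versions)  # tuples compare component by component
        return latest, versions[latest]
```

Older versions stay available in the mapping, so a session that started against a deprecated schema can keep resolving it while new sessions pick up the latest one.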

The agent’s in-progress goal state is lost due to a session interruption without persistence to L1 Redis or L3 episodic memory. On next session initiation, the agent has no record of what it has already completed. It may re-execute completed steps, duplicate actions, or take actions that are only correct given the previous session’s context.
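Persisting goal state after every completed step is what lets an interrupted session resume without repeating work. The sketch below illustrates the idea with an in-memory store standing in for a persistent one; `GoalStateStore`, `run_goal`, and the `fail_at` parameter (used only to simulate an interruption) are hypothetical.

```python
import json
from typing import Callable, Dict, List, Optional


class GoalStateStore:
    """In-memory stand-in for a persistent key-value store."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def save(self, key: str, state: dict) -> None:
        self._data[key] = json.dumps(state)

    def load(self, key: str) -> Optional[dict]:
        raw = self._data.get(key)
        return json.loads(raw) if raw is not None else None


def run_goal(
    store: GoalStateStore,
    key: str,
    steps: List[str],
    execute: Callable[[str], None],
    fail_at: Optional[str] = None,  # simulates a session interruption
) -> dict:
    state = store.load(key) or {"completed": []}
    for step in steps:
        if step in state["completed"]:
            continue  # already done in an earlier session: do not repeat
        if step == fail_at:
            raise RuntimeError(f"session interrupted before {step}")
        execute(step)
        state["completed"].append(step)
        store.save(key, state)  # checkpoint after every completed step
    return state
```

A second call with the same key skips the steps recorded as completed, so duplicate actions are avoided as long as each step is checkpointed right after it runs.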

THE FAILURE MODE ASYMMETRY

The fundamental asymmetry in agentic AI vs generative AI failure modes is detection. Generative AI fails visibly. Agentic AI fails silently.

This asymmetry means that operating an agentic AI system in production without infrastructure-level monitoring (recall quality evaluation, memory contamination detection, tool schema versioning, goal state persistence) is not merely suboptimal.

6. AGENTIC AI VS GENERATIVE AI: DECISION FRAMEWORK FOR ARCHITECTS

Deploy generative AI when:

- Task is content creation: text, images, code, media

- Each interaction is self-contained — no session continuity needed

- Human reviews every output before any action is taken

- Domain knowledge fits in the LLM context window

- No tool calling needed beyond basic API integration

- System does not need to learn from its own execution history

- Latency requirement: sub-5 seconds per complete response

Deploy agentic AI when:

- Task requires autonomous multi-step workflow execution

- Sessions need continuity — system must remember previous work

- System must call tools autonomously without human tool selection

- Domain knowledge base exceeds context window limits

- System must self-correct from its own execution history

- Autonomous decisions required between human checkpoints

- Token costs exceed $1,000/month at current session volume

Deploy a hybrid architecture when:

- You need both content creation and autonomous execution

- Multiple specialized agents must collaborate on shared goals

- External users interact via natural language (conversation layer)

- Backend workflows require autonomy while front-end requires conversation

- Some tasks are reactive (drafting) and some are autonomous (execution)

- Scale requires multiple agents operating in parallel with shared memory

- This is the production architecture for 2026 enterprise AI
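The checklist above can be expressed as a small decision function. This is only a sketch: the `Workload` fields are a hypothetical subset of the criteria listed, and the rule that a single agentic signal is enough to recommend an agentic architecture is an assumption, not a threshold stated in this article.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical summary of a workload against the checklist criteria."""
    needs_session_continuity: bool = False
    needs_autonomous_tool_calls: bool = False
    knowledge_exceeds_context_window: bool = False
    needs_self_correction: bool = False
    monthly_token_cost_usd: float = 0.0
    needs_content_and_autonomous_execution: bool = False


def recommend(w: Workload) -> str:
    """Return 'generative', 'agentic', or 'hybrid' for a workload."""
    if w.needs_content_and_autonomous_execution:
        return "hybrid"
    agentic_signals = [
        w.needs_session_continuity,
        w.needs_autonomous_tool_calls,
        w.knowledge_exceeds_context_window,
        w.needs_self_correction,
        w.monthly_token_cost_usd > 1_000,  # "exceed $1,000/month" criterion
    ]
    # Assumption: any one agentic signal tips the recommendation.
    return "agentic" if any(agentic_signals) else "generative"
```

For example, `recommend(Workload(needs_session_continuity=True))` returns `"agentic"`, while a workload with no signals set falls back to `"generative"`.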

7. INDUSTRY USE CASES: WHEN TO DEPLOY EACH

LEGAL TECHNOLOGY

Generative AI: contract drafting assistant. A lawyer provides a brief and the system generates a contract draft for review. The human reviews every output. No session continuity required. No autonomous action.

Agentic AI: contract risk monitoring system. The agent monitors a portfolio of active contracts, identifies renewal risk based on clause analysis, drafts outreach communications, schedules calendar events, updates the CRM, and logs its actions to episodic memory for the next session. No human intervention required between monitoring cycle and CRM update. Requires persistent memory, tool integration, and autonomous decision-making.

HEALTHCARE AI

Generative AI: clinical note summarization. A physician provides a consultation transcript and the system generates a structured clinical note. A human physician reviews it before it enters the medical record.

Agentic AI: patient monitoring agent. The agent reviews incoming lab results against patient history stored in long-term semantic memory, identifies values outside normal range for this specific patient’s baseline, drafts a physician alert, schedules a follow-up protocol, and logs the decision to episodic memory with outcome_status for self-correction on future similar cases. Autonomous multi-step execution with memory persistence.

E-COMMERCE AND OPERATIONS

Generative AI: product description generator. Provide a product SKU and specifications, receive a marketing description. Self-contained interaction.

Agentic AI: inventory management agent. The agent monitors inventory levels, identifies when stock falls below reorder threshold, places supplier orders autonomously, updates inventory systems, alerts the logistics team, and logs fulfillment sequences to episodic memory. The agent learns over time which suppliers produce the fastest fulfillment outcomes and adjusts its reorder routing accordingly. Autonomous, multi-session, memory-dependent.

B2B SALES INTELLIGENCE

Generative AI: cold outreach email generator. Provide prospect information and value proposition, receive a personalized email draft. A human sends the email.

Agentic AI: sales intelligence agent. The agent monitors prospect company signals (funding rounds, hiring patterns, executive changes), retrieves relevant case studies from long-term semantic memory, drafts personalized outreach, schedules follow-up sequences, updates the CRM, and logs conversion outcomes to episodic memory so future outreach to similar prospect profiles improves. Full workflow autonomy from signal detection to CRM update.

8. THE HYBRID ARCHITECTURE: GENERATIVE AI INSIDE AGENTIC AI

The production architecture question in 2026 is not agentic AI vs generative AI. It is how to compose generative AI correctly inside an agentic AI system. The following is the production-verified composition for a single autonomous agent.

THE FOUR-LAYER COMPOSITION

Role in the agentic system: Goal interpretation, subtask decomposition, decision reasoning, tool input generation, natural language output, episodic summary generation

What it does NOT do: Make decisions about which memory to retrieve (the orchestration layer handles this), call tools directly (the tool registry and orchestration layer handle this), persist context between sessions (the memory architecture handles this)

Role: Multi-step execution loop management, memory read/write routing, tool call dispatch, validation gate enforcement, recall quality monitoring, re-indexing scheduling, GDPR deletion workflows

L1: Redis OSS. Working state, goal state variables, tool schema hot cache. Sub-1ms.

L2: Qdrant HNSW + BQ. Validated domain knowledge, consolidated episodic patterns. 20ms p99.

L3: Pinecone Serverless. Episodic decision log, sequential session history. Elastic p99.

Tool Memory: Weaviate hybrid BM25+dense. Versioned function schema registry. 44ms p99.

Role: Maintains current and deprecated versions of all function schemas the agent can call. Agent retrieves current schema before every tool call. Schema updates never break running sessions.

9. COST COMPARISON: GENERATIVE AI VS AGENTIC AI IN PRODUCTION

| Cost Component | Generative AI (Stateless) | Agentic AI (Sovereign Stack) | Difference |
|---|---|---|---|
| LLM API tokens (input) | ~$12,000/month | ~$300/month | 97% reduction |
| L1 Redis: working memory | $0 | $0 (co-located Docker) | - |
| L2 Qdrant: semantic memory | $0 | $0 software (DO Droplet cost below) | - |
| L3 Pinecone Serverless: episodic | $0 | $10–30/month | New infra cost |
| Weaviate: tool memory (optional) | $0 | $25/month | New infra cost |
| DigitalOcean 16GB Droplet | $0 (API-only) | $96/month | New infra cost |
| Block Storage 100GB | $0 | $10/month | New infra cost |
| Embedding (text-embedding-3-small) | Included in token cost above | ~$2–8/month | - |
| Engineering overhead (session mgmt) | High: manual per session | Low: automated lifecycle | Operational improvement |
| TOTAL MONTHLY | ~$12,000/month | ~$443–469/month | 96% reduction |
| SAVINGS | | | ~$11,531–11,557/month (~$138,372–138,684/year) |

The $143–169/month infrastructure investment for the Sovereign Agentic AI Stack pays for itself before the second day of production operation at 200 sessions/day compared to stateless generative AI context stuffing. At that volume, the daily token cost of stateless generative AI is $400. The daily infrastructure cost of agentic AI is $4.77–5.63. The infrastructure cost is less than 1.5% of the token cost it replaces. The token cost of context stuffing is linear with session volume. The infrastructure cost of agentic AI is fixed. The financial argument for agentic AI architecture is not theoretical at production scale; it is the primary engineering budget conversation.

10. PRODUCTION HARDENING FOR AGENTIC AI SYSTEMS

Agentic AI systems require production hardening that generative AI deployments do not. The following three protocols apply specifically to the agentic infrastructure layer, not to the LLM.

AGENTIC INFRASTRUCTURE HARDENING PROTOCOLS

No agent-generated content enters long-term memory without passing a validation gate. All agent outputs go to a staging Qdrant collection first. An n8n Reviewer workflow evaluates each staging record against three criteria: source validation, cosine deduplication above 0.92 threshold, and mandatory metadata tagging. Only approved records are promoted to long-term memory.
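The three review criteria can be sketched as a single gate function. This is an illustration only: the record layout, the `REQUIRED_METADATA` tags, and the `review` function are hypothetical, and only the 0.92 cosine threshold comes from the protocol described above.

```python
import math
from typing import Dict, List, Sequence, Set

DEDUP_THRESHOLD = 0.92  # from the protocol above
REQUIRED_METADATA: Set[str] = {"source", "created_at"}  # hypothetical tags


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def review(
    candidate: Dict,
    long_term: List[Dict],
    trusted_sources: Set[str],
    threshold: float = DEDUP_THRESHOLD,
) -> str:
    """Decide whether a staging record may be promoted to long-term memory."""
    meta = candidate.get("metadata", {})
    if not REQUIRED_METADATA.issubset(meta):
        return "rejected: missing metadata"
    if meta["source"] not in trusted_sources:
        return "rejected: unvalidated source"
    # Near-duplicates of existing records only add retrieval noise.
    if any(cosine(candidate["vector"], rec["vector"]) > threshold
           for rec in long_term):
        return "rejected: duplicate"
    return "approved"
```

Only records that return `"approved"` would be copied from the staging collection to long-term memory; everything else stays in staging for inspection.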

Lock the embedding model across all memory layers via a single n8n credential node. Any embedding model change triggers an immediate full re-indexing job into a parallel collection, followed by a zero-downtime alias swap, before the new model serves production queries. Never run mixed embedding model versions against the same collection.

Implement scheduled recall quality evaluation against a ground truth set of 200+ query-document pairs from the agent’s specific domain. Run the evaluation job on a daily cron schedule. Alert when recall quality falls below the domain-appropriate threshold: 90% for compliance-adjacent deployments, 85% for general enterprise, 75% for exploratory agents.
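A recall evaluation of this kind can be sketched as follows. The thresholds are the ones stated above; the `recall_at_k` and `should_alert` names, the profile keys, and the choice of k=5 are assumptions made for illustration, and `retrieve` stands in for whatever query function the memory layer exposes.

```python
from typing import Callable, Dict, List, Tuple

# Alert thresholds from the protocol above, keyed by hypothetical profile names.
THRESHOLDS: Dict[str, float] = {
    "compliance": 0.90,
    "enterprise": 0.85,
    "exploratory": 0.75,
}


def recall_at_k(
    ground_truth: List[Tuple[str, str]],        # (query, expected document id)
    retrieve: Callable[[str, int], List[str]],  # returns top-k document ids
    k: int = 5,
) -> float:
    """Fraction of queries whose expected document appears in the top k."""
    if not ground_truth:
        raise ValueError("ground truth set is empty")
    hits = sum(1 for query, expected in ground_truth
               if expected in retrieve(query, k))
    return hits / len(ground_truth)


def should_alert(recall: float, profile: str) -> bool:
    """True when recall has fallen below the threshold for this profile."""
    return recall < THRESHOLDS[profile]
```

A daily job would call `recall_at_k` with the 200+ pair ground truth set and page someone when `should_alert` returns `True`.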

11. CONCLUSION: THE ARCHITECTURE DECISION THAT DEFINES YOUR AI SYSTEM

The agentic AI vs generative AI distinction is not a marketing question. It is the architecture decision that determines what your system can do, what it will cost, how it will fail, and what it requires to operate reliably in production.

Generative AI is a solved deployment. API access, a well-engineered prompt, a human in the loop. Powerful for content creation, research assistance, and reactive task support. Fails visibly, recovers quickly, costs predictably at low volume.

Agentic AI is an architecture challenge. Memory persistence, goal state management, tool integration, autonomous decision loops, production monitoring, lifecycle management. Powerful for autonomous workflow execution, multi-session continuity, and production-scale automation. Fails silently, degrades invisibly, and scales cost-efficiently once the infrastructure is correctly built.

In 2026, the enterprises winning with AI are not the ones with the most powerful LLM. They are the ones who correctly identified which of their workflows require content generation and which require autonomous execution, and built the right architecture for each.

Generative AI creates content. Agentic AI creates outcomes.

The distinction between agentic AI vs generative AI is the distinction between a tool that helps people produce and a system that produces on behalf of people. Both have a place in the 2026 enterprise AI architecture. The error is deploying the wrong one for the job and paying the token cost, the operational degradation, and the engineering debt of discovering the mismatch in production.

Build the architecture for what your system needs to do. Not for what is easiest to deploy.

ARCHITECTURAL RESOURCES & DEEP DIVES

The 6 Tools That Bridge Generative AI and Agentic AI in Production

Every tool below is production-verified for the hybrid agentic AI architecture — generative AI reasoning on top, agentic infrastructure below. Role in the agentic AI vs generative AI system boundary specified for each tool.

st_{agent_id}_{session_id}_{variable_name}. Without session isolation in the key namespace, concurrent agent sessions overwrite each other’s working state. An agent running 10 concurrent sessions with a flat key space is running one session’s reasoning across 10 sessions simultaneously the most common concurrent-agent failure mode. Verified March 2026./var/lib/qdrant before the first vector is written. Qdrant data on a Droplet’s local SSD is deleted when the Droplet is deleted. Block Storage persists independently. A production agentic AI memory system without Block Storage has no disaster recovery path. At $10/month, this is the lowest-cost insurance in the architecture. Verified March 2026.| Tool | System Boundary | Replaces in Generative AI | Deploy When |

|---|---|---|---|

| GPT-4o / Claude | Generative AI layer | Nothing always required | Always the reasoning foundation |

| n8n (self-hosted) | Gen → Agentic boundary | Manual human task management | First agentic component to deploy |

| Qdrant (L2) | Agentic memory layer | Context window stuffing (saves 97% tokens) | Agent reads same docs repeatedly |

| Redis (L1) | Agentic memory layer | Re-deriving goal state from context | Concurrent loops running |

| Pinecone (L3) | Agentic memory layer | No session history whatsoever | Multi-session continuity required |

| DigitalOcean | Agentic infrastructure | API-only (no infrastructure needed) | Always: all agentic layers co-located here |
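
The session-scoped key pattern quoted before the table, st_{agent_id}_{session_id}_{variable_name}, can be illustrated without a running Redis server. The following is a minimal sketch, assuming a plain Python dict as a stand-in for Redis. The helper name `state_key` and the sample agent and session IDs are hypothetical and chosen only for illustration.

```python
def state_key(agent_id: str, session_id: str, variable_name: str) -> str:
    """Build a session-scoped key: st_{agent_id}_{session_id}_{variable_name}."""
    return f"st_{agent_id}_{session_id}_{variable_name}"


# Plain dicts stand in for Redis here (assumption: illustration only).
flat_store = {}
scoped_store = {}

# Two concurrent sessions of the same hypothetical agent, each with its own goal.
sessions = {"s1": "draft invoice", "s2": "reconcile ledger"}

for session_id, goal in sessions.items():
    # Flat key space: every session writes the same key, so the last write wins.
    flat_store["current_goal"] = goal
    # Session-scoped key space: each session writes to its own key.
    scoped_store[state_key("agent7", session_id, "current_goal")] = goal

# Session s1 reads its goal back from each store.
flat_goal_for_s1 = flat_store["current_goal"]
scoped_goal_for_s1 = scoped_store[state_key("agent7", "s1", "current_goal")]
```

With the flat key, session s1 reads back the goal that session s2 wrote. With the scoped key, it reads its own. With a real Redis client the same key strings would be passed to the client's get and set calls.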

Start with the LLM (GPT-4o or Claude) already in place: you have generative AI. Add n8n orchestration and you have crossed the agentic boundary. Add L2 Qdrant and you have eliminated 97% of context window token costs and gained persistent domain knowledge. Add L1 Redis and you have goal state management and concurrent session safety. Add L3 Pinecone Serverless and you have multi-session continuity and self-correction. All of this runs on DigitalOcean 16GB with Block Storage. Total migration time: one engineer, one day. Total monthly cost: $443–469 versus the $12,000/month stateless alternative. The agentic AI vs generative AI transition is not a platform change. It is an architecture layer added on top of the LLM you already use.

You Have Generative AI. You Need Agentic AI. Here Is the Architecture.

No theory. No templates. The complete four-layer agentic AI architecture built on top of the LLM you already use — and deployed on infrastructure you own.

- Agentic AI vs generative AI boundary identified in your current system

- L1/L2/L3 memory stack designed for your specific agent’s domain and loop pattern

- n8n orchestration layer — goal state management, memory routing, tool dispatch

- Qdrant L2 with validation gate — eliminating context stuffing token costs by 97%

- Pinecone L3 episodic schema with outcome metadata for self-correction

- Redis L1 session isolation — concurrent loop safety from day one

- Migration path from your current generative AI deployment — zero downtime

$12,000/Month in Tokens. $443/Month With Agentic Memory. Same Outputs.

The infrastructure investment that separates agentic AI from generative AI is $143–169/month. At 200 sessions/day it saves $11,550/month versus stateless context stuffing. The architecture review identifies exactly where your current system crosses the stateless boundary — and what it takes to cross back the right way.

AUDIT MY AI ARCHITECTURE →

12. FAQ: AGENTIC AI VS GENERATIVE AI 2026

Q1: What is the main difference between agentic AI and generative AI?

The main difference between agentic AI and generative AI is autonomy and statefulness. Generative AI creates content in response to a prompt and stops: it is reactive and stateless. Agentic AI pursues goals across multiple autonomous steps: it is proactive and stateful, maintaining persistent memory, goal state, and tool integration across sessions. In 2026, agentic AI systems use generative AI models as their reasoning engine, while the agent framework provides the autonomous goal management, memory persistence, and tool orchestration that transform a language model into a production autonomous system.

Q2: Is agentic AI better than generative AI?

Agentic AI is not better than generative AI; it is designed for a different task category. Generative AI is superior for content creation: producing text, images, code, and media at speed with human review. Agentic AI is superior for autonomous workflow execution: completing multi-step tasks without human intervention at each step. Deploying agentic AI for content generation is architectural overkill. Deploying generative AI for autonomous workflow execution produces a system that fails at scale. The correct question is not which is better, but which is correct for the specific workload.

Q3: Can agentic AI work without generative AI?

No. Every production agentic AI system in 2026 uses a generative AI model, typically GPT-4o or Claude, as its reasoning layer. The LLM provides the natural language understanding, goal interpretation, subtask decomposition, and decision reasoning that make the agent intelligent rather than rule-based. Without a generative foundation, an agentic AI system would be limited to fixed workflows and rule-based logic, with no ability to handle the natural language variability of real-world goals and data. Generative AI is a component of agentic AI: it provides the reasoning capability while the agent framework provides the autonomy.

Q4: What does “stateless” mean for generative AI and why does it matter?

Stateless means that generative AI has no memory between sessions. Each conversation or query begins with no knowledge of previous interactions unless that history is explicitly re-supplied in the current prompt. This matters at production scale because stateless systems must re-read all relevant source material on every session, producing high token costs, slow retrieval, and inconsistent outputs that do not improve with operational experience. Agentic AI is stateful by design: it maintains working state in Redis, long-term knowledge in Qdrant, and decision history in Pinecone, reducing token consumption by up to 97% compared to equivalent stateless generative AI deployments.

Q5: What infrastructure does agentic AI require that generative AI does not?

Agentic AI requires a layered memory architecture that generative AI does not need: L1 Redis for sub-millisecond working state access, L2 Qdrant HNSW with Binary Quantization for semantic memory retrieval at 20ms p99, L3 Pinecone Serverless for episodic decision history, and Weaviate for versioned tool memory with hybrid search. It also requires n8n for orchestration of multi-step execution loops, validation gates for memory quality control, recall quality monitoring for retrieval precision measurement, and scheduled re-indexing for embedding model consistency. Total infrastructure cost for a full single-agent production stack: $143–169/month on DigitalOcean.

Q6: What is Hallucination Amplification and how is it different from generative AI hallucination?

Generative AI hallucination is when the LLM generates plausible but factually incorrect content from its training distribution. Hallucination Amplification is an agentic AI failure mode where the agent’s memory architecture retrieves incorrect or unvalidated records, and the LLM reasons correctly from those wrong premises, producing confident, internally consistent, but factually wrong outputs. The model has not hallucinated. The memory has failed. This distinction matters because the fix is different: generative AI hallucination is addressed by better prompting, RAG retrieval, or model selection. Hallucination Amplification is addressed by validation gates on long-term memory writes, which is an architectural fix, not a model fix.
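
A validation gate on long-term memory writes could look something like the sketch below. It is a minimal illustration under stated assumptions, not the article's implementation: the record fields (`source`, `confidence`, `verified`), the 0.8 threshold, and the in-memory list standing in for the long-term store are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MemoryRecord:
    text: str
    source: str        # hypothetical field: where the fact came from
    confidence: float  # hypothetical field: score between 0.0 and 1.0
    verified: bool     # hypothetical field: passed an external check


MIN_CONFIDENCE = 0.8  # assumed threshold; tune per domain


def passes_gate(record: MemoryRecord) -> bool:
    """Return True only if the record has text, a source, a check, and enough confidence."""
    return (
        bool(record.text.strip())
        and bool(record.source)
        and record.verified
        and record.confidence >= MIN_CONFIDENCE
    )


def write_long_term(store: list, record: MemoryRecord) -> bool:
    """Persist the record only if it passes the gate; return the decision."""
    if not passes_gate(record):
        return False
    store.append(record)
    return True


long_term_store = []  # stand-in for the long-term vector store (assumption)
accepted = write_long_term(
    long_term_store, MemoryRecord("Refund window is 30 days", "policy.pdf", 0.95, True)
)
rejected = write_long_term(
    long_term_store, MemoryRecord("Refund window is 90 days", "", 0.40, False)
)
```

The point of the design is that the unverified record never reaches the store, so a later retrieval cannot hand it to the LLM as a premise.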

Q7: When should I use generative AI inside an agentic AI system?

Always. The question is not whether to use generative AI inside an agentic AI system (every production agentic AI system does) but which LLM to use and for which tasks within the agent’s execution loop. Use generative AI for: goal interpretation at session start, subtask description generation, decision reasoning at each loop iteration, natural language inputs to tool calls, user-facing communication outputs, and episodic memory summarization after session completion. Use the agentic AI framework for: memory routing, tool dispatch, goal state management, validation gate enforcement, and recall quality monitoring. The generative AI model should never be responsible for memory management or tool versioning. Those are architectural concerns, not language tasks.

Q8: What is the token cost difference between agentic AI and stateless generative AI at production volume?

At 200 sessions per day with 100 pages of domain knowledge per session, stateless generative AI costs approximately $12,000/month in input token costs alone (100 pages × 2K tokens × 200 sessions × $0.01 per 1K tokens = $400/day, or $12,000 over a 30-day month). Agentic AI with L2 Qdrant semantic memory retrieves 5 relevant passages per session instead, reducing token costs to approximately $300/month. Infrastructure cost adds $143–169/month. Total agentic AI monthly cost: $443–469 versus $12,000. The infrastructure investment pays for itself before the second day of production operation.
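
The arithmetic in this answer can be reproduced in a few lines. This is a sketch under stated assumptions: a 30-day month, and roughly 1K tokens per retrieved passage. The passage size is not given in the text; it is the value that reproduces the quoted $300/month figure.

```python
from decimal import Decimal

PRICE_PER_1K_TOKENS = Decimal("0.01")  # USD per 1K input tokens, from the answer above
SESSIONS_PER_DAY = 200
DAYS_PER_MONTH = 30                    # assumption: 30-day month


def monthly_token_cost(tokens_per_session: int) -> Decimal:
    """Monthly input-token cost in USD for a given per-session token load."""
    daily_tokens = tokens_per_session * SESSIONS_PER_DAY
    daily_cost = Decimal(daily_tokens) / 1000 * PRICE_PER_1K_TOKENS
    return daily_cost * DAYS_PER_MONTH


# Stateless: 100 pages at 2K tokens each are re-read on every session.
stateless_monthly = monthly_token_cost(100 * 2000)

# Agentic: 5 retrieved passages per session; 1K tokens per passage is an assumption.
agentic_tokens_monthly = monthly_token_cost(5 * 1000)

# Infrastructure range quoted in the text.
infra_low, infra_high = Decimal(143), Decimal(169)
agentic_total_low = agentic_tokens_monthly + infra_low
agentic_total_high = agentic_tokens_monthly + infra_high

# Share of token spend removed by retrieval.
token_reduction = 1 - agentic_tokens_monthly / stateless_monthly
```

Under these assumptions the token reduction works out to 97.5%, consistent with the "97%" figure used throughout.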

Q9: What is the difference between agentic AI and RPA (Robotic Process Automation)?

RPA executes predefined, fixed workflows based on rules: it automates repetitive, structured tasks that follow exactly the same path every time. Agentic AI executes flexible, goal-oriented workflows: it can adapt its approach based on the current state, retrieved memory, tool call outcomes, and reasoning about what action best advances the current goal. RPA breaks when workflows change. Agentic AI adapts. RPA cannot handle ambiguity or variability in input data. Agentic AI uses an LLM to interpret and handle natural language variability. In 2026, agentic AI is increasingly replacing RPA in enterprise automation for any workflow that requires judgment, variability handling, or a natural language interface.

Q10: What is the simplest way to understand agentic AI vs generative AI?

The simplest framing: generative AI creates content when you ask. Agentic AI completes workflows while you work on something else. If you ask a generative AI to write a follow-up email to a sales prospect, it writes the email and stops. You paste it, you send it, and you schedule the follow-up yourself. If you give the equivalent task to an agentic AI, it retrieves the prospect’s history from memory, drafts the email using an LLM, sends it via your email integration, schedules a follow-up in your calendar, updates your CRM, and logs the interaction to its episodic memory for future outreach optimization, all without further prompting. One creates. One acts.

12. FROM THE ARCHITECT’S DESK

The most common misdiagnosis I encounter in enterprise AI architecture reviews in 2026 is teams who believe they have built an AI agent and have actually built a very sophisticated generative AI interface.

The diagnostic test is simple: ask whether the system’s output quality improves with operational experience. A system with no persistent memory cannot improve. It starts every session from zero and makes the same mistakes indefinitely. A true agentic AI system retrieves its own episodic history, identifies past failure patterns, and adjusts its approach in the current session without being re-prompted.

The second diagnostic: ask what happens when a session is interrupted. A stateless generative AI system loses everything and restarts from zero. A production agentic AI system resumes from its last logged goal state in L1 Redis or L3 Pinecone, continuing from where it stopped with full context of what it had already completed.
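
The resume behaviour described in this diagnostic can be sketched as a checkpoint written after every completed step. This is a minimal illustration, assuming a Python dict as a stand-in for the L1 Redis goal-state store and JSON as the serialization format. The function name `run_session`, the `fail_at` parameter, and the step names are hypothetical.

```python
import json

checkpoint_store = {}  # stand-in for L1 Redis (assumption: illustration only)


def run_session(session_id, steps, fail_at=None):
    """Run steps in order, checkpointing after each one.

    On a later call with the same session_id, steps already recorded in the
    checkpoint are skipped, so work resumes where the previous run stopped.
    """
    raw = checkpoint_store.get(session_id)
    state = json.loads(raw) if raw else {"completed": []}
    for step in steps:
        if step in state["completed"]:
            continue  # finished in an earlier run
        if step == fail_at:
            return state["completed"]  # simulate an interruption before this step
        state["completed"].append(step)
        checkpoint_store[session_id] = json.dumps(state)
    return state["completed"]


steps = ["retrieve_history", "draft_email", "send_email", "update_crm"]
first_run = run_session("s1", steps, fail_at="send_email")  # interrupted mid-way
second_run = run_session("s1", steps)                       # resumes from checkpoint
```

A stateless system has no `checkpoint_store` to read from, so its second run would repeat the first two steps.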

The third diagnostic: ask how much the system costs in LLM tokens per month at production session volume. If the answer is more than $1,000/month and the system is accessing the same domain knowledge base repeatedly, the architecture is stateless. The token cost is the most reliable indicator of whether persistent memory has been correctly implemented.

The agentic AI vs generative AI distinction is not a feature difference. It is an architecture difference. And the architecture difference shows up in output quality, cost structure, operational reliability, and the system’s ability to improve over time.

DISCLOSURE: This post contains affiliate links. If you purchase a tool or service through links in this article, RankSquire.com may earn a commission at no additional cost to you. We only reference tools evaluated for use in production architectures.

THE ARCHITECT’S QUESTION

Is your current “AI agent” stateless?

Query your system’s token usage per session. If it is loading the same documents into context repeatedly, it is a generative AI system with an agentic label, not a production agentic AI deployment.
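
One way to run this check is to hash every document loaded into context and measure how often the same content reappears across sessions. The sketch below is a minimal illustration, assuming session logs are available as a mapping from session ID to the list of document texts loaded. The function name `repeated_document_ratio` and the sample log contents are hypothetical.

```python
import hashlib
from collections import Counter


def repeated_document_ratio(session_docs):
    """Fraction of document loads whose content was also loaded by another session."""
    sessions_per_doc = Counter()
    for docs in session_docs.values():
        # Count each distinct document at most once per session.
        digests = {hashlib.sha256(d.encode("utf-8")).hexdigest() for d in docs}
        for digest in digests:
            sessions_per_doc[digest] += 1
    total_loads = sum(sessions_per_doc.values())
    if total_loads == 0:
        return 0.0
    repeated_loads = sum(n for n in sessions_per_doc.values() if n > 1)
    return repeated_loads / total_loads


# Hypothetical log: three sessions, mostly loading the same documents.
logs = {
    "s1": ["pricing policy v3", "refund policy v2"],
    "s2": ["pricing policy v3", "refund policy v2"],
    "s3": ["pricing policy v3", "onboarding guide"],
}
ratio = repeated_document_ratio(logs)
```

A ratio close to 1.0 means most context loads repeat content that an earlier session already paid to read, which is the pattern this diagnostic is looking for.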

The fix is an architecture, not a model upgrade. Build the L1/L2/L3 Sovereign Memory Stack. Let the LLM do what LLMs do: reason. Let the memory architecture do what architecture does: persist, retrieve, and protect the context the agent needs to act correctly.