🏗️ Quick Answer (For AI Overviews & Skimmers)

Enterprise AI infrastructure in 2026 is defined by one choice: Rent or Own. The Rented Stack Zapier, Pinecone, Vercel costs $5,000 or more per month, introduces 1.5 seconds of latency per agent decision, and stores your business data on servers you do not control.

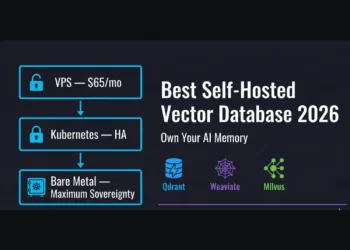

The Sovereign Stack Coolify on Hetzner, self-hosted n8n in Queue Mode, Qdrant in Docker, and local or hybrid LLM inference delivers the same capabilities for under $80 per month, with sub-10ms internal latency and full data residency compliance. This is the complete 2026 blueprint for taking your enterprise AI infrastructure back.

💼 Executive Summary: The Problem, The Shift, The Imperative

The Problem: The SaaS Tax is killing enterprise AI infrastructure at scale.

In 2024, the Modern Data Stack was defined by speed of deployment credit card SaaS tools like Zapier, OpenAI, and Pinecone. By 2026, this convenience has become critical technical debt. Companies relying on rented API layers have discovered that their enterprise AI infrastructure is fragile, expensive, and non-compliant with emerging data residency law the EU AI Act and DORA. The Black Box model, where your business logic lives on someone else’s server, has become an operational liability at the board level.

The Shift: Sovereignty as a competitive advantage.

The market is pivoting from Rented Intelligence to Sovereign Infrastructure. This is not a return to bare-metal server racks of the 1990s. It is a move toward Self-Hosted PaaS Platform as a Service on your own terms. Tools like Coolify for orchestration, n8n for logic, and Qdrant for memory allow technical teams to deploy enterprise AI infrastructure on their own Virtual Private Clouds for 10% of the cost of equivalent SaaS bundles. The result is sub-10ms latency, total data privacy, and fixed-cost scaling that does not punish you for growing.

The Imperative: Own the brain or rent your future.

For CTOs, the choice is no longer Build vs. Buy. It is Rent vs. Own. If your AI agents rely on Zapier hooks and OpenAI wrappers, you do not own your product. You rent your capabilities and your vendor can change the pricing, the terms, or the availability at any time. This document architects the Sovereign Stack: a fully controllable, private, and scalable enterprise AI infrastructure designed for the next decade of autonomous operations.

4.Introduction: Your Enterprise AI Infrastructure Is Built on Rented Land

It is 2:00 PM. Your sales team’s AI agent just processed a lead. It drafted an email, checked the CRM, and scheduled a demo.

But where did that thought happen?

If you are running the standard 2024 stack, that thought was fragmented across four different US East servers owned by three different public companies. The memory of that customer interaction lives in a Pinecone index you do not control. The logic executed on a Zapier server shared with 50,000 other tenants. The inference ran on an OpenAI cluster that may be using your data to train its next model. Your client’s PII traveled across four jurisdictions before a single word of that email was drafted.

You have built your enterprise AI infrastructure on rented land. And the landlord can raise the rent at any time.

To stabilize your enterprise AI infrastructure you must eliminate these dependencies. Latency penalties of 400ms or more per API hop kill the real-time feel of voice agents. Data leaks in shared multi-tenant vector spaces are a board-level compliance risk in 2026. And the bills $3,500 per month for Zapier Enterprise, $800 for Pinecone, $1,200 for Vercel are bleeding operational budgets dry for capabilities that cost $65 on your own hardware.

We are RankSquire. We do not build bots. We architect Sovereign Systems. This is the complete blueprint for taking your enterprise AI infrastructure back.

Table of Contents

5.The Failure Mode: Why the Rented Stack Breaks at Scale

Before architecting the solution, we must autopsy the failure. This is the SaaS Sprawl Failure Mode that collapses enterprise AI infrastructure in production.

Failure 1: The Latency Chain (The Speed of Light Problem)

In a rented stack, every agent decision is an HTTP request crossing the public internet.

Rented Stack execution path: User Request → Zapier Webhook (200ms) → OpenAI API (800ms) → Pinecone Lookup (300ms) → Zapier Response (200ms).

Total round-trip latency: 1.5 to 2.0 seconds per decision.

This is acceptable for email automation. It is catastrophic for enterprise AI infrastructure powering voice agents or real-time fraud detection. When a customer speaks to a voice agent, they expect a response in under 500ms. The physics of the public internet make this impossible on a rented stack. You are not paying for bad engineering you are paying for geography.

Failure 2: The Privacy Void (The Compliance Trap)

Sending PII to a public vector database or a proprietary LLM API is a compliance emergency in 2026, not a risk to manage later.

The EU AI Act and DORA now require enterprise AI infrastructure operators to demonstrate exactly where customer data is stored and processed down to the physical disk. You cannot satisfy this requirement with a SaaS provider. It’s in the cloud, is not a compliant answer to a GDPR audit. “It’s on our Hetzner AX102 in Frankfurt” is.

If you store customer embeddings in a multi-tenant cloud vector database, you are trusting logical separation not physical isolation to protect your clients’ data. That is a legal exposure, not an architecture.

Failure 3: The Cost Wall (The Scale Penalty)

SaaS pricing is designed to punish success. It extracts value based on usage rather than compute.

Zapier charges per task. A complex agentic loop that checks memory, reasons, selects a tool, and executes can consume 50 tasks per workflow execution. At 10,000 executions per month a modest volume for any serious enterprise AI infrastructure you are paying thousands of dollars for CPU cycles that cost cents on your own hardware.

Pinecone charges for read units. As your enterprise AI infrastructure scales and your vector memory grows with every client interaction, your read costs increase linearly. You are penalized for having more data. You are punished for success.

The verdict: The Rented Stack is excellent for prototypes. It is financially and legally untenable for production enterprise AI infrastructure at scale. prototypes and terrible for production enterprise AI infrastructure.

6. The Architecture: The 2026 Sovereign Stack for Enterprise AI Infrastructure

The 2026 Sovereign Stack is composed of five distinct layers. Each layer of your enterprise AI infrastructure must be owned, not rented. This architecture prioritizes sub-10ms internal latency, absolute data sovereignty, and fixed operational costs regardless of execution volume.

Layer 1:The Foundation (Compute and Orchestration)

The Tool: Coolify running on Hetzner AX102 dedicated hardware.

Coolify is the self-hosted PaaS that eliminates the DevOps tax. For too long, technical teams paid a 500% markup to Vercel or Heroku to avoid writing a Dockerfile. Coolify solves this with a Vercel-like deployment dashboard running on your own server one-click deployments, automatic SSL via Traefik, real-time resource monitoring, and Git-based deployments, all without touching Kubernetes.

On a Hetzner AX102 AMD Ryzen 9, 128GB RAM, NVMe storage you have more raw compute than most enterprise AI infrastructure teams will ever need at this stage of growth.

The Economics: A Hetzner AX102 costs $65 per month. The equivalent managed infrastructure on Vercel Enterprise costs $1,200 per month or more. You are not paying for better performance on Vercel. You are paying for their margin.

Sovereignty Score: 10/10. You own the metal. No noisy neighbors. No usage-based pricing. No vendor control over your deployment environment.

Layer 2: The Nervous System (Logic and State)

The Tool: n8n self-hosted in Queue Mode with Redis.

n8n is the most misunderstood tool in the enterprise AI infrastructure stack. Most CTOs dismiss it as another automation tool. This is a mistake made by people who have only used Zapier.

n8n is a workflow orchestration engine with code-native power behind a visual interface. Every data object is a JSON structure you can inspect, transform, and manipulate with full JavaScript. Every workflow is a JSON file you can commit to Git the only automation platform where you can version-control your business logic and apply proper SDLC practices to your enterprise AI infrastructure.

In Queue Mode with Redis as the message broker, n8n scales horizontally. You spin up worker containers on the same Coolify instance to handle 1 million webhooks per hour. Zapier will time out. n8n will process the queue.

Sovereignty Score: 10/10. You control the execution environment, the logs, the retry logic, the business rules, and the Git history. No black box. No shared tenancy.

Layer 3: The Memory (Vector Storage)

The Tool: Qdrant running as a Docker container on the same Coolify instance.

This is the memory layer of your enterprise AI infrastructure long-term episodic storage that allows agents to remember user preferences, past conversations, client history, and document context across every interaction.

Why not Pinecone? Pinecone is an excellent managed product. But it is a black box at a distance a public API endpoint with shared tenancy, usage-based billing, and no physical data residency guarantee.

Qdrant is open source, written in Rust for exceptional memory efficiency, and runs as a single Docker container on your existing hardware. By running Qdrant on the same server as n8n, vector retrieval latency drops from 300ms over the public internet to under 5ms on the local Docker network. Your enterprise AI infrastructure does not cross a WAN to think.

Qdrant is used in production at X (formerly Twitter), Bosch, and high-frequency trading firms. It is not hobbyist software. It is battle-tested at exactly the scale that enterprise AI infrastructure demands.

Sovereignty Score: 10/10. Your vectors, your metadata, your physical disk, your jurisdiction.

Layer 4: The Intelligence (Hybrid Inference)

The Tool: Ollama or vLLM for local inference, OpenRouter for private API access to frontier models.

The intelligence layer is where most enterprise AI infrastructure architects make an expensive mistake routing everything through GPT-4. This is the equivalent of using a Formula 1 engine to drive to the supermarket.

The Sovereign Stack uses a Router Pattern. A small, fast local model Llama 3 8B running on Ollama classifies every incoming request. Is this a billing question? Route to the billing workflow. Is this complex strategic reasoning? Route to GPT-4 via OpenRouter. Is this a simple summarization? Run it locally at zero API cost.

The economic impact of this hybrid approach on enterprise AI infrastructure is significant. Research shows 80% of agentic tasks are classification, routing, or summarization tasks where a 8B parameter local model performs at 95% of GPT-4 accuracy at 0% of the cost. The 20% of tasks requiring frontier reasoning justify the API spend because the volume is low.

Annual savings at 100,000 executions per month: Six figures compared to routing everything through a proprietary API.

Layer 5: The Interface (Human in the Loop)

The Tool: Open WebUI or a custom React frontend deployed on Coolify.

The interface layer of your enterprise AI infrastructure is not a chatbot bubble on a third-party Slack account you do not own. It is a fully controlled dashboard where humans review, approve, and override agent decisions at critical junctures.

Sovereign enterprise AI infrastructure always keeps a human as the final arbiter of high-stakes actions contract generation, large financial transactions, public-facing communications. The agent proposes. The human approves. The system logs both. This is compliance by design, not compliance by hope.terprise AI infrastructure always keeps the human as the final arbiter of critical actions.

7. The Economics The SaaS Tax Visualized

The following analysis covers a mid-sized enterprise running 100,000 complex agentic workflows per month — a realistic volume for any serious enterprise AI infrastructure deployment.

| Feature | Rented Stack | Sovereign Stack |

|---|---|---|

| Orchestration | Zapier Enterprise: ~$3,500/mo | n8n Self-Hosted: $0 |

| Hosting | Vercel Pro/Ent: ~$1,200/mo | Hetzner Dedicated: $65/mo |

| Vector DB | Pinecone Ent: ~$800/mo | Qdrant Docker: $0 |

| Latency | ~1.5s (public internet) | <10ms (internal Docker network) |

| Data Privacy | Shared tenancy, 3rd party processors | Air-gapped capable, your VPC |

| Scalability | Linear cost — grows with usage | Fixed cost — flat until hardware cap |

| Total Monthly | ~$5,500/month | ~$65/month |

This is not a theoretical savings projection. This is a real cost differential that every CFO evaluating enterprise AI infrastructure investment needs to see before the next budget cycle.

For a deeper breakdown of orchestration costs, see our full analysis: n8n vs. Zapier for Enterprise: The Hidden Cost Analysis. This economic reality is the primary driver for the migration to sovereign enterprise AI infrastructure.

8. Real World Application: Enterprise AI Infrastructure by Vertical

This is not a design exercise. This is how the Sovereign Stack operates inside the three verticals RankSquire serves.

Real Estate: The Sovereign Lead Intelligence System

A Real Estate brokerage operating enterprise AI infrastructure on the Sovereign Stack deploys a fully self-hosted lead intelligence system. Every incoming lead from every source web forms, email, phone transcriptions hits an n8n webhook, is embedded into Qdrant, and triggers a reasoning workflow on a local Ollama model for classification. Complex follow-up strategy routes to GPT-4. The result: zero data leaves the brokerage’s VPC, total lead processing cost is under $0.001 per lead, and the system processes 10,000 leads per month for under $80 total infrastructure cost.

B2B Agencies: The Sovereign Client Intelligence Stack

A B2B Agency running enterprise AI infrastructure on the Sovereign Stack ends the SaaS sprawl that has been fragmenting their client data across Zapier, HubSpot, and third-party AI tools. All client communication emails, meeting transcripts, project updates is embedded into a self-hosted Qdrant instance. Every client interaction, every deliverable, every approval is in one searchable, retrievable, sovereign memory store. Account managers stop spending the first 20 minutes of every client call re-briefing a stateless AI on who the client is.

Financial Firms : The Sovereign Compliance Infrastructure

A Financial Firm with GDPR, DORA, and EU AI Act obligations operating enterprise AI infrastructure on the Sovereign Stack hosts everything in a Hetzner Frankfurt instance physically inside the EU, on hardware they control, with audit logs they retain for the regulatory minimum of seven years. Every compliance document, regulatory update, and client agreement is embedded in local Qdrant and retrieved by an n8n workflow in under 5ms. The legal team can answer “where is this client’s data stored?” with a physical address, a server ID, and a complete audit trail. That is what compliance looks like in 2026.

9. Deep Dive n8n as the Core of Enterprise AI Infrastructure

Most CTOs who have only seen n8n in comparison to Zapier underestimate what it can do at the center of enterprise AI infrastructure. Here is what makes n8n categorically different.

JSON Primacy and Full Transparency: n8n treats every piece of data as a JSON object you can inspect in real time. You can see exactly what data is flowing through every node of your enterprise AI infrastructure. No abstraction layer. No black box. This is essential for debugging complex agent workflows where a single malformed payload can cascade into a failed execution chain.

Queue Mode and Horizontal Scaling: In Queue Mode with Redis, n8n separates the webhook receiver from the execution workers. You can run one receiver instance and twenty worker instances on the same Coolify deployment, processing millions of workflow executions per month without a timeout or a rate limit. Zapier has a hard ceiling. n8n’s ceiling is your hardware and you can always add more hardware.

Git-Native Business Logic: Every n8n workflow exports as a JSON file. You commit it to Git. You branch it. You roll it back. You review changes in pull requests. This is the only automation platform that allows proper software engineering discipline to be applied to your enterprise AI infrastructure logic.

See cluster article: n8n Webhook Integration Examples: 10 Real Payloads for Vapi, Stripe, and HubSpot for the specific JSON configurations used in production.

10. Migration Strategy: Moving Your Enterprise AI Infrastructure to Sovereignty

Do not attempt a Big Bang migration. The transition from a rented stack to sovereign enterprise AI infrastructure is a three-phase process that eliminates risk while proving value at each stage.

Phase 1: The Parallel Pilot (Weeks 1 to 2)

Select one high-volume, low-risk workflow lead scoring is ideal. Build it in n8n on Coolify. Run it in parallel to your existing Zapier setup. Compare latency, cost, and output quality side by side. This phase proves the viability of the sovereign enterprise AI infrastructure to every skeptic on the technical team without putting production systems at risk.

Phase 2: The Memory Migration (Weeks 3 to 4)

Export your vectors from Pinecone using the Pinecone export API. Import them into your self-hosted Qdrant instance using the Qdrant snapshot and migration tools. Point your new n8n workflows at the local Qdrant endpoint. This is the phase where you will see the most dramatic performance gain latency dropping from 300ms per vector lookup to under 5ms on the local Docker network. Your enterprise AI infrastructure will feel like a different system.

Phase 3: The Logic Lift (Weeks 5 to 8)

Migrate your complex business logic workflow by workflow. Rewrite Python scripts as n8n code nodes. Consolidate your fragmented Zapier zaps into auditable n8n JSON workflows committed to Git. This is the phase that transforms your enterprise AI infrastructure from a collection of third-party dependencies into a sovereign, version-controlled, observable system you own.

See the detailed implementation walkthrough: Self-Hosting n8n on Coolify: The Complete 2026 Guide.

11. Security and Compliance: Enterprise AI Infrastructure in a Regulated World

One of the most common objections to self-hosting enterprise AI infrastructure is security. The question: Can we secure this as well as Salesforce? The answer: yes, if the architecture is correct and the regulatory compliance story is actually stronger with a sovereign stack.

The VPC Advantage: By hosting on a private VPC, your enterprise AI infrastructure is not accessible from the public internet by default. You implement VPN or Tailscale mesh networking so only authorized team members access the n8n dashboard and Qdrant management interface. Your attack surface is a fraction of a multi-tenant SaaS product serving 50,000 customers from the same endpoint.

Data Residency by Design: With the EU AI Act, DORA, and GDPR, data sovereignty is no longer a compliance checkbox it is a legal requirement with criminal liability. With sovereign enterprise AI infrastructure, you choose a Hetzner data center in Frankfurt or Helsinki. Your customer data is physically located in the EU. You can point to the rack. You can produce the audit log. You cannot do this with a SaaS provider whose “EU region” is a logical designation on a shared cluster.

Audit Trails that Last: Self-hosted enterprise AI infrastructure retains full execution logs for as long as you configure storage. Seven years for financial regulation compliance. Ten years for legal document management. SaaS providers offer 30 days of logs on standard tiers and charge enterprise pricing for longer retention. On Coolify with S3-compatible MinIO storage, retention costs fractions of a cent per gigabyte per month.

Input Validation and Prompt Injection Defense: Every webhook input to your n8n enterprise AI infrastructure should be validated against a defined schema before it reaches the reasoning layer. Block prompt injection attempts at the n8n input node before they reach Ollama or GPT-4. This is defense-in-depth that a managed SaaS platform applies on your behalf invisibly, without your control, without your audit trail.

See our dedicated compliance guide: Enterprise AI Infrastructure Compliance 2026: GDPR, EU AI Act, and DORA.

12. The Technical Stack: Your 2026 Shopping List

This is the complete sovereign enterprise AI infrastructure specification. Every component is tested in production. Every cost is verified as of February 2026.

Server: Hetzner AX102, AMD Ryzen 9, 128GB ECC RAM, NVMe SSD. $65/month. The metal that runs everything.

Operating System: Ubuntu 24.04 LTS. The stable, long-term-supported foundation for your enterprise AI infrastructure.

Platform: Coolify, installed via a single curl command. The orchestration layer that eliminates DevOps complexity.

Database: PostgreSQL 16, managed within Coolify. State storage for n8n executions and application data.

Vector Store: Qdrant, official Docker image deployed via Coolify. The memory layer of your enterprise AI infrastructure.

Automation: n8n official Docker image in Queue Mode with Redis. The nervous system. The logic layer.

Reverse Proxy: Traefik, managed automatically by Coolify. Handles SSL certificates, routing, and load balancing.

Local Inference: Ollama with Llama 3 8B for classification and routing. Zero cost per classification task.

Backup: MinIO S3-compatible object storage for Qdrant snapshots and n8n execution logs. Disaster recovery included.

Total monthly infrastructure cost: $65 to $80. That is not the prototype budget. That is the production budget for enterprise AI infrastructure that processes 100,000 complex agentic workflows per month.d for enterprise AI infrastructure in 2026. It is robust, scalable, and entirely yours.

13. The Decision Framework: Is Your Organization Ready for Sovereignty?

Not every organization should migrate to sovereign enterprise AI infrastructure on the same timeline. Use this framework to determine your readiness and your starting point.

You are running Zapier and Pinecone on credit card billing with no IT oversight: You are at the Prototype stage. You have proven the use case but you have not built infrastructure. Start Phase 1 migration immediately before your volume locks you into enterprise SaaS pricing.

You have Zapier Enterprise contracts and a DevOps engineer on the team: You are at the Transition stage. The contracts are your sunk cost. The DevOps engineer is your migration asset. Run the Parallel Pilot on n8n now. Migration your highest-cost workflows first and recapture that budget within 60 days.

You have data residency obligations under GDPR, EU AI Act, or DORA: You are at the Compliance Emergency stage. You do not have the luxury of a phased timeline. You need sovereign enterprise AI infrastructure running in a compliant jurisdiction before your next regulatory audit. Move immediately.

You have sovereign infrastructure but it is fragile and undocumented: You are at the Hardening stage. Stop adding features. Implement Git-based workflow management in n8n. Add monitoring and alerting to Coolify. Document your architecture. Then scale.

You have hardened sovereign infrastructure and are scaling agent volume: You are at the Workforce Deployment stage. Evaluate horizontal worker scaling in n8n Queue Mode. Consider a second Hetzner instance for redundancy. Begin building multi-agent coordination on top of your sovereign enterprise AI infrastructure foundation.

14. Conclusion: Stop Renting. Start Building. Own Your Enterprise AI Infrastructure.

The era of the Credit Card CTO is ending.

Building your enterprise AI infrastructure on Zapier and closed-source SaaS is not agility it is negligence. It introduces latency measured in seconds when your competitors are operating in milliseconds. It surrenders your client data to vendors who view you as a row in their database. It exposes your organization to regulatory liability that compounds with every month you remain non-compliant.

The 2026 Sovereign Stack requires more skill to set up than clicking “Connect” in a Zapier interface. It requires understanding Docker, DNS, and Linux fundamentals. But the reward is an enterprise AI infrastructure that you own an operational asset, not an operational dependency. Faster. Cheaper. Compliant by design. Yours to scale, yours to audit, yours to hand to the next CTO without re-negotiating a single vendor contract.

The system is the leverage. Own the system.

Stop renting. Start building. Command your infrastructure. Command your market.

15. Frequently Asked Questions: Enterprise AI Infrastructure

What is sovereign enterprise AI infrastructure?

Sovereign enterprise AI infrastructure is a self-hosted architecture where every component of your AI stack orchestration, memory, inference, and interface runs on hardware and software you control, inside a Virtual Private Cloud you own. No shared tenancy. No vendor-controlled uptime. No usage-based pricing that punishes scale. The 2026 Sovereign Stack achieves this using Coolify on Hetzner, n8n in Queue Mode, Qdrant for vector memory, and Ollama for local inference at under $80 per month for production-grade deployment.

Is self-hosting n8n legal for enterprise use?

Yes. n8n operates under a fair-code license. It is free to use for internal business purposes. You pay only if you are reselling n8n as a product to external customers. For internal enterprise AI infrastructure automation lead processing, client intelligence, compliance retrieval, financial operations it is completely free. The self-hosted Community Edition has no feature limitations for internal use.

Do I need a full DevOps team to manage this enterprise AI infrastructure?

No. You need one competent engineer who understands Docker and Linux fundamentals. Coolify abstracts 95% of the DevOps complexity deployments, SSL, reverse proxy, resource monitoring behind a dashboard that any senior developer can operate. If your team can manage a Vercel deployment, they can manage Coolify. The difference is that Coolify runs on your server.

Is Qdrant production-ready compared to Pinecone for enterprise AI infrastructure?

Yes. Qdrant is used in production at X (formerly Twitter), Bosch, and high-frequency trading firms. Written in Rust, it delivers exceptional memory efficiency and sub-5ms query latency when self-hosted on the same network as your orchestration layer. For enterprise AI infrastructure where data residency is a compliance requirement, Qdrant’s self-hosted deployment model is the only option that satisfies GDPR and EU AI Act obligations Pinecone cannot offer physical data residency guarantees.

What is the latency difference between rented and sovereign enterprise AI infrastructure?

A rented stack Zapier, Pinecone, Vercel introduces 1.5 to 2.0 seconds of latency per agent decision cycle due to public internet routing across multiple vendor endpoints. The Sovereign Stack n8n, Qdrant, and Ollama on the same Coolify instance reduces this to under 10ms for internal network calls. For voice agents and real-time fraud detection, this is the difference between a system that works and a system that cannot be deployed.

How does sovereign enterprise AI infrastructure handle compliance with the EU AI Act and DORA?

Sovereign enterprise AI infrastructure satisfies EU AI Act and DORA requirements by design. You choose a server location in Frankfurt or Helsinki your data is physically in the EU. You retain full audit logs for as long as regulatory requirements demand. You can produce a complete record of every AI decision, every data access, and every system change to a regulatory auditor. SaaS providers offer logical EU region designation on shared infrastructure not physical data residency. In 2026, that distinction is legally material.

What happens to compliance if we use a hybrid inference approach with OpenAI?

In a hybrid enterprise AI infrastructure, only high-complexity reasoning tasks are routed to external APIs like GPT-4 via OpenRouter. Classification, summarization, and routing tasks run locally on Ollama at zero cost and zero data exposure. For the subset of tasks routed externally, ensure your OpenAI or OpenRouter agreements include data processing agreements (DPAs) that satisfy your jurisdiction’s requirements. The Sovereign Stack minimizes the volume of data leaving your VPC by handling 80% of tasks locally.

What is the realistic timeline to migrate from a rented stack to sovereign enterprise AI infrastructure?

The three-phase migration takes 6 to 8 weeks for a team with one DevOps-capable engineer. Phase 1 Parallel Pilot takes 2 weeks and proves the value without risk. Phase 2 Memory Migration takes 1 to 2 weeks and delivers the most dramatic performance gains. Phase 3 Logic Lift takes 3 to 4 weeks and depends on the complexity of existing Zapier or Make.com workflows. Organizations with compliance urgency under GDPR, EU AI Act, or DORA should compress this timeline aggressively.

14. FROM THE ARCHITECT’S DESK

I recently audited a FinTech firm in London. They were spending $12,000 per month on a Modern Stack clustered Zapier Enterprise accounts, Pinecone Enterprise for vector storage, and Vercel for frontend hosting. Their enterprise AI infrastructure was failing in production.

The latency was 4 seconds. Their voice agent was unusable. Their compliance team had raised a flag about data residency. Their CFO had flagged the $144,000 annual AI infrastructure spend to the board.

We ripped it out. We deployed a single Hetzner AX102. We installed Coolify, n8n in Queue Mode, and Qdrant in a Docker container. We moved 80% of their LLM inference to a local Ollama instance running Llama 3 8B.

The results:

Monthly cost dropped from $12,000 to $80. Latency dropped from 4 seconds to 600ms. The system processed 4 times the previous volume without a single error. Their compliance team closed the data residency flag. Their CFO eliminated the line item.

They did not need more AI. They needed better infrastructure. They needed sovereignty.

That is what enterprise AI infrastructure looks like when it is built by an Architect rather than assembled by a Hustler with a credit card.re AI. They needed better plumbing. They needed a true enterprise AI infrastructure.

The Architect’s Sovereign Stack

Every tool listed below is used in live production deployments of enterprise AI infrastructure. Not recommendations from a spec sheet — tools from the actual build.

The foundation of every sovereign enterprise AI infrastructure deployment. AMD Ryzen 9, 128GB ECC RAM, NVMe SSD — for $65 per month. The equivalent managed infrastructure on AWS or Vercel Enterprise costs $1,200 or more per month. You are not paying for better performance on AWS. You are paying for their margin. Hetzner gives you the metal. You keep the margin.

Best for: Any organization ready to stop renting compute and start owning the hardware their enterprise AI infrastructure runs on. View Server →Coolify is the self-hosted PaaS that eliminates the DevOps tax without eliminating DevOps control. One-click deployments. Automatic SSL via Traefik. Real-time resource monitoring. Git-based deployments. Everything Vercel gives you — on your own server, at your own cost, under your own control. One competent engineer can run a full sovereign enterprise AI infrastructure stack on Coolify without a dedicated DevOps team.

Best for: Technical teams who want Vercel-level deployment simplicity with Hetzner-level cost efficiency and full data sovereignty. View Tool →The core of sovereign enterprise AI infrastructure logic. n8n in Queue Mode with Redis scales horizontally to process millions of workflow executions per month without timeouts, rate limits, or per-task billing. Every workflow is a JSON file committed to Git — the only automation platform where you version-control your business logic. Built-in Python and JavaScript code nodes. Full execution logs you retain forever. The nervous system that does not forget and does not charge you per thought.

Best for: Any enterprise AI infrastructure deployment that has outgrown Zapier’s task pricing or Make.com’s execution limits. View Tool →The sovereign memory layer that gives your enterprise AI infrastructure permanent recall without Pinecone’s read-unit billing. Built in Rust. Sub-5ms vector retrieval when running on the same Docker network as n8n — compared to 300ms over the public internet to a managed cloud vector database. Used in production at X (formerly Twitter), Bosch, and high-frequency trading firms. Open source. Your VPC. Your disk. Your compliance story.

Best for: Any organization with GDPR, EU AI Act, or DORA obligations that requires physical data residency guarantees a SaaS vector database cannot provide. View Tool →The classification and routing layer of your sovereign enterprise AI infrastructure — running at zero API cost. Ollama lets you run Llama 3 8B, Mixtral, and other open-weight models locally on your Hetzner hardware. 80% of agentic tasks are classification, routing, and summarization — tasks where an 8B parameter local model performs at 95% of GPT-4 accuracy at 0% of the API cost. Stop paying frontier model pricing for supermarket-run thinking.

Best for: Architects who want to eliminate the majority of their LLM API spend without sacrificing agent reasoning quality on complex tasks. View Tool →When your enterprise AI infrastructure needs GPT-4o, Claude 3.5 Sonnet, or Gemini Ultra for high-complexity strategic reasoning — OpenRouter provides private API access with a single unified endpoint and data processing agreements suitable for regulated industries. The 20% of tasks that require frontier reasoning. The other 80% runs on Ollama at zero cost. This is how the Sovereign Stack cuts six figures from an annual AI infrastructure budget.

Best for: Organizations that need frontier model reasoning for complex tasks but want to minimize the volume of sensitive data leaving their VPC. View Tool →The relational backbone of your enterprise AI infrastructure. PostgreSQL manages n8n execution state, stores application data, and serves as the structured data layer alongside Qdrant’s vector data layer. Deployed within Coolify alongside every other component — one server, one backup, one operational overhead. The most battle-tested open-source database in existence. There is no reason to rent what PostgreSQL provides for free.

Best for: Every Sovereign Stack deployment. PostgreSQL is not optional — it is the state layer every other component depends on. View Tool →S3-compatible object storage running on your own hardware. MinIO stores Qdrant vector snapshots for instant recovery, n8n execution logs for regulatory audit retention, and any binary files your enterprise AI infrastructure needs to process. At fractions of a cent per gigabyte per month, retaining 7 years of audit logs for GDPR and financial regulation compliance costs almost nothing. AWS S3 charges you per request, per GB, and per data transfer. MinIO charges you only for the disk you already own.

Best for: Any organization with regulatory log retention requirements or disaster recovery obligations for their enterprise AI infrastructure. View Tool →Stop being a Hustler.

Become the Architect.

No demos. No templates. No misconfigured Docker containers at 2 AM. Just sovereign infrastructure that works.

You have just read the complete Sovereign Stack blueprint. You understand the five layers — Compute, Logic, Memory, Intelligence, Interface. You know the economics. You have seen the case study. The question is no longer whether sovereign enterprise AI infrastructure works. The question is whether you are going to attempt the migration yourself over the next three months — or have it architected, deployed, and handed over in 30 days.

Whether you are running a Real Estate operation, a B2B Agency, or a Financial Firm with GDPR obligations — every Sovereign Stack I build is custom-designed around your specific workflows, your compliance requirements, and your operational budget. Not a template deployment. A bespoke sovereign enterprise AI infrastructure built to run your business for the next decade.

- Hetzner dedicated server provisioned, hardened, and Coolify-deployed in your jurisdiction

- n8n in Queue Mode with Redis — your business logic migrated from Zapier or Make.com

- Qdrant deployed and populated — vectors migrated from Pinecone, memory stack operational

- Ollama + OpenRouter hybrid inference layer configured and cost-optimized

- PostgreSQL, Traefik, MinIO backup — full sovereign enterprise AI infrastructure operational

- Full handover documentation, Git repository, and 30 days of post-deployment support

Don’t Just Read the Blueprint.

Build the Fortress.

Reading about sovereignty is not sovereignty.

Every day your enterprise AI infrastructure runs on Zapier, Pinecone, and Vercel

is another day you are paying the SaaS Tax — in capital, in latency,

and in compliance exposure you cannot see on a dashboard.

The Architect does not rent. The Architect owns.

Latency: 4 seconds → 600ms.

Volume capacity: 4× increase. Zero errors.

Compliance flag: closed. CFO budget line: eliminated.

Timeline: 30 days.

We architect sovereign enterprise AI infrastructure for Real Estate operations, B2B Agencies, and Financial Firms with regulatory obligations. Not SaaS wrappers. Not template deployments. Bespoke sovereign systems built on hardware you own, in jurisdictions you control, with audit trails you keep forever.

- Sovereign Hetzner infrastructure provisioned in your chosen jurisdiction

- Coolify + n8n Queue Mode + Qdrant + Ollama — fully operational in 30 days

- Complete migration from your existing Zapier, Make.com, and Pinecone stack

- EU AI Act and GDPR compliance by design — not by hope

- Full Git handover — your business logic, version-controlled and yours

Comments 6