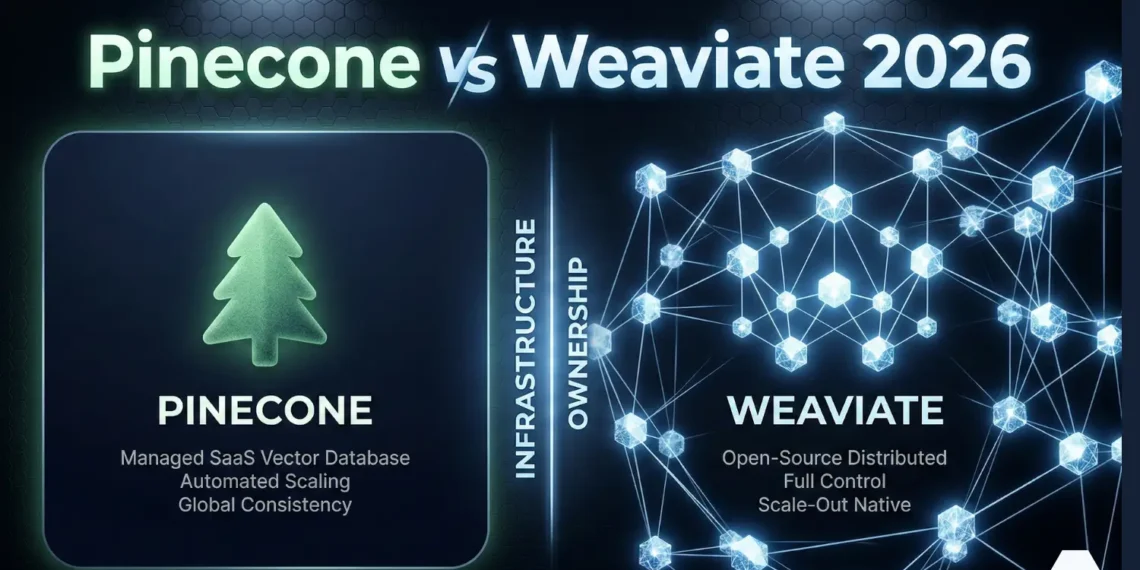

Pinecone is a fully managed, closed-source vector database delivered as cloud SaaS with serverless and dedicated deployment modes, designed for teams that require zero infrastructure management and production-grade retrieval at enterprise scale.

Weaviate is an open-source, AI-native vector database built in Go that supports self-hosted, bring-your-own-cloud, and managed cloud deployment, distinguished by native hybrid search combining dense vector similarity with BM25 keyword retrieval in a single API call.

Both are purpose-built for Retrieval-Augmented Generation (RAG) pipelines, semantic search, and AI agent memory architectures, differing primarily in infrastructure ownership model, hybrid search implementation, and multi-tenancy design.

As of January 2026, Pinecone operates on a serverless object-storage architecture with Dedicated Read Nodes (DRN) for sustained throughput; Weaviate 1.34 operates on HNSW + inverted index with hot/warm/cold tenant storage tiering.

💼 Executive Summary

Definition: Pinecone vs Weaviate is not a binary performance question. It is an infrastructure ownership question. Both databases deliver production-grade vector retrieval. The architectural divergence begins the moment your requirements extend beyond pure vector similarity search into hybrid retrieval, compliance isolation, multi-tenant data separation, and sovereign deployment.

Problem: Engineering teams evaluating these two databases under time pressure default to surface comparisons (latency numbers and pricing tiers) and ignore the architectural constraints that will determine their migration cost at 50 million vectors. The wrong choice at prototype stage becomes a re-architecture at production scale.

Solution: This guide evaluates Pinecone and Weaviate across six decision-critical dimensions: architecture model, scaling mechanism, hybrid search implementation, pricing at three operational scales, production reliability, and use-case verdict by deployment profile. Each dimension produces a binary verdict. No ambiguity.

Key Takeaway: 2026 Infrastructure Law: Teams that need to retrieve across meaning and exact terminology in one query will find Weaviate’s native hybrid search architecturally cleaner. Teams that need zero infrastructure management with guaranteed SLA at billion-vector scale will find Pinecone’s serverless and Dedicated Read Node architecture superior. Choose the infrastructure model that matches your compliance posture, not the one with the lower entry price.

1. Introduction: Two Databases, Two Infrastructure Philosophies

You have narrowed your vector database evaluation to two candidates. That decision already tells the Architect something about where you are building: past prototyping, past generic research, and into the territory where infrastructure choices produce architectural lock-in or architectural freedom.

The comparison of Pinecone vs Weaviate is not a performance race. At production scale, both databases return query results in sub-100ms. The race ends at the infrastructure model. Pinecone was engineered for teams that treat the database as a service: an API endpoint that accepts vectors, stores them, and returns ranked results without a single DevOps decision. Weaviate was engineered for teams that treat the database as infrastructure: a component that lives inside their architecture, governed by their compliance team, deployed in their VPC, and owned by their engineering organization.


That philosophical divide (managed SaaS versus open core) is the lens through which every dimension of this comparison resolves. Architecture differences are not arbitrary. They are the downstream consequence of two engineering organizations solving the same problem from opposite infrastructure assumptions.

2. The Failure Mode: What Goes Wrong When You Choose Wrong

Teams that select Pinecone for compliance-restricted environments discover, at production readiness review, that their vector data sits in a third-party managed cloud by default, outside the perimeter that their legal and security teams approved. Migrating 50 million vectors out of Pinecone’s serverless indexes to a self-hosted Weaviate instance under a 30-day deadline is an engineering crisis, not an engineering project.

Teams that select Weaviate for zero-ops agencies discover that ‘self-hosted’ means someone on the team owns cluster configuration, HNSW parameter tuning, backup schedules, and incident response. For a 3-person agency building client automation workflows, that ownership cost is a non-trivial tax on every sprint.

The three failure vectors that produce the most re-architecture work:

Choosing Pinecone when the use case requires simultaneous keyword and semantic retrieval, then discovering that Pinecone’s sparse-dense hybrid architecture requires a separate sparse encoding pipeline and incurs additional storage costs per sparse vector. Teams running legal document retrieval, e-commerce product search, or compliance clause extraction with exact terminology requirements consistently encounter this ceiling.

Selecting Weaviate Shared Cloud without understanding that HIPAA compliance on Weaviate is only available on Enterprise Cloud on AWS as of January 2026, with Azure and GCP support in active development. Healthcare and financial services teams that begin on Shared Cloud and later face audit requirements must re-provision and migrate.

Committing to Pinecone Standard without modeling read-unit consumption. Standard bills $0.33/GB of storage and $16 per million read units, and the read units a query consumes scale with the volume of data it scans rather than being a flat per-query charge. At 100 million vectors with high query throughput, this billing model compounds rapidly. Teams that did not model at-scale cost before committing to a managed service architecture encounter this at the worst possible moment.

3. Methodology: How This Comparison Was Built

Methodology Transparency

| Data Point | Source | Date Verified |
|---|---|---|
| Pinecone DRN benchmarks (135M vec, 600 QPS, P50 45ms, P99 96ms) | Pinecone official blog: DRN release notes | December 2025 |
| Pinecone 1.4B vector benchmark (5,700 QPS, P99 60ms) | Pinecone customer benchmark: official publication | December 2025 |
| Pinecone pricing (Standard $50/mo min, $0.33/GB, $16/M read units) | pinecone.io/pricing: official pricing page | October 2025 |
| Pinecone Enterprise pricing ($500/mo min, HIPAA attestation) | pinecone.io/pricing: official pricing page | October 2025 |
| Pinecone BYOC general availability (AWS, Azure, GCP) | Pinecone official release notes | 2024 GA |
| Weaviate v1.34 features (flat index, RQ, server-side batching) | weaviate.io/blog: release notes | Early 2026 |
| Weaviate Shared Cloud pricing (from $25/mo, 99.5% SLA) | weaviate.io/pricing: official pricing page | October 2025 |
| Weaviate HIPAA on AWS Enterprise Cloud | weaviate.io/security: compliance page | 2025 |
| Embedding assumption: text-embedding-3-small, 1536-dim | OpenAI official docs | January 2026 |
| HNSW default parameters: ef=128, M=16 (standard production config) | Weaviate + Pinecone documentation | January 2026 |

External Authority References

| Resource | URL | Purpose |
|---|---|---|
| Pinecone Pricing | pinecone.io/pricing | Pricing tiers, read unit billing, BYOC details |
| Pinecone DRN Release | pinecone.io/blog/dedicated-read-replicas | DRN benchmarks: 135M and 1.4B vector tests |
| Pinecone Docs | docs.pinecone.io | Architecture, index types, hybrid search implementation |
| Weaviate Pricing | weaviate.io/pricing | Shared Cloud, Dedicated Cloud, BYOC pricing |
| Weaviate Security | weaviate.io/security | SOC 2, HIPAA, GDPR compliance documentation |
| Weaviate v1.34 Release | weaviate.io/blog/weaviate-1-34-release | Feature verification: flat index, RQ, batching |
| Weaviate GitHub | github.com/weaviate/weaviate | Open-source verification, license, star count |
| OpenAI Embeddings | platform.openai.com/docs/guides/embeddings | Embedding model specs, pricing, dimension counts |

4. Architecture: The Infrastructure Ownership Divide

Pinecone: Managed SaaS Architecture

Pinecone operates on a serverless architecture with object storage at its foundation. The separation of reads, writes, and storage enables independent scaling of each resource layer. Compute scales automatically with query load. Storage scales independently with vector count. This eliminates pod sizing calculations, a significant operational burden in earlier vector database deployments.

The Dedicated Read Nodes (DRN) layer, released in public preview in December 2025, adds provisioned read capacity for workloads requiring predictable low-latency performance at sustained throughput. Verified benchmarks from the official DRN release: 135 million vectors at 600 QPS with P50 45ms and P99 96ms, load-tested to 2,200 QPS with P50 60ms and P99 99ms. A published customer benchmark on 1.4 billion vectors achieved 5,700 QPS at P50 26ms and P99 60ms.
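P50 and P99 figures summarize a distribution of per-query latencies. The Python sketch below applies the nearest-rank percentile method to made-up samples (not Pinecone's data, and not necessarily the method Pinecone's benchmark used) to show why P99 tracks the slowest 1% of queries while P50 tracks the typical one:

```python
import math


def nearest_rank_percentile(samples_ms, p):
    """Return the p-th percentile (0 < p <= 100) of latency samples, nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    # Smallest sample such that at least p% of all samples are <= it.
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Made-up latencies for 100 queries: 98 between 40 and 49 ms, plus two slow outliers.
latencies = [40 + (i % 10) for i in range(98)] + [95, 120]
p50 = nearest_rank_percentile(latencies, 50)
p99 = nearest_rank_percentile(latencies, 99)
print(f"P50={p50} ms, P99={p99} ms")  # P50=44 ms, P99=95 ms
```

Two outliers out of 100 barely move P50 but set P99, which is why tail latency is the figure to watch for user-facing retrieval.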

Weaviate: Open Core Architecture

Weaviate is built in Go and deployed as a single-node or distributed cluster. The schema is class-based: each object stores both its properties and its vector representation together, enabling joint filtering and retrieval without secondary lookups. The architecture treats the inverted index (BM25) and the HNSW vector index as first-class citizens of the same query engine, not as separate systems stitched together.
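A toy, in-memory Python model of that co-location idea, assuming nothing about Weaviate's real storage engine: each record carries its properties and its vector together, so a keyword filter resolved through an inverted index can be scored directly against the stored vectors. Brute-force cosine similarity stands in for HNSW, and `StoredObject` and `ToyCollection` are hypothetical names:

```python
import math
from dataclasses import dataclass, field


@dataclass
class StoredObject:
    # Toy model only: properties and vector live in the same record.
    uuid: str
    properties: dict
    vector: list


@dataclass
class ToyCollection:
    objects: dict = field(default_factory=dict)   # uuid -> StoredObject
    inverted: dict = field(default_factory=dict)  # token -> set of uuids

    def add(self, obj):
        self.objects[obj.uuid] = obj
        for value in obj.properties.values():
            if isinstance(value, str):
                for token in value.lower().split():
                    self.inverted.setdefault(token, set()).add(obj.uuid)

    def filtered_near_vector(self, token, query_vector, limit=3):
        """Keyword filter via the inverted index, then rank matches by cosine similarity."""
        def cosine(a, b):
            # Assumes non-zero vectors.
            return sum(x * y for x, y in zip(a, b)) / (math.hypot(*a) * math.hypot(*b))

        candidates = self.inverted.get(token.lower(), set())
        # The vector comes from the same record the filter matched: no second lookup.
        scored = sorted(
            ((cosine(self.objects[u].vector, query_vector), u) for u in candidates),
            reverse=True,
        )
        return [u for _, u in scored[:limit]]


coll = ToyCollection()
coll.add(StoredObject("a", {"title": "termination clause"}, [1.0, 0.0]))
coll.add(StoredObject("b", {"title": "termination rights"}, [0.6, 0.8]))
coll.add(StoredObject("c", {"title": "payment terms"}, [1.0, 0.0]))
print(coll.filtered_near_vector("termination", [1.0, 0.0]))  # ['a', 'b']
```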

Deployment options span the full infrastructure spectrum: local Docker for development, self-hosted on any Kubernetes cluster or VPS, Weaviate Shared Cloud (multi-tenant managed), Weaviate Dedicated Cloud (single-tenant managed), and BYOC, where Weaviate manages the database inside your cloud account. Weaviate 1.34, current as of early 2026, added flat index support with Rotational Quantization, server-side batching, and new C# and Java client libraries. Version 1.32 introduced collection aliases for zero-downtime migrations.

Architecture Comparison Table

| Dimension | Pinecone | Weaviate |
|---|---|---|
| Foundation | Closed-source managed SaaS | Open-source (BSD-3 license) |
| Build Language | Proprietary (Rust-optimized core) | Go |
| Index Types | HNSW + proprietary blob storage | HNSW, flat, dynamic, quantized (RQ) |
| Storage Model | Object storage (serverless) | Local disk + optional S3 offload |
| Deployment Modes | Serverless, Dedicated, BYOC (AWS/Azure/GCP) | Local, self-hosted K8s/VPS, Shared Cloud, Dedicated, BYOC |
| Air-Gap / Bare Metal | Not supported | Supported (self-hosted) |
| GraphQL API | No | Yes: native GraphQL + REST + gRPC |
| Auto-Vectorization | No: bring your own embeddings | Yes: integrated vectorizers at import |
| Multi-tenancy Model | Namespace-based logical isolation | Native shard-per-tenant with storage tiering |

5. Scaling Model: Serverless vs Cluster Orchestration

How Pinecone Scales

Pinecone's serverless architecture handles scaling without operator intervention. Write capacity, read capacity, and storage each scale independently. For workloads with unpredictable traffic spikes, this is architecturally superior to any provisioned-capacity model: the system absorbs traffic without manual replication adjustments. The on-demand pricing reflects this. You pay per operation consumed, not per server provisioned.

For sustained high-throughput workloads, the Dedicated Read Node tier provides provisioned read capacity: reserved nodes per index with no shared-queue contention. Scaling on DRN is explicit: add replicas for throughput, add shards for storage capacity. No auto-scaling ambiguity.
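Because DRN scaling is explicit, sizing reduces to two ceiling divisions. The Python helper below is hypothetical: the per-replica QPS and per-shard capacity are placeholders you would measure or obtain from Pinecone, not published figures, and the node total assumes every replica holds a copy of every shard, which should be confirmed against Pinecone's DRN documentation:

```python
import math


def plan_read_capacity(target_qps, qps_per_replica, index_gb, gb_per_shard):
    """Hypothetical sizing: replicas cover throughput, shards cover storage."""
    if min(target_qps, qps_per_replica, index_gb, gb_per_shard) <= 0:
        raise ValueError("all inputs must be positive")
    replicas = math.ceil(target_qps / qps_per_replica)
    shards = math.ceil(index_gb / gb_per_shard)
    # Assumption: every replica holds a copy of every shard.
    return {"replicas": replicas, "shards": shards, "nodes": replicas * shards}


# Placeholder capacities, not Pinecone figures.
plan = plan_read_capacity(target_qps=1500, qps_per_replica=500, index_gb=300, gb_per_shard=250)
print(plan)  # {'replicas': 3, 'shards': 2, 'nodes': 6}
```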

How Weaviate Scales

Weaviate scales through cluster expansion on Kubernetes. Horizontal scaling distributes shards across nodes. For multi-tenant architectures, Weaviate's native multi-tenancy enables tenant-level lifecycle management: active tenants in hot storage (RAM-backed), inactive tenants offloaded to warm or cold storage (AWS S3), reactivated automatically on query arrival.

This tiered storage architecture changes the economics of large-scale multi-tenant deployments materially. A SaaS platform with 80,000 tenants where 80% are inactive during off-peak hours can offload inactive tenants to cold storage automatically, reducing memory requirements without changing the API surface for active users.
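A toy Python state machine for that lifecycle illustrates the memory arithmetic of the 80,000-tenant example, where 80% inactive leaves 16,000 hot tenants. It is not Weaviate's API: the class names are hypothetical, the 50 MB per hot tenant is a made-up figure, and the "warm = local disk" reading is an assumption. It only models the hot/warm/cold tiers and reactivation on query arrival described above:

```python
from enum import Enum


class TenantTier(Enum):
    HOT = "hot"    # loaded and served from memory
    WARM = "warm"  # assumed: on local disk, not in memory
    COLD = "cold"  # offloaded to object storage such as S3


class ToyTenantManager:
    """Toy model of per-tenant tiering; not Weaviate's implementation."""

    def __init__(self, ram_mb_per_hot_tenant):
        self.ram_mb_per_hot_tenant = ram_mb_per_hot_tenant
        self.tiers = {}

    def set_tier(self, tenant, tier):
        self.tiers[tenant] = tier

    def query(self, tenant):
        # Auto-reactivation: a query against a non-hot tenant promotes it to HOT first.
        if self.tiers[tenant] is not TenantTier.HOT:
            self.tiers[tenant] = TenantTier.HOT
        return f"results for {tenant}"

    def hot_ram_mb(self):
        hot = sum(1 for tier in self.tiers.values() if tier is TenantTier.HOT)
        return hot * self.ram_mb_per_hot_tenant


manager = ToyTenantManager(ram_mb_per_hot_tenant=50)
for i in range(80_000):
    manager.set_tier(f"tenant-{i}", TenantTier.HOT if i < 16_000 else TenantTier.COLD)
print(manager.hot_ram_mb())  # 800000 (vs 4000000 if all 80,000 stayed hot)
manager.query("tenant-79999")  # a cold tenant is reactivated on query arrival
print(manager.hot_ram_mb())  # 800050
```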

6. Hybrid Search: The Critical Architectural Difference

This is the dimension where architectural divergence between Pinecone and Weaviate is most operationally significant. Both databases support hybrid search. The implementation model is materially different, and the difference affects ingestion pipeline complexity, storage cost, and retrieval precision.

Weaviate Hybrid Search: Native BM25 + Dense in One Query

Weaviate maintains an inverted index alongside its HNSW vector index as a core architectural component, not a secondary system. When you execute a hybrid query, Weaviate runs BM25 keyword search and dense vector search simultaneously within the same process, then fuses the results using Reciprocal Rank Fusion (RRF, exposed in Weaviate as rankedFusion) or relativeScoreFusion.
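The keyword half of that hybrid query is BM25 scoring. The Python sketch below is the textbook Okapi BM25 formula with a Lucene-style IDF and the commonly used parameters k1=1.2 and b=0.75 (also Weaviate's documented defaults). Weaviate's real tokenization, stopword handling, and index layout differ, and the sample clauses are invented:

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Okapi BM25 with a Lucene-style IDF; returns one score per document."""
    tokenized = [d.lower().split() for d in docs]  # naive whitespace tokenizer
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            df = sum(1 for t in tokenized if term in t)  # documents containing the term
            if df == 0 or tf[term] == 0:
                continue
            idf = math.log(1 + (n_docs - df + 0.5) / (df + 0.5))
            # Term-frequency saturation (k1) and document-length normalization (b).
            norm = tf[term] * (k1 + 1) / (tf[term] + k1 * (1 - b + b * len(tokens) / avg_len))
            score += idf * norm
        scores.append(score)
    return scores


docs = [
    "material adverse change clause",
    "termination rights on material breach",
    "payment schedule",
]
scores = bm25_scores("material adverse change", docs)
print([round(s, 3) for s in scores])
```

Note that BM25 only matches surface tokens: a query for "MAC provision" scores zero against all three clauses, which is the gap dense vectors are meant to cover in a hybrid query.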

RRF merges result sets by rank position: each document's fused score is the sum of 1/(k + rank) across both result lists, where k is a constant (typically 60). Because it uses only rank positions, RRF is score-agnostic: it does not require score normalization between the two indexes. Weaviate's relativeScoreFusion alternative instead min-max normalizes each result list's scores to the range 0 to 1 before combining them, preserving the relative gaps between the original scores; this can perform better when the two indexes produce significantly different score distributions.
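A minimal Python sketch of plain RRF with k=60 follows, fusing two hypothetical ranked ID lists (one from BM25, one from vector search). It omits the alpha weighting Weaviate applies on top, so treat it as the textbook formula rather than Weaviate's exact scoring:

```python
def reciprocal_rank_fusion(result_lists, k=60):
    """Fuse ranked lists of document IDs: score(d) = sum over lists of 1 / (k + rank)."""
    fused = {}
    for results in result_lists:
        for rank, doc_id in enumerate(results, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for deterministic output.
    return sorted(fused, key=lambda d: (-fused[d], d))


bm25_ranking = ["mac-clause", "breach-notice", "indemnity"]
vector_ranking = ["termination-rights", "mac-clause", "breach-notice"]
fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
print(fused)  # ['mac-clause', 'breach-notice', 'termination-rights', 'indemnity']
```

Documents returned by both retrievers (mac-clause, breach-notice) outrank termination-rights, which tops the vector list alone, without any score normalization.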

The alpha parameter controls the balance: alpha=1.0 executes pure dense vector search, alpha=0.0 executes pure BM25, and intermediate values blend the two. The keyword index carries no additional storage charge: it is a native component of the collection schema.
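Combining the alpha weighting with score-based fusion looks roughly like the Python sketch below, which follows the relativeScoreFusion description (min-max normalize each list, then blend alpha * vector + (1 - alpha) * keyword). It is not Weaviate's code; the scores are invented, and treating an all-equal score list as all 1.0 is this sketch's own choice:

```python
def relative_score_fusion(vector_scores, keyword_scores, alpha=0.5):
    """Min-max normalize each score map to [0, 1], then blend alpha*vector + (1-alpha)*keyword."""
    def normalize(scores):
        if not scores:
            return {}
        lo, hi = min(scores.values()), max(scores.values())
        span = hi - lo
        # Sketch choice: if every score is equal, treat each hit as a top score.
        return {d: (s - lo) / span if span else 1.0 for d, s in scores.items()}

    vec, kw = normalize(vector_scores), normalize(keyword_scores)
    fused = {}
    for d in set(vec) | set(kw):
        # A document missing from one list contributes 0 from that list.
        fused[d] = alpha * vec.get(d, 0.0) + (1 - alpha) * kw.get(d, 0.0)
    return sorted(fused, key=lambda d: (-fused[d], d))


vector_scores = {"a": 0.92, "b": 0.90, "c": 0.40}  # e.g. cosine similarities
keyword_scores = {"b": 2.0, "c": 9.5}              # e.g. raw BM25 scores
for alpha in (1.0, 0.5, 0.0):
    print(alpha, relative_score_fusion(vector_scores, keyword_scores, alpha))
# 1.0 ['a', 'b', 'c']
# 0.5 ['a', 'c', 'b']
# 0.0 ['c', 'a', 'b']
```

At alpha=1.0 only the vector ranking matters and at alpha=0.0 only BM25 does, matching the alpha semantics described above.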

Pinecone Hybrid Search: Sparse-Dense Architecture

Pinecone's hybrid search uses sparse vectors: high-dimensional vectors that consist mostly of zeros, typically 30,000 to 100,000 dimensions, where each non-zero dimension corresponds to a vocabulary token. These are generated by SPLADE (Sparse Lexical and Expansion Model), originally developed at NAVER Labs, by BM25-based encoders, or by Pinecone's hosted pinecone-sparse-english-v0 model.

SPLADE-generated sparse vectors encode not just the terms present in a document but also semantically related terms through learned expansion, making them more expressive than traditional BM25 bag-of-words representations. The tradeoff is the encoding step: sparse vectors must be generated before ingestion via an external model call or Pinecone's hosted sparse model. Pinecone bills sparse vectors as additional storage, separate from dense vector storage, because the 30k–100k dimension sparse representation occupies different index infrastructure than the dense HNSW index.
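In practice a sparse vector is stored as parallel `indices`/`values` lists holding only the non-zero entries. The Python sketch below models the convex-combination weighting pattern described in Pinecone's hybrid search documentation for dotproduct indexes: scale the dense query by alpha and the sparse query by (1 - alpha), so a single dot product blends both signals. The token IDs and weights are made up, not real SPLADE output, and the helper names are hypothetical:

```python
def sparse_dot(a, b):
    """Dot product of two sparse vectors in {'indices': [...], 'values': [...]} form."""
    weights = dict(zip(a["indices"], a["values"]))
    return sum(weights.get(i, 0.0) * v for i, v in zip(b["indices"], b["values"]))


def dense_dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def weight_hybrid_query(dense, sparse, alpha):
    """Scale dense by alpha and sparse by (1 - alpha) before querying."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    weighted_sparse = {
        "indices": list(sparse["indices"]),
        "values": [v * (1 - alpha) for v in sparse["values"]],
    }
    return [v * alpha for v in dense], weighted_sparse


def hybrid_score(query_dense, query_sparse, doc_dense, doc_sparse):
    # Dotproduct over the concatenated dense + sparse representation.
    return dense_dot(query_dense, doc_dense) + sparse_dot(query_sparse, doc_sparse)


q_dense = [1.0, 0.0]
q_sparse = {"indices": [101, 2048], "values": [0.8, 0.3]}  # made-up token IDs/weights
doc_dense = [0.5, 0.5]
doc_sparse = {"indices": [2048, 7], "values": [1.0, 0.4]}

for alpha in (1.0, 0.75, 0.0):
    wd, ws = weight_hybrid_query(q_dense, q_sparse, alpha)
    print(alpha, round(hybrid_score(wd, ws, doc_dense, doc_sparse), 3))
```

Only token 2048 overlaps between query and document, so the sparse side contributes 0.3 before weighting; only the non-zero entries are ever stored or multiplied.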

Hybrid Search Architecture Comparison

| Dimension | Pinecone | Weaviate |
|---|---|---|
| Keyword Search Method | Sparse vectors (SPLADE / BM25 encoders, 30k–100k dim) | Native BM25 inverted index |
| Architecture | Sparse-dense combined index | Native joint query engine |
| Fusion Method | Score weighting (alpha parameter) | RRF (rank-based) or relativeScoreFusion (score-based) |
| Extra Storage Cost | Yes: sparse vectors billed as separate storage | No: inverted index is native to collection schema |
| Ingestion Overhead | Sparse encoding step required before upsert | Automatic via integrated vectorizer |
| SPLADE Expansion | Yes: learned term expansion via SPLADE | BM25 only, no learned expansion |
| Single API Call | Yes: single hybrid index query | Yes: native hybrid in one query |
| Keyword-Only Queries | Requires sparse-only index | Native BM25 query, no additional index |

7. Economics: Pricing Simulation at Scale

Pricing at scale is an architectural decision. Numbers below are derived from verified official pricing sources updated October–December 2025. Actual costs vary with query volume, dimension count, compression settings, and region.

Pinecone Billing Model: How Read Units Work

Pinecone's read-unit billing is a critical detail that most cost comparisons omit: read units scale with the amount of data a query scans, not with the number of queries submitted. Pinecone's cost documentation ties the read units consumed by a serverless query to the size of the namespace being queried, so the same top-10 query costs more as the namespace grows. At $16 per million read units on the Standard plan, a high-throughput workload against large namespaces will generate a materially higher bill than a flat per-query estimate suggests.

- Starter (Free): 2GB storage, 2M write units/month, 1M read units/month. AWS us-east-1 only. Max 5 indexes. No production SLA.

- Standard: $50/month minimum. $0.33/GB/month storage. $4/million write units. $16/million read units, consumed according to the data each query scans. All regions on AWS, Azure, GCP.

- Enterprise: $500/month minimum. $6/million write units. $24/million read units. HIPAA attestation, private networking, customer-managed encryption keys, audit logs.

- BYOC: Custom pricing. Pinecone infrastructure runs inside your cloud account. No Pinecone SSH/VPN access required.
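The Standard list prices above combine into a monthly estimate roughly as follows. This Python sketch is not Pinecone's billing logic: it assumes the plan minimum acts as a floor on total usage, ignores free allowances and taxes, and takes `read_units_per_query` as an input because the read units a query consumes depend on how much data it scans. Measure that figure for your own namespaces; the example values are made up:

```python
def estimate_pinecone_monthly_bill(storage_gb, write_units_millions, queries_per_month,
                                   read_units_per_query, usd_per_gb=0.33,
                                   usd_per_million_wu=4.0, usd_per_million_ru=16.0,
                                   monthly_minimum=50.0):
    """Standard-plan list prices; the result is floored at the plan minimum."""
    read_units_millions = queries_per_month * read_units_per_query / 1_000_000
    usage = (storage_gb * usd_per_gb
             + write_units_millions * usd_per_million_wu
             + read_units_millions * usd_per_million_ru)
    # Assumption: the minimum is a floor on total usage charges.
    return max(usage, monthly_minimum)


small = estimate_pinecone_monthly_bill(1, 0.1, 100_000, 5)   # usage about $8.73, so $50 minimum
busy = estimate_pinecone_monthly_bill(60, 2, 300_000, 6)     # usage about $56.60
print(round(small, 2), round(busy, 2))  # 50.0 56.6
```

The read term dominates as query volume grows, because it multiplies queries by a per-query read-unit cost that itself rises with namespace size.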

Weaviate Billing Model: Dimension-Based Storage

Weaviate Shared Cloud pricing is based on the number of vector dimensions stored, not the number of vectors. The formula: total_dimensions = vector_count × dimension_size. At 1 million vectors with 1,536-dimension embeddings (text-embedding-3-small), total stored dimensions = 1.536 billion. Weaviate's pricing applies a per-dimension-per-month rate at the applicable tier. Compression via Product Quantization (PQ) or Binary Quantization (BQ) reduces stored dimensions by 4x to 32x, materially reducing the billable unit count at scale.
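The dimension formula is easy to check directly. This Python sketch computes stored dimensions for the 1M-vector, 1,536-dim example and applies the ~4x (PQ) and ~32x (BQ) reduction factors quoted in this section as assumptions. It deliberately leaves out a per-dimension price, since that rate varies by tier and should be taken from Weaviate's pricing page:

```python
def stored_dimensions(vector_count, dims, compression_factor=1):
    """Total stored dimensions = vector_count x dims, divided by an assumed compression factor."""
    if compression_factor < 1:
        raise ValueError("compression_factor must be >= 1")
    return vector_count * dims / compression_factor


raw = stored_dimensions(1_000_000, 1536)     # 1.536 billion dimensions
pq = stored_dimensions(1_000_000, 1536, 4)   # ~4x reduction attributed to PQ
bq = stored_dimensions(1_000_000, 1536, 32)  # ~32x reduction attributed to BQ
print(f"{raw:,.0f} / {pq:,.0f} / {bq:,.0f}")  # 1,536,000,000 / 384,000,000 / 48,000,000
print(f"BQ reduction: {1 - bq / raw:.2%}")    # BQ reduction: 96.88%
```

A 32x factor removes 1 - 1/32 = 96.875% of billable dimensions, which is where the "up to 97%" figure in the pricing table comes from.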

- Free Sandbox: Shared cluster, full hybrid search toolkit, community support. No SLA. Prototyping only.

- Shared Cloud (Pay-as-you-go): From $25/month. Dimension-based billing. 99.5% uptime SLA. Next-business-day Severity 1 response.

- Dedicated Cloud: Contact sales. Single-tenant dedicated resources. HIPAA on AWS. 1-hour Severity 1 response. Hot/warm/cold tenant storage tiers.

- BYOC: Contact sales. Weaviate manages deployment inside your AWS, GCP, or Azure account.

Pricing Simulation: Three Operational Scales

| Scale | Pinecone Estimated Cost | Weaviate Estimated Cost | Notes |
|---|---|---|---|
| 1M vectors (1536-dim) | $50/mo (Standard minimum) | $25–$50/mo (Shared Cloud, no compression) | Similar entry cost. Weaviate lower with PQ/BQ compression enabled. |
| 10M vectors (1536-dim) | $80–$200/mo (Standard, moderate-high QPS) | $100–$200/mo (Shared Cloud, no compression) | Pinecone advantage on high-QPS. Weaviate lower on low-QPS or with compression. |
| 100M vectors (1536-dim) | $500–$1,500+/mo (Standard/Enterprise) | Dedicated Cloud: contact sales | Weaviate self-hosted eliminates managed cost entirely at this scale. |
| 100M+ self-hosted | Not supported (SaaS only) | $0 software + infrastructure only | Weaviate OSS on your VPS or K8s eliminates all per-vector managed fees. |
| Compression impact | Not applicable (managed) | PQ: ~4x reduction. BQ: ~32x reduction in stored dimensions | BQ reduces Weaviate dimension billing by up to 97% with minor recall tradeoff. |

8. Production Reliability: SLA, Multi-Region, Compliance

Service Level Agreements

| Dimension | Pinecone | Weaviate |
|---|---|---|
| Uptime SLA (Paid) | Enterprise-grade (contact for exact figures) | 99.5% on Shared Cloud; enhanced on Dedicated |
| Severity 1 Response | Enterprise plan: contact for SLA terms | Next-business-day (Shared); 1-hour (Dedicated) |
| Backup / Restore | API-based backup/restore (Standard+, public preview 2025) | Tenant offload to S3 + cluster-level backups |
| Data Export | Limited: egress costs apply | Full export on self-hosted; egress on managed |
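To translate an uptime percentage into an operational number, the downtime budget is (1 - SLA) multiplied by the length of the measurement period. A short Python sketch, assuming a 30-day window (check the actual SLA terms for how the window and exclusions are defined):

```python
def allowed_downtime_hours(sla_percent, days=30):
    """Downtime budget for one measurement period under an uptime SLA."""
    return (1 - sla_percent / 100) * days * 24


print(round(allowed_downtime_hours(99.5), 2))  # 3.6
print(round(allowed_downtime_hours(99.5) * 60))  # 216 (minutes)
```

A 99.5% SLA therefore tolerates about 3.6 hours of downtime in a 30-day month before any SLA credit applies.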

Multi-Region Support

- Pinecone: Available on AWS, Azure, and GCP across all regions on Standard and Enterprise plans. Starter plan restricted to AWS us-east-1 only. Multi-region replication available on Enterprise.

- Weaviate: Shared Cloud regional availability expanding. Dedicated Cloud supports AWS, GCP, Azure. Self-hosted: any region, any infrastructure including on-premises and air-gapped environments.

9. Compliance Posture: Verified January 2026

| Compliance Standard | Pinecone | Weaviate |
|---|---|---|
| SOC 2 Type II | ✓ Certified | ✓ Certified (managed offering) |
| ISO 27001 | ✓ Certified | Not publicly listed |
| GDPR | ✓ Aligned (DPA available) | ✓ Supported (EU self-hosted option) |
| HIPAA | ✓ Enterprise plan (attestation letter available) | ✓ Enterprise Cloud on AWS only (2025). Azure/GCP in development. |
| Data Residency | BYOC: runs in your cloud account | Self-hosted anywhere + BYOC in your cloud account |
| Air-Gap / Offline | Not supported under any plan | Fully supported (self-hosted deployment) |
| Control Plane Access | Pinecone operates control plane on BYOC | Self-hosted: full control plane ownership by customer |

10. Decision Framework: Binary Verdicts by Deployment Profile

This is not a 'best overall' determination; the pillar covers that ground. This is a binary verdict for the six deployment profiles most likely to be evaluating Pinecone versus Weaviate specifically.

| Deployment Profile | Binary Verdict | Architectural Rationale |
|---|---|---|
| 1. Zero-Ops Enterprise Scale: High QPS, no infrastructure team | Pinecone | Serverless architecture and Dedicated Read Nodes (DRN) completely abstract scaling complexity. You pay a premium to never touch infrastructure. |
| 2. Native Hybrid Search: Semantic + exact keyword matching | Weaviate | Native BM25 inverted index combined with HNSW vector search in a single engine. Avoids Pinecone's SPLADE sparse-encoding pipeline overhead. |
| 3. Strict Data Sovereignty: Air-gapped, on-premise, HIPAA | Weaviate | Open-source foundation allows full self-hosting on bare metal or air-gapped clusters. Pinecone SaaS cannot operate outside connected cloud regions. |
| 4. B2B Multi-Tenant SaaS: Isolated customer data, strict compliance | Weaviate | Native multi-tenancy with shard-per-tenant isolation and hot/warm/cold storage tiering provides superior resource control over Pinecone's logical namespaces. |
| 5. Pure Vector Retrieval: No keywords, pure embedding similarity | Pinecone | Highly optimized proprietary vector search core delivers massive absolute throughput and lower latency at the P99 percentile for dense-only queries. |
| 6. Budget-Constrained Massive Scale: 100M+ vectors on a startup budget | Weaviate | Self-hosting the open-source version eliminates SaaS per-vector and read-unit billing, reducing costs to raw compute/storage. Binary quantization (BQ) lowers RAM needs. |

11. Scenario Simulations: Where Each Architecture Breaks

Scenario 1: Legal AI Platform: Contract Clause Retrieval

An AI platform for legal teams needs to retrieve contract clauses that match both the conceptual meaning of a query ('termination rights in case of material breach') and exact legal terminology ('Material Adverse Change clause', 'MAC provision'). The system serves 200 law firms, each with isolated document stores requiring HIPAA-grade compliance.

Weaviate is the correct architecture. Native hybrid search handles simultaneous semantic and keyword retrieval in one query. Native multi-tenancy isolates each law firm's documents without secondary partitioning. HIPAA compliance on Dedicated Cloud covers health-related legal matters. Pinecone would require a sparse encoding pipeline, additional hybrid index cost, namespace-based tenant separation (less isolated than Weaviate's native multi-tenancy), and the Enterprise plan for HIPAA, compounding both cost and complexity.

Scenario 2: AI Sales Agent: High-Volume Outbound Platform

A B2B outbound platform runs AI agents for 50 agency clients. Each agent executes 10,000 vector queries per day across prospect, email, and meeting memory. Total daily query volume: 500,000 reads. Data per client: under 1 million vectors. Infrastructure team: zero. The founder manages the entire stack.

Pinecone is the correct architecture. Serverless billing absorbs variable query load without pod provisioning decisions. The full memory stack becomes operational with an API key and under 30 minutes of n8n workflow configuration. At under 1 million vectors per client, Standard plan cost is predictable and significantly below the threshold where self-hosted Weaviate economics become compelling. Self-hosted Weaviate adds cluster management overhead that a zero-ops team cannot absorb without dedicated engineering time.

Scenario 3: Healthcare AI: Sovereign Infrastructure Requirement

A healthcare AI company building a clinical documentation assistant must keep all patient data within their AWS VPC. Their compliance team has approved no third-party managed services for PHI. Two senior engineers with Kubernetes experience are available.

Weaviate self-hosted or BYOC is the only architecturally compliant path. Pinecone BYOC runs within the customer's cloud account, but Pinecone's engineering team still operates the control plane. For organizations where the compliance requirement is that no external operator has any access pathway to the data environment, Weaviate self-hosted with RBAC and audit logging is the legally defensible choice. The control plane ownership distinction is the deciding factor: not latency, not feature parity.

12. Conclusion: Infrastructure Ownership Is the Decision

Pinecone and Weaviate are not competing on performance benchmarks at this stage of the market. Both deliver sub-100ms retrieval in production. Both support hybrid search. Both carry enterprise compliance certifications. The performance gap between them at moderate scale is architecturally irrelevant for the majority of production use cases.

The decision resolves on two axes: infrastructure ownership and retrieval architecture. If your organization's security posture, compliance requirements, or cost model at scale demands that you own the infrastructure your vectors live on, Weaviate is the only candidate in this comparison. If your organization needs production-grade vector retrieval operational before your next sprint demo, without a single DevOps decision, Pinecone is the only candidate.

Hybrid search is the secondary differentiator. If exact-term retrieval and semantic retrieval must execute in the same query without additional pipeline complexity, Weaviate's native BM25 integration is architecturally superior for that specific requirement.

"Stop evaluating features. Evaluate infrastructure ownership. That decision settles this comparison permanently."

13. Frequently Asked Questions: Pinecone vs Weaviate 2026

What is the core architectural difference between Pinecone and Weaviate?

Pinecone is a closed-source, fully managed SaaS that separates compute, read capacity, and storage into independently scaling layers operated entirely by Pinecone's engineering team. Weaviate is an open-source database built in Go that co-locates its HNSW vector index and BM25 inverted index within the same query engine, deployable on any infrastructure from local Docker to air-gapped bare metal. The defining difference is infrastructure ownership: Pinecone always involves a third-party operator. Weaviate self-hosted does not. As of January 2026, this distinction is the primary decision variable for regulated industries and compliance-gated environments.

Is Pinecone faster than Weaviate in 2026?

At very high throughput and billion-vector scale, Pinecone's Dedicated Read Nodes architecture produces verified benchmarks that Weaviate's managed offering does not match: 5,700 QPS at P99 60ms on 1.4 billion vectors, per Pinecone's official December 2025 DRN release. At moderate scale (under 100 million vectors, under 1,000 QPS), the latency difference between the two is operationally irrelevant. Both deliver sub-100ms P99. Speed should not be the primary selection criterion unless you are designing for billion-scale throughput-critical workloads with a dedicated engineering team.

Can Weaviate replace Pinecone entirely?

For organizations that require infrastructure ownership, native hybrid search without sparse encoding overhead, multi-tenancy with storage tiering, or deployment in environments prohibiting third-party managed services, Weaviate self-hosted or BYOC fully replaces Pinecone's functionality. For organizations that require zero infrastructure management, the fastest path to production retrieval, and sustained throughput at billion-vector scale on a managed service, Pinecone remains the correct architecture. The replacement question resolves on infrastructure ownership posture, not feature comparison.

What is the real cost difference at 10 million vectors?

At 10 million vectors with 1,536-dimension embeddings and moderate query volume, Pinecone Standard runs approximately $80–$200 per month depending on read unit consumption per query. Weaviate Shared Cloud at the same scale runs approximately $100–$200 per month without compression. With Weaviate's Product Quantization enabled (a standard production configuration), the dimension billing drops by approximately 4x, reducing Weaviate's cost to $25–$60 per month at the same scale. Weaviate self-hosted at 10 million vectors on a $20–$40/month VPS eliminates all per-dimension managed fees, although that budget is realistic mainly with compression enabled, since 10 million uncompressed 1,536-dimension float32 vectors occupy roughly 61 GB. The cost comparison is not purely financial; it is financial plus the engineering time cost of infrastructure management.
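The memory arithmetic behind the self-hosting estimate, as a Python sketch: raw float32 vectors take 4 bytes (32 bits) per dimension, and binary quantization keeps 1 bit per dimension. The estimate covers only vector payloads; HNSW graph links, metadata, the inverted index, and any uncompressed copies kept on disk are excluded, so real requirements are higher:

```python
def vector_memory_gb(vector_count, dims, bits_per_dim=32):
    """Raw vector payload in decimal GB (excludes graph links, metadata, and indexes)."""
    return vector_count * dims * bits_per_dim / 8 / 1e9


raw_gb = vector_memory_gb(10_000_000, 1536)                 # float32: 32 bits per dimension
bq_gb = vector_memory_gb(10_000_000, 1536, bits_per_dim=1)  # binary quantization: 1 bit per dimension
print(f"{raw_gb:.2f} GB raw, {bq_gb:.2f} GB with BQ")  # 61.44 GB raw, 1.92 GB with BQ
```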

Which database handles multi-tenancy better for SaaS applications?

Weaviate's native multi-tenancy is purpose-built for SaaS deployment patterns. Each tenant is isolated in its own shard and supports independent lifecycle management (active, inactive, or offloaded to cold storage on AWS S3), reactivating automatically on query arrival. Pinecone's namespace-based approach provides logical isolation but does not support tiered storage economics for inactive tenants. For platforms with thousands of tenants where the majority are inactive at any given time, Weaviate's tiered storage model produces materially lower infrastructure costs than Pinecone's always-hot namespace model.

Does Pinecone support self-hosted deployment?

Pinecone does not support on-premises or air-gapped self-hosted deployment. It is a cloud SaaS product. The BYOC option (GA 2024) deploys Pinecone's managed infrastructure inside your AWS, Azure, or GCP account, providing network perimeter control and private endpoint connectivity via AWS PrivateLink, GCP Private Service Connect, or Azure Private Link. Pinecone does not require SSH, VPN, or inbound network access in BYOC mode. However, Pinecone's engineering team still operates the control plane. For organizations where the compliance requirement is full control-plane ownership with no external operator access, only Weaviate self-hosted satisfies that requirement.

How does Weaviate hybrid search compare to Pinecone hybrid search technically?

Weaviate hybrid search executes BM25 keyword retrieval and HNSW dense vector retrieval simultaneously within the same process using a native inverted index, then merges results via RRF (rank-based: score = sum of 1/(k+rank)) or relativeScoreFusion (score-based, using min-max normalized scores). No additional storage cost. No sparse encoding pipeline. Pinecone hybrid search generates sparse vectors (30,000 to 100,000 dimensions) via SPLADE or BM25 encoders, stored as additional billed storage alongside dense vectors. SPLADE adds learned term expansion beyond BM25, which can improve sparse retrieval quality, but requires an encoding step at ingestion. For workloads where hybrid retrieval precision is the primary requirement and pipeline simplicity matters, Weaviate's native architecture is operationally superior.

When should I migrate from Pinecone to Weaviate?

Evaluate migration when: (1) Pinecone's monthly bill consistently exceeds $300–$500 and your team has infrastructure management capacity; (2) compliance requirements emerge that mandate data residency inside your VPC or on-premises; (3) hybrid search becomes a core retrieval requirement and the sparse vector pipeline adds unacceptable ingestion overhead; (4) multi-tenant architecture reaches thousands of tenants and the cost of keeping all tenants in hot storage becomes prohibitive. Store source-of-truth embeddings in S3 or GCS before beginning migration to eliminate egress fees during transition.
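One vendor-neutral way to keep that source-of-truth copy is a JSON Lines file of id, vector, and metadata records, which a migration script can replay into either database. The Python sketch below writes and re-reads such a file. A local temporary file stands in for the S3 or GCS object (uploading it would use your cloud provider's SDK, not shown), and the record shape is an assumption, not a format either vendor requires:

```python
import json
import tempfile
from pathlib import Path


def export_embeddings(records, path):
    """Write id/vector/metadata records as JSON Lines, one object per line."""
    with Path(path).open("w", encoding="utf-8") as fh:
        for rec in records:
            row = {"id": rec["id"], "vector": rec["vector"], "metadata": rec.get("metadata", {})}
            fh.write(json.dumps(row) + "\n")


def load_embeddings(path):
    """Read the JSON Lines file back into a list of dicts for re-import."""
    with Path(path).open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


records = [
    {"id": "doc-1", "vector": [0.12, -0.5, 0.33], "metadata": {"tenant": "firm-a"}},
    {"id": "doc-2", "vector": [0.01, 0.9, -0.2]},
]
with tempfile.TemporaryDirectory() as tmp:
    snapshot = Path(tmp) / "embeddings.jsonl"  # local stand-in for an S3/GCS object
    export_embeddings(records, snapshot)
    restored = load_embeddings(snapshot)
print(len(restored))  # 2
```

Keeping vectors in a neutral format means the migration replays stored embeddings instead of re-embedding the corpus or re-exporting from the source database.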

What n8n integration approach works for both Pinecone and Weaviate?

n8n provides native Pinecone nodes for upsert, query, and delete operations that can be deployed without custom code. Weaviate integration in n8n uses HTTP Request nodes pointing to the Weaviate REST API or GraphQL endpoint. For self-hosted Weaviate deployments, the n8n workflow calls the Weaviate instance's local network address, keeping all vector data within your infrastructure perimeter with no external vector-database API dependency. For both databases, the embedding step (for example, OpenAI text-embedding-3-small at $0.02 per million tokens via the n8n OpenAI node) precedes the vector database upsert node in the workflow sequence.
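Sizing the embedding step needs only back-of-the-envelope arithmetic. This sketch assumes a hypothetical corpus of 1 million chunks averaging 500 tokens, the $0.02 per million token price quoted above, and float32 storage (4 bytes per dimension) for the 1,536-dimension output; the storage figure covers raw vectors only, not index structures, metadata, or replicas.

```python
PRICE_PER_MILLION_TOKENS_USD = 0.02  # text-embedding-3-small price quoted above
EMBEDDING_DIMS = 1536                # text-embedding-3-small default output width
BYTES_PER_FLOAT32 = 4                # assumption: vectors stored as float32


def embedding_cost_usd(
    num_chunks: int,
    avg_tokens_per_chunk: int,
    price_per_million: float = PRICE_PER_MILLION_TOKENS_USD,
) -> float:
    """One-time cost to embed a corpus before the vector-database upsert step."""
    total_tokens = num_chunks * avg_tokens_per_chunk
    return total_tokens / 1_000_000 * price_per_million


def raw_vector_bytes(num_vectors: int, dims: int = EMBEDDING_DIMS) -> int:
    """Raw vector payload only; excludes index overhead, metadata, and replicas."""
    return num_vectors * dims * BYTES_PER_FLOAT32


if __name__ == "__main__":
    # Hypothetical corpus: 1M chunks averaging 500 tokens each.
    print(f"embedding cost: ${embedding_cost_usd(1_000_000, 500):.2f}")
    print(f"raw vectors: {raw_vector_bytes(1_000_000) / 1e9:.2f} GB")
```

For that hypothetical corpus, embedding costs about $10 once, while the raw vectors alone occupy roughly 6.1 GB. The recurring storage bill, not the embedding call, is what scales with corpus size.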

From the Architect's Desk

The system worked. Until their enterprise sales team landed a hospital group. PHI in the contracts. Pinecone's HIPAA attestation covers the Enterprise plan. They were on Standard. Their compliance team wanted the data inside their own VPC, not in any third-party managed service.

The re-architecture to Weaviate BYOC in their AWS account took three months and one senior engineer at full allocation.

Disclosure: Some links below are affiliate links. If you purchase through them, RankSquire may earn a small commission — at no additional cost to you. This helps us continue producing independent, benchmark-driven infrastructure guides. We only recommend tools we have evaluated and deployed in production.

You have read the complete architecture decision. Now choose your infrastructure.

Every tool below was evaluated against real benchmarks, verified pricing, and production deployment requirements.

At RankSquire, we empower Real Estate, B2B Agencies, and Financial Firms to deploy execution-ready AI blueprints. Whether building an Autonomous ISA requiring Pinecone’s zero-ops scale, or Financial Ops requiring Weaviate’s sovereign control, the infrastructure determines the system's longevity. We bridge the gap between theoretical AI and real-world operations by engineering a digital workforce that coordinates decisions without constant oversight.

The Infrastructure Decision Kit

Every tool evaluated in this comparison — with verified pricing, deployment context, and the exact scenario each one wins.

Pinecone — Managed SaaS

Best: Zero-Ops Production

Fully managed, closed-source, serverless vector database. Sub-50ms latency at enterprise scale. Independent scaling of reads, writes, and storage. API key to production in under 30 minutes.

Best for: Agencies, startups, and outbound AI platforms that need production retrieval without managing a single server.

View Pinecone →

Weaviate — Open Core

Best: Hybrid Search + Sovereign Infra

Open-source (BSD-3). Native BM25 + dense vector hybrid search in a single API call. Self-hosted on Docker, Kubernetes, or any VPS. HIPAA-compliant on AWS (Enterprise Cloud, 2026).

Best for: Legal AI, compliance platforms, B2B SaaS with multi-tenant isolation, and any team that must own its infrastructure.

View Weaviate →

OpenAI Embeddings — text-embedding-3-small

Works with both databases

Your vector database is only as good as your embeddings. text-embedding-3-small outputs 1,536-dimension vectors at $0.02 per million tokens.

Best for: Any agent using OpenAI as the LLM layer. The default embedding pairing for both databases in this comparison.

View Embeddings Docs →

n8n — Agent Memory Connector

Works with both databases

Workflow automation platform with native Pinecone nodes and HTTP-based Weaviate integration. Self-hostable on any VPS. Connects your AI agent memory pipeline without writing backend code.

Best for: Agencies and AI builders connecting databases to business workflows without a backend engineering team.

View n8n →

Join the Conversation

Your organization has compliance requirements, a query volume profile, and a team with specific operational bandwidth. Those three variables settle this decision more conclusively than any benchmark table.
