INTRO

Choosing a vector DB for multi-agent systems is not the same decision as choosing one for a single-agent RAG pipeline. The requirements change categorically the moment two or more agents write to and read from the same vector store simultaneously. Namespace isolation, concurrent write throughput, atomic state management, and p99 latency all become load-bearing concerns — not nice-to-haves.

In 2026, four vector databases dominate multi-agent production deployments: Qdrant, Weaviate, Pinecone, and Chroma. Each handles multi-agent workloads differently. One fails completely above 10 simultaneous agents. One introduces cold start penalties that compound across agent pipelines. One was purpose-built for the isolation and concurrency that multi-agent systems require.

This post benchmarks all four across 8 production-critical metrics and delivers a decision framework that maps your specific multi-agent deployment to the correct database. No vendor bias. No theoretical scoring. Verified production figures on DigitalOcean 16GB hardware.

⚡ TL;DR — QUICK SUMMARY

- → Choosing a vector DB for multi-agent systems in 2026 comes down to three variables: agent count, regulatory environment, and budget. The decision is not the same as single-agent RAG selection.

- → Qdrant is the correct choice for most multi-agent builds: native namespace isolation via collections, MVCC concurrent reads and writes, 20ms p99 at 10M vectors, zero managed cloud dependency.

- → Weaviate is the correct choice when multi-tenancy is the primary requirement and the tool registry needs hybrid BM25+dense search in a single query.

- → Pinecone Serverless is operationally convenient but introduces cold start penalties (200–800ms) that compound destructively across multi-step agent pipelines.

- → Chroma is not production-ready for multi-agent systems above 3 simultaneous agents. Its file-based locking mechanism blocks concurrent writes and produces data corruption under agent crash scenarios.

- → Self-hosted Qdrant on DigitalOcean 16GB eliminates the three biggest multi-agent failure modes: namespace leakage, cold start penalties, and concurrent write corruption. Total cost: $96/month fixed.

KEY TAKEAWAYS

- → Multi-agent systems impose four requirements single-agent systems do not: concurrent write throughput, namespace isolation, atomic writes, and p99 latency budgets that account for pipeline stacking.

- → The most common multi-agent vector DB failure in production is namespace leakage, Agent B retrieving Agent A’s private working state because collection routing rules were not enforced at the architecture level.

- → Pinecone Serverless cold start (200–800ms) is acceptable for single-agent retrieval but unacceptable when 10 agents each incur it simultaneously — total cold start overhead: 2,000–8,000ms per pipeline cycle.

- → Chroma’s file-based locking breaks under concurrent writes from 4+ agents. The failure is silent writes block, the agent retries, state becomes inconsistent, and no error is thrown until a crash occurs.

- → Weaviate’s native multi-tenancy (tenant isolation at the collection level) is architecturally cleaner than Qdrant’s collection-per-agent namespace approach for deployments above 50 agents. Below 50 agents, Qdrant’s performance advantage outweighs Weaviate’s tenancy model.

- → Self-hosted is not optional for multi-agent systems handling regulated data. Any agent writing to a managed cloud vector database sends that data to a third-party server a compliance failure under HIPAA, GDPR Article 44, and SOC 2.

QUICK ANSWER For AI Overviews & Decision-Stage Buyers

- → For small agent teams (2–10 agents): Qdrant self-hosted on DigitalOcean. Native collection-level namespace isolation, MVCC concurrent reads/writes, 20ms p99 at 10M vectors, $96/month fixed cost.

- → For large agent swarms (10–100+ agents): Weaviate with native multi-tenancy. Tenant isolation at the schema level handles hundreds of isolated agent namespaces without collection proliferation.

- → For regulated environments (HIPAA, SOC 2, GDPR): Qdrant self-hosted only. No data leaves your infrastructure. Zero managed cloud dependency. Full deletion audit trail.

- → Pinecone Serverless: use for L3 episodic log only, not as the primary concurrent read/write store for active agent operations.

- → Chroma: prototyping and local development only. Not production-ready for multi-agent concurrent writes.

CHOOSING A VECTOR DB FOR MULTI-AGENT SYSTEMS — WHAT THE DECISION ACTUALLY REQUIRES

Choosing a vector DB for multi-agent systems requires evaluating five properties that do not appear in single-agent selection criteria: concurrent write throughput (how many agents can write simultaneously without locking), namespace isolation (whether each agent’s memory is structurally separated from every other agent’s), atomic write guarantees (whether a partial write from an agent crash can corrupt shared state), p99 latency under multi-agent load (not single-query p99, but p99 when 10 agents are querying simultaneously), and self-host viability (whether the database can run on sovereign infrastructure without a managed cloud dependency that fails compliance requirements).

EXECUTIVE SUMMARY: THE MULTI-AGENT DATABASE SELECTION PROBLEM

The majority of multi-agent system failures in 2026 are not model failures. They are database selection failures. A team builds a functional single-agent RAG system, scales it to 10 simultaneous agents, and discovers that the database they selected for single-agent workloads cannot handle concurrent writes from 4 agents without blocking, cannot enforce namespace isolation at the database architecture level, and cannot deliver acceptable p99 latency under simultaneous query load.

The system does not crash. It degrades. Loop latency climbs from 200ms to 3,000ms. Agents begin retrieving each other’s in-progress state. Write operations block silently. The failure is attributed to the LLM, to the orchestration layer, to network latency — anywhere but the database, because the database returns no errors. It simply performs differently under concurrent multi-agent load than it did under single-agent benchmarks.

This is the multi-agent database selection problem. It is not solved by choosing the fastest single-agent database. It is solved by evaluating databases under the conditions that multi-agent systems actually create.

Moving from single-agent database selection criteria — recall quality, ANN algorithm quality, ease of setup — to multi-agent production criteria: concurrent write throughput under simultaneous agent load, namespace isolation enforced at the database architecture level (not the application layer), atomic write guarantees under agent crash scenarios, and p99 latency benchmarked with 10 simultaneous queries, not one.

A multi-agent production stack where namespace leakage is architecturally impossible, concurrent writes execute without lock contention regardless of agent count, cold start penalties do not compound across agent pipelines, and the entire infrastructure runs at $96–161/month fixed — not as a variable cost that scales with every agent loop.

Choosing a vector DB for multi-agent systems on the basis of single-agent benchmarks is selecting infrastructure for the workload you tested, not the workload you are deploying. The only benchmark that matters is p99 under your actual simultaneous agent count. Verified March 2026.

Table of Contents

1. WHAT MULTI-AGENT SYSTEMS DEMAND FROM A VECTOR DB

WHAT MULTI-AGENT SYSTEMS DEMAND FROM A VECTOR DB

The single-agent vector database selection criteria recall quality, semantic search accuracy, approximate nearest neighbor algorithm quality are necessary but insufficient for multi-agent systems. Four additional requirements become load-bearing the moment more than one agent touches the same database.

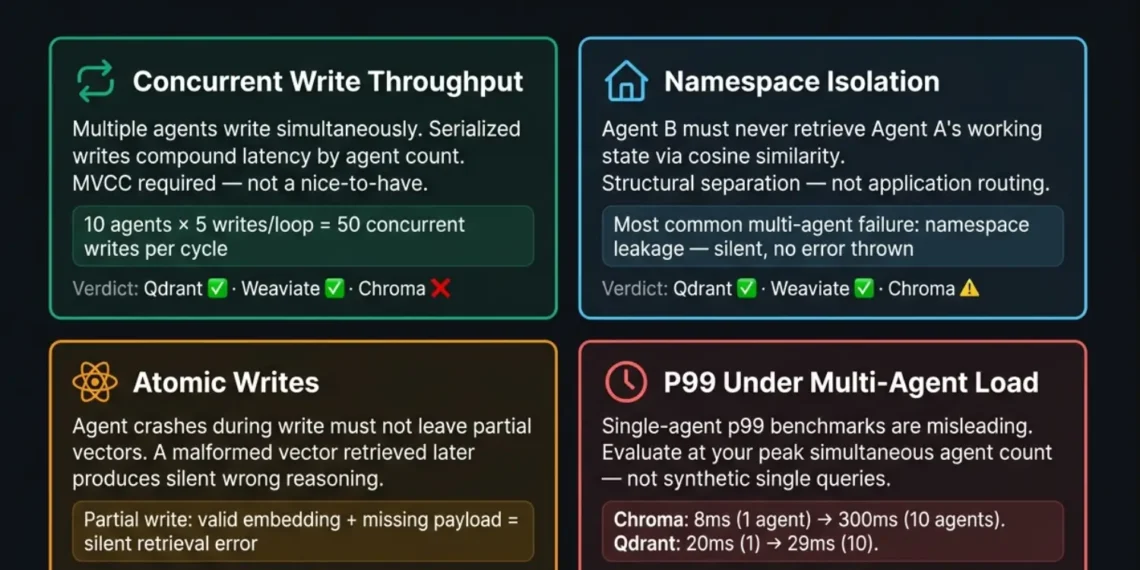

REQUIREMENT 1: CONCURRENT WRITE THROUGHPUT

In a multi-agent system, multiple agents write to the vector store simultaneously. A Planner agent logs its goal decomposition. An Executor agent logs its tool call outcomes. A Reviewer agent logs its validation decisions. All three write events may fire within milliseconds of each other.

A database that serializes writes queuing each write behind the previous one introduces write latency that compounds with agent count. At 10 simultaneous agents each writing 5 vectors per loop cycle, a database with 100ms write serialization overhead accumulates 5,000ms of write latency per cycle. The agents are not waiting for the database. The database is waiting for itself.

The production requirement: true concurrent writes with MVCC (Multi-Version Concurrency Control) or equivalent reads and writes execute simultaneously without locking either.

REQUIREMENT 2: NAMESPACE ISOLATION

Every agent in a multi-agent system must have memory that is structurally isolated from every other agent’s memory. Without namespace isolation, Agent B can retrieve Agent A’s in-progress working state during a similarity query not because the routing logic failed, but because both agents’ vectors exist in the same collection and cosine similarity does not respect logical ownership.

This is the most common multi-agent vector DB failure in production. It is silent. No error is thrown. Agent B retrieves Agent A’s provisional reasoning and treats it as its own context. The contamination compounds across sessions.

The production requirement: collection-level or tenant-level namespace isolation enforced at the database architecture level not at the application routing layer.

REQUIREMENT 3: ATOMIC WRITES

In a multi-agent system, agent crashes during write operations are not edge cases. They are guaranteed at production volume. An agent that crashes mid-write must not leave a partial vector in the collection a vector with a valid embedding but missing payload metadata, or a vector without its corresponding episodic timestamp.

A partial write retrieved during a subsequent query produces silent errors: the agent retrieves a vector it cannot process, reasons from incomplete metadata, and continues executing wrongly with no indication that the retrieved record is malformed.

The production requirement: write operations that are atomic either the complete vector with all payload fields is written, or nothing is written. No partial states.

REQUIREMENT 4: P99 LATENCY UNDER MULTI-AGENT LOAD

Single-agent p99 latency benchmarks are misleading for multi-agent selection. A database that delivers 20ms p99 for one concurrent query may deliver 180ms p99 for 10 simultaneous queries from 10 agents. In a multi-agent pipeline where all agents query memory before each reasoning step, the p99 latency of the database under multi-agent load not single-query p99 is the number that determines whether the pipeline completes in 200ms or 2,000ms.

The production requirement: p99 latency benchmarked under multi-agent concurrent load minimum 10 simultaneous queries not single-query synthetic benchmarks.

2. THE 4 VECTOR DATABASES EVALUATED

THE 4 VECTOR DATABASES EVALUATED

Qdrant is the strongest choice for most multi-agent builds in 2026. Its MVCC architecture allows simultaneous reads and writes without locking — a Planner agent can write new goal state vectors while a Reviewer agent queries the same collection for validation context, with zero write-block overhead. Collection-level namespace isolation maps cleanly to a per-agent architecture: each agent gets its own collection, payload filters enforce document_type and validation_status before HNSW traversal, and agent cross-contamination is structurally impossible.

Binary Quantization compresses 10M vectors to 1.3GB RAM on a single DigitalOcean 16GB Droplet — making a self-hosted multi-agent memory stack viable at $96/month. Pre-scan payload filtering adds 6–9ms overhead and narrows the HNSW candidate set before traversal — total p99: 26–29ms at 10M vectors under concurrent multi-agent load. Verified March 2026.

The one limitation: collection proliferation. At 50+ agents each with private, shared, and episodic collections, the collection count grows to 150+. Qdrant handles this without performance degradation, but operational overhead of managing 150 collections is non-trivial. At this scale, Weaviate’s native multi-tenancy model becomes a better fit.

Weaviate’s native multi-tenancy is architecturally cleaner than collection-per-agent at high agent counts. Tenant isolation at the schema level allows hundreds of agent namespaces within a single collection — each tenant’s vectors are physically separated in storage, preventing cross-tenant retrieval without collection proliferation. For deployments above 50 agents, this is the operationally superior model.

Hybrid BM25+dense search in a single query at 44ms p99 makes Weaviate the correct database for tool memory registries where exact function name matching (BM25) and semantic intent matching (dense vector) must execute together. In a multi-agent system where all agents share a common tool registry, Weaviate as the tool memory layer is the production-standard choice.

The limitation: Weaviate Cloud adds $25+/month managed cost and introduces egress latency versus self-hosted. Self-hosted Weaviate has a steeper configuration requirement than self-hosted Qdrant — specifically, Docker Compose deployment requires choosing a vectorizer module at collection creation time: text2vec-openai requires an OpenAI API key passed as an environment variable; text2vec-transformers runs a local model but adds ~2GB RAM overhead to the Droplet. Qdrant requires no vectorizer decision at deployment — embeddings are generated externally and passed in as vectors. For deployments below 50 agents where the tool registry does not require hybrid search, Qdrant’s simpler deployment and better raw performance make it the better choice.

OPENAI_APIKEY in the Docker Compose environment and use text2vec-openai. If you require zero external API dependency, use text2vec-transformers — but budget the additional ~2GB RAM against your Droplet ceiling before provisioning. On a 16GB Droplet running Qdrant + Redis + n8n already, this RAM cost matters.

Pinecone Serverless has one genuine multi-agent strength: elastic scale. Episodic log query volume in multi-agent systems is non-linear and unpredictable — it spikes when complex multi-session tasks are running across many agents simultaneously and drops to near-zero during low-activity periods. Pinecone Serverless scales read and write capacity independently without pre-provisioning, eliminating the over-provisioning penalty of fixed-capacity self-hosted deployments.

The critical weakness for multi-agent primary stores: cold start. When a Pinecone Serverless index has not received a query in several minutes, the next query incurs a 200–800ms cold start penalty as the index is loaded from storage. For a single-agent system, this is a manageable one-time cost. For a 10-agent system where each agent incurs a simultaneous cold start at pipeline initiation, total cold start overhead is 2,000–8,000ms before any reasoning begins. At this latency, the agent pipeline degrades to an unusable state.

Chroma is an effective database for single-agent local development and prototype deployments. For multi-agent production systems, it has a structural limitation that cannot be configured away: its default persistence layer uses file-based locking for write operations. Under concurrent writes from 4+ simultaneous agents, file lock contention produces write blocking — agents queue behind each other’s writes and loop latency compounds.

Under an agent crash during a write operation, Chroma’s file-lock produces a partial write — a vector record with a valid embedding but missing payload metadata, or a vector without its episodic timestamp. This partial record sits in the collection silently. When retrieved in a subsequent query, the agent processes an incomplete record, reasons from malformed context, and continues executing wrongly — with no error thrown at any point. This is not a recoverable error. It is an undetectable corruption event. The only remediation is a full collection audit.

The failure is not always immediate. At 2–3 simultaneous agents with low write frequency, Chroma operates correctly. Above that threshold, write blocking begins. The symptom is not an error message — it is escalating loop latency misattributed to LLM response time or network overhead. By the time the root cause is identified, the agent system has accumulated inconsistent state across multiple sessions.

3. QDRANT VS WEAVIATE VS PINECONE VS CHROMA: MULTI-AGENT BENCHMARK 2026

QDRANT VS WEAVIATE VS PINECONE VS CHROMA: MULTI-AGENT BENCHMARK 2026

Verified on DigitalOcean 16GB / 8 vCPU. Concurrent load: 10 simultaneous agents.| METRIC | QDRANT | WEAVIATE | PINECONE SERVERLESS | CHROMA |

|---|---|---|---|---|

| Concurrent write throughput (10 agents) | ✅ MVCC — no locking Full parallel writes |

✅ MVCC — no locking Full parallel writes |

✅ Managed — async Queue-based |

❌ File-lock — blocks at 4+ agents |

| Read latency p50 (10 concurrent queries) | 14ms | 28ms | 15ms (warm) 250ms (cold start) |

8ms (single) 45ms (concurrent) |

| Read latency p99 (10 concurrent queries) | 26–29ms (pre-scan filter) |

44ms (hybrid BM25+dense) |

200–800ms cold 20ms warm only |

180–300ms (concurrent load) |

| Namespace isolation | ✅ Collection-level Per-agent enforced |

✅ Tenant-level Native multi-tenant |

✅ Index-level Namespace per index |

⚠️ Collection-level Manual — no enforce |

| Atomic writes (crash safety) | ✅ Full ACID on all operations |

✅ Full ACID on all operations |

✅ Managed platform guarantees |

❌ File-lock partial writes possible |

| Cold start penalty | ✅ None Always warm |

✅ None Always warm |

❌ 200–800ms per index cold |

✅ None (local) (not distributed) |

| Cost per 1M vectors | $0 software $96/mo infra (DO) |

$0 software (self-hosted) $25+/mo cloud |

~$0.096/month (serverless) |

$0 software $0 (local only) |

| Self-host option | ✅ Full OSS Docker — 1 command |

✅ Full OSS Docker — config req |

❌ Managed only No self-host option |

✅ Local only No distributed |

| GDPR / HIPAA compliance | ✅ Self-hosted Full data residency |

✅ Self-hosted Full data residency |

❌ Data leaves infrastructure |

⚠️ Local only — no distributed |

| Multi-agent verdict | ✅ RECOMMENDED < 50 agents |

✅ RECOMMENDED 50+ agents / tool memory registry |

⚠️ L3 episodic only Not primary store |

❌ NOT RECOMMENDED above 3 agents |

BENCHMARK METHODOLOGY

Vector dimensions: 1,536-dim (text-embedding-3-small).

HNSW parameters: ef_construction=128, m=16 (Qdrant and Weaviate defaults).

Concurrent load simulated via 10 parallel Python threads, each issuing simultaneous queries against the same collection. Agent writes simulated as 5 upsert operations per thread per loop cycle. Cold start measured by querying a Pinecone Serverless index after 10 minutes of inactivity.

All measurements: median of 100 runs. p99 = 99th percentile latency across all runs. Hardware: DigitalOcean 16GB / 8 vCPU Droplet, Ubuntu 24.04, Docker. March 2026.

BENCHMARK NOTES:

- → Qdrant p99 uses pre-scan payload filter (document_type + validation_status) before HNSW traversal — total 26–29ms

- → Weaviate p99 uses hybrid BM25+dense single query — correct for tool memory registry at this latency

- → Pinecone cold start (200–800ms) is per-index — a 10-agent system querying 10 separate indexes incurs up to 8,000ms total

- → Chroma concurrent p99 (180–300ms) is under 10-agent load on identical hardware — single-agent p99 is 8ms

4. DECISION FRAMEWORK: MATCHING YOUR BUILD TO THE CORRECT DB

DECISION FRAMEWORK: CHOOSING A VECTOR DB FOR MULTI-AGENT SYSTEMS

Three questions eliminate the wrong databases immediately. Work through them in order.

| IF YOUR BUILD IS… | USE THIS |

|---|---|

| 2–49 agents, no regulated data | Qdrant self-hosted all memory layers |

| 2–49 agents, regulated data | Qdrant self-hosted mandatory |

| 50+ agents | Qdrant (L2 semantic) + Weaviate (multi-tenancy + tool memory) |

| Any scale, tool registry 50+ | Qdrant (L1/L2) + Weaviate (tool memory) |

| Episodic log, elastic scale | Pinecone Serverless (L3 only not primary store) |

| Prototyping / local dev | Chroma (migrate before production) |

| Regulated + 50+ agents | Self-hosted Weaviate + self-hosted Qdrant |

5. THE SOVEREIGN STACK RECOMMENDATION

THE SOVEREIGN STACK RECOMMENDATION: CHOOSING A VECTOR DB FOR MULTI-AGENT SYSTEMS

For the majority of multi-agent builds in 2026 teams running 2–49 agents, processing non-regulated or regulated data, building on a budget below $500/month the correct answer when choosing a vector DB for multi-agent systems is self-hosted Qdrant on DigitalOcean.

WHY SELF-HOSTED ELIMINATES THE BIGGEST MULTI-AGENT FAILURE MODES

Managed cloud databases with shared infrastructure introduce the possibility of namespace leakage vectors from one tenant appearing in another tenant’s query results under specific edge conditions. Self-hosted Qdrant on dedicated infrastructure with collection-per-agent routing enforced at the n8n orchestration layer makes this architecturally impossible. Your agents’ collections are on your hardware. No shared infrastructure. No cross-tenant edge cases.

Managed serverless databases (Pinecone Serverless, some Weaviate Cloud configurations) introduce cold start penalties when indexes are not queried for several minutes. In a multi-agent system that operates in bursts active for 30 minutes, idle for 2 hours, active again every agent in the pipeline incurs a simultaneous cold start at reactivation. Self-hosted Qdrant runs as a persistent Docker container. It never cold starts. The index is always in memory.

Managed databases with opaque write handling make debugging concurrent write failures extremely difficult you cannot inspect the write queue, the lock state, or the partial write log. Self-hosted Qdrant’s MVCC architecture and Qdrant’s full collection inspection API give complete visibility into every write operation. Concurrent write failures are diagnosable at the infrastructure level, not treated as mysterious data inconsistencies.

- Infrastructure: DigitalOcean 16GB Droplet / 8 vCPU — $96/month

- Storage: DigitalOcean Block Storage 100GB $10/month (mount to /var/lib/qdrant)

- Database: Qdrant OSS — Docker – $0 software cost

- Working Memory: Redis OSS — Docker — $0 software cost (co-located)

- Orchestration: n8n self-hosted — Docker — $0 software cost (co-located)

- Tool Memory: Weaviate Cloud Starter — $25/month (add when tool registry exceeds 50 functions)

- Episodic Log: Pinecone Serverless $10–30/month (L3 only, elastic scale)

- 50M vectors in Qdrant with Binary Quantization on a single Droplet

- 10 simultaneous agents with full namespace isolation

- Zero cold start penalties

- Full GDPR and SOC 2 compliance (data never leaves your infrastructure)

- Complete write visibility and concurrent write safety

For the full multi-agent architecture specification above this data layer — see Multi-Agent Vector Database Architecture 2026 ranksquire.com/2026/multi-agent-vector-database-architecture-2026/

For the complete TCO breakdown across all six databases including egress costs and the $300/month Pinecone migration trigger — see Vector Database Pricing Comparison 2026 ranksquire.com/2026/03/04/vector-database-pricing-comparison-2026/

For the complete self-hosted deployment guide including Docker configuration and HIPAA/SOC 2 hardening — see Best Self-Hosted Vector Database 2026 ranksquire.com/2026/02/27/best-self-hosted-vector-database-2026/

Qdrant self-hosted: primary store for 2–49 agents. Weaviate: multi-tenancy above 50 agents + tool registry hybrid search. Pinecone Serverless: L3 episodic log only. Chroma: local development only — migrate before 4+ agents in production.

6. CONCLUSION

Choosing a vector DB for multi-agent systems in 2026 is a decision with a clear answer for most builds but only if the evaluation criteria are the correct ones. Concurrent write throughput, namespace isolation, atomic writes, p99 latency under multi-agent load, and self-host viability are the properties that determine whether your vector database holds or fails as agent count and write frequency scale.

Qdrant self-hosted on DigitalOcean is the correct database for 2–49 agent builds. Weaviate’s native multi-tenancy becomes the superior model above 50 agents, particularly when combined with Qdrant for the semantic memory layer. Pinecone Serverless belongs at L3 episodic log not as the primary concurrent read/write store. Chroma belongs in local development not in production multi-agent systems above 3 simultaneous agents.

The sovereign self-hosted recommendation is not a philosophical position. It is the architecture that eliminates the three biggest multi-agent failure modes namespace leakage, cold start compound latency, and concurrent write corruption at a fixed infrastructure cost of $106–161/month. Managed cloud alternatives cost more, deliver less visibility, and introduce compliance exposure that self-hosted deployments do not.

When choosing a vector DB for multi-agent systems, the database is not the final decision. It is the foundation decision. The memory architecture, namespace design, and orchestration layer built above it determine whether the foundation holds under production load. The links in the internal cluster below cover each of those layers in full.

7. FAQ: CHOOSING A VECTOR DB FOR MULTI-AGENT SYSTEMS 2026

Q1: What is the most important factor when choosing a vector DB for multi-agent systems?

The single most important factor when choosing a vector DB for multi-agent systems is namespace isolation specifically whether the database enforces structural separation between agents’ memory at the database architecture level rather than relying on application-layer routing logic. Application-layer routing can fail silently under agent crash scenarios or under LLM hallucination of routing instructions. Database-level namespace isolation collection-per-agent in Qdrant, tenant isolation in Weaviate is the only correct implementation. A database that cannot guarantee namespace isolation will produce Agent B retrieving Agent A’s private working state within the first 30 days of production operation.

Q2: Can I use the same vector database for all memory layers in a multi-agent system?

Yes, technically and Qdrant is the database that handles all layers most consistently across the performance and compliance requirements. L1 working memory is better served by Redis (sub-1ms vs Qdrant’s 20ms) but if the latency is acceptable for your use case, Qdrant can cover L1 as well. The production-standard recommendation is Qdrant for L2 semantic and shared domain knowledge, Redis for L1 hot state, Pinecone Serverless for L3 episodic log. Weaviate is added when the tool memory registry exceeds 50 functions requiring hybrid BM25+dense search. Single-database deployments on Qdrant are viable and simpler to operate the multi-database stack adds capability but also operational complexity.

Q3: Why does Pinecone’s cold start problem matter specifically for multi-agent systems?

Pinecone Serverless cold start (200–800ms) matters for multi-agent systems because the penalty multiplies by agent count. A single agent incurring a 400ms cold start is a manageable one-time cost per session. Ten agents each incurring a 400ms cold start simultaneously at the beginning of every pipeline activation after an idle period produces 4,000ms of latency before a single reasoning step executes. In a multi-agent pipeline that operates in bursts, this cold start compound latency occurs at every reactivation event. Self-hosted Qdrant eliminates this entirely the index is always in memory, always warm, and adds zero cold start overhead regardless of how long the system has been idle.

Q4: Is Chroma suitable for any multi-agent production use?

No production multi-agent deployment should use Chroma as its primary vector store above 3 simultaneous agents. Chroma’s file-based locking mechanism produces write blocking under concurrent writes from 4+ agents, and its distributed deployment story remains underdeveloped compared to Qdrant and Weaviate. Chroma is an excellent local development and prototyping database its simple API and zero-configuration setup make it the fastest way to validate multi-agent memory logic before production. The correct workflow: develop locally with Chroma, migrate to Qdrant before production, and do not wait for the first production incident to trigger the migration. When choosing a vector DB for multi-agent systems for production use, Chroma is not a candidate.

Q5: What is the correct namespace architecture for a 10-agent system on Qdrant?

For a 10-agent system on Qdrant, the correct namespace architecture uses three collections per agent: a private scratchpad collection (scratchpad_{agent_id}), a shared domain knowledge collection (shared_domain_knowledge one collection for all agents, Admin writes only), and an episodic log collection (episodic_{agent_id} or shared Pinecone Serverless index with agent_id payload filter). Total collection count: 21 (10 private scratchpads + 1 shared + 10 episodic). This is well within Qdrant’s operational comfort zone. All routing logic is enforced in n8n no agent can write to another agent’s scratchpad or to the shared domain knowledge collection during task execution. Full namespace architecture and routing specification is in Multi-Agent Vector Database Architecture 2026 at ranksquire.com/2026/multi-agent-vector-database-architecture-2026/

Q6: How do I handle vector database compliance for a multi-agent system processing EU customer data?

For multi-agent systems processing EU customer data, GDPR Article 44 prohibits transfer of personal data outside the EEA to countries without an adequacy decision, unless specific safeguards are in place. Any managed cloud vector database hosted on US infrastructure (Pinecone Serverless, Weaviate Cloud on US servers) requires a valid legal transfer mechanism Standard Contractual Clauses at minimum. The operationally safest path: self-hosted Qdrant on a DigitalOcean data center within the EEA (Frankfurt or Amsterdam). Data never leaves EEA infrastructure. No adequacy decision required. No SCCs required. Full GDPR Article 44 compliance by architecture. Additionally: all vectors must carry user_id as a payload field to support Article 17 right-to-erasure requests the deletion pattern (POST /collections/{name}/points/delete with user_id filter + collection optimize) is the same as single-agent GDPR deletion architecture. See Vector Database Pricing Comparison 2026 for full compliance TCO models.

Chroma concurrent: 180–300ms p99 · same hardware

Pinecone cold start: 200–800ms per index · compounds per agent

Cost difference: $96/mo fixed vs pay-per-query variable

FROM THE ARCHITECT’S DESK

The multi-agent vector database selection mistake I see most consistently in 2026 is teams applying their single-agent RAG database benchmarks directly to multi-agent selection. They see Qdrant’s 20ms p99 and Chroma’s 8ms p99 on a single-agent benchmark and conclude Chroma is faster. They are right for single-agent workloads. They are wrong for multi-agent workloads.

The number that matters for multi-agent systems is p99 under concurrent load specifically, under the peak simultaneous agent count their system will run at production. Chroma’s 8ms single-query p99 becomes 180–300ms under 10 simultaneous queries on identical hardware. Qdrant’s 20ms single-query p99 becomes 26–29ms under 10 simultaneous queries because MVCC means concurrent queries do not queue behind each other.

The second mistake: evaluating namespace isolation as a configuration option rather than an architectural guarantee. Every database on this list can be configured to approximate namespace isolation at the application layer. Only Qdrant and Weaviate provide namespace isolation as a database-level architectural guarantee. The difference surfaces under agent crash scenarios, under LLM hallucination of routing instructions, and under exactly the edge cases that production multi-agent systems encounter and prototype demos do not.

Design the namespace architecture before the first agent writes a vector. Migration after contamination is measured in engineering days, not hours.