The Noise-to-Signal Crisis: Choosing the Best Vector Database for RAG (2026)

💼 Executive Summary

The Problem: Generic vector search is insufficient for enterprise RAG. Without precise metadata filtering and hybrid ranking, your LLM receives semantically similar but factually irrelevant noise, a failure mode called Context Poisoning.

The Shift: Moving from pure vector similarity to Metadata-Hardened Hybrid Retrieval.

The Outcome: High-fidelity retrieval that respects tenant boundaries, legal compliance, and temporal relevance without spiking query latency.

The Definition: The best vector database for RAG performs high-concurrency hybrid search, combining dense and sparse vectors, and enforces strict, low-latency metadata filtering at the database layer, not after retrieval.

The Honest Counter-Argument: Some engineers argue a well-tuned reranker makes the vector database choice irrelevant. This is incorrect at enterprise scale. A Cross-Encoder reranker improves precision on results the database returns. If the database retrieves the wrong tenant’s documents or outdated policy chunks before the reranker sees them, the reranker is correcting a retrieval failure that should never have occurred. The database is the first line of precision. The reranker is the second. Neither replaces the other.


RAG Requirements: The Architect’s Checklist

In 2026, the best vector database for RAG must satisfy four non-negotiable requirements before any other evaluation criterion applies.

Metadata Filtering Depth: Handling high-cardinality metadata (millions of unique user_id, document_date, or tenant_id values) without performance degradation. Databases that handle 10,000 unique filter values well often degrade sharply at 1,000,000. Test at production cardinality, not demo scale.

Chunking Strategy Compatibility: Support for Parent-Document Retrieval, which stores small chunks for search precision while retrieving larger parent contexts for the LLM. A database that forces you to choose between search precision and context completeness is not production-grade.

Hybrid Search Dominance: Native BM25 sparse keyword search combined with HNSW dense vector search. Pure vector search fails on acronyms, regulatory clause references, and specific named entities. A Financial Firm querying Section 12(b) disclosure requirements needs keyword precision. A Real Estate operation querying “comparable sales Q3 2025 above $2M” needs both.

Multi-Tenant Isolation: Logical or physical data separation ensuring Tenant A’s agent never traverses Tenant B’s embedding space. This is a compliance requirement for B2B SaaS, a legal requirement for Financial Firms, and a trust requirement for any Real Estate platform serving multiple brokerages on shared infrastructure.

The Filtering Wall: Why Most RAG Systems Fail at Scale

The Filtering Wall is the point at which a RAG system that performed well in development begins producing incorrect or legally problematic results in production.

It occurs when metadata filters are applied after retrieval. Post-filtering retrieves the top-k most semantically similar vectors from the entire index, then discards non-matching results. Two failures follow. First, retrieval slots are wasted on documents that will be discarded. Second, when filters are strict (for example, one tenant out of fifty), post-filtering consistently returns fewer than k valid results, starving the LLM of context.

Pre-filtering constrains the search space before similarity computation begins. The database searches only within metadata-matching partitions. Latency drops. Result quality rises. The LLM receives a full, valid context set whenever at least k matching chunks exist.
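To make the shortfall concrete, here is a minimal brute-force sketch comparing the two strategies on a hypothetical corpus of 50 tenants with 40 random 8-dimensional "embeddings" each. Exact cosine ranking stands in for HNSW, and the field names (`tenant_id`, `vector`) and helper functions are illustrative, not any database's API. If a tenant holds about 2 percent of the index and its chunks are no more similar to the query than anyone else's, a global top-10 contains on average only about 0.2 of that tenant's chunks, which is exactly what the post-filter path runs into.

```python
import math
import random


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def post_filter_search(index: list[dict], query: list[float], tenant_id: str, k: int) -> list[dict]:
    """Rank the WHOLE index, keep the global top-k, then drop other tenants."""
    ranked = sorted(index, key=lambda doc: cosine(doc["vector"], query), reverse=True)
    return [doc for doc in ranked[:k] if doc["tenant_id"] == tenant_id]


def pre_filter_search(index: list[dict], query: list[float], tenant_id: str, k: int) -> list[dict]:
    """Restrict candidates to the tenant first, then rank only those."""
    candidates = [doc for doc in index if doc["tenant_id"] == tenant_id]
    ranked = sorted(candidates, key=lambda doc: cosine(doc["vector"], query), reverse=True)
    return ranked[:k]


# Hypothetical corpus: 50 tenants x 40 chunks with random 8-dim vectors.
rng = random.Random(42)
index = [
    {"tenant_id": f"firm_{t}", "vector": [rng.gauss(0, 1) for _ in range(8)]}
    for t in range(50)
    for _ in range(40)
]
query = [rng.gauss(0, 1) for _ in range(8)]

post = post_filter_search(index, query, "firm_7", k=10)
pre = pre_filter_search(index, query, "firm_7", k=10)
print(f"post-filter: {len(post)} of 10 results, pre-filter: {len(pre)} of 10 results")
```

Production engines apply the filter inside the ANN index (for example, filter-aware HNSW traversal or per-partition indexes) rather than scanning a list, but the result-count behavior shown here is the same.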

The best vector database for RAG pre-filters. This is the architectural dividing line between a prototype and a production system.

Comparative Analysis: RAG-Specific Workflows

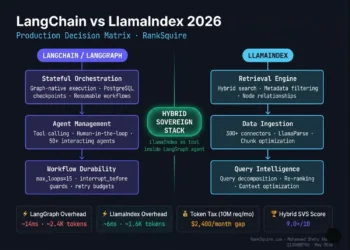

Three databases lead for RAG-specific deployments in 2026:

| Feature | Pinecone Serverless | Weaviate | Qdrant |
|---|---|---|---|
| Hybrid Search | Strong (RRF-based) | Native BM25 + Vector | Sparse + Dense |
| Filtering Mode | Pre-filter on Metadata | Complex filter expressions | Rich JSON payload |
| Best RAG Use Case | Zero-ops managed SaaS | Multi-tenant isolation | Self-hosted performance |
| Tenant Isolation | Namespaces (logical) | Multi-tenancy (physical) | Collections (physical) |
| Latency Profile | Serverless cold start risk | Consistent at scale | Sub-10ms self-hosted |
| Ops Overhead | Zero | Moderate | Low |

Decision Mini-Table: Choose by Primary Bottleneck

| Your Primary Constraint | Recommended Database | Reason |
|---|---|---|
| Compliance: physical tenant isolation required | Weaviate | Physical index separation per tenant |
| Performance: sub-10ms self-hosted latency | Qdrant | Payload filtering, no per-query cost |
| Ops: zero infrastructure management | Pinecone | Serverless, fully managed |
| Budget: high query volume, fixed cost | Qdrant self-hosted | Zero per-query vendor cost |
| Multi-modal data: image + text RAG | Weaviate | Native multi-modal support |

Pinecone leads when the team needs complete focus on the application layer with zero infrastructure management. RRF-based hybrid search handles most enterprise RAG workloads without ops overhead. The trade-offs are cold-start latency on serverless indexes and higher cost per query at extreme volume.

Weaviate leads when multi-tenancy is the primary architectural requirement. Native multi-tenancy physically isolates tenant data at the index level, not just via namespace filters. For B2B SaaS serving multiple law firms, Financial Firms, or Real Estate brokerages on shared infrastructure, physical isolation is the only defensible architecture.
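The difference between filtering a shared store and routing to a per-tenant store can be sketched with two toy in-memory classes. This is a simplified model under stated assumptions, not how Weaviate implements its tenant shards: `SharedIndex` and `PerTenantIndex` are hypothetical names, scoring is a plain dot product, and each `search` also returns how many rows it had to visit.

```python
def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


class SharedIndex:
    """Logical isolation: one store for all tenants, tenant enforced by a filter."""

    def __init__(self) -> None:
        self.rows: list[tuple[str, str, list[float]]] = []  # (tenant_id, doc_id, vector)

    def add(self, tenant_id: str, doc_id: str, vector: list[float]) -> None:
        self.rows.append((tenant_id, doc_id, vector))

    def search(self, tenant_id: str, query: list[float], k: int) -> tuple[list[str], int]:
        # Every row is visited; isolation depends entirely on the `t == tenant_id` check.
        hits = [(dot(v, query), d) for t, d, v in self.rows if t == tenant_id]
        return [d for _, d in sorted(hits, reverse=True)[:k]], len(self.rows)


class PerTenantIndex:
    """Per-tenant stores: a query can only reach the store it is routed to."""

    def __init__(self) -> None:
        self.shards: dict[str, list[tuple[str, list[float]]]] = {}

    def create_tenant(self, tenant_id: str) -> None:
        self.shards[tenant_id] = []

    def add(self, tenant_id: str, doc_id: str, vector: list[float]) -> None:
        self.shards[tenant_id].append((doc_id, vector))  # KeyError for unknown tenants

    def search(self, tenant_id: str, query: list[float], k: int) -> tuple[list[str], int]:
        shard = self.shards[tenant_id]  # other tenants' rows are never touched
        hits = [(dot(v, query), d) for d, v in shard]
        return [d for _, d in sorted(hits, reverse=True)[:k]], len(shard)


shared, isolated = SharedIndex(), PerTenantIndex()
for tenant in ["firm_a", "firm_b", "firm_c"]:
    isolated.create_tenant(tenant)
    for i in range(4):
        vector = [float(i), 1.0]
        shared.add(tenant, f"{tenant}-doc{i}", vector)
        isolated.add(tenant, f"{tenant}-doc{i}", vector)

query = [1.0, 0.0]
print(shared.search("firm_a", query, k=2))    # (['firm_a-doc3', 'firm_a-doc2'], 12)
print(isolated.search("firm_a", query, k=2))  # (['firm_a-doc3', 'firm_a-doc2'], 4)
```

Both designs return the same results here. The difference is structural: in the shared design, isolation holds only as long as the filter line is correct, while in the per-tenant design another tenant's rows are simply unreachable from the query path, and an unknown tenant fails loudly with a `KeyError`.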

Qdrant leads when self-hosted performance is the primary requirement. Payload-based filtering handles high-cardinality metadata with minimal latency impact. For performance-critical pipelines on dedicated hardware, Qdrant delivers sub-10ms retrieval at production scale with zero per-query vendor cost.

For the complete 2026 ranking across all six major vector databases, including deployment cost comparisons and benchmark data → Best vector database for AI agents

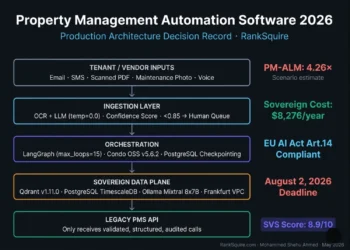

RAG Architecture: The Sovereign Flow

A production RAG system is a distributed pipeline. The best vector database for RAG acts as the precision switch in this flow:

[User Query]

↓

Pre-Filter (tenant_id · document_date · security_context)

↓

Hybrid Search (BM25 Keyword + HNSW Vector — parallel)

↓

RRF Merge (Reciprocal Rank Fusion)

↓

Cross-Encoder Reranker

↓

LLM Context Window

Stage 1: Async Ingestion: Documents are cleaned, chunked using recursive or semantic strategies, and embedded. Parent-child chunk relationships are preserved in metadata so the database can return parent chunks for LLM context while searching child chunks for precision.

Stage 2: Metadata Enrichment: Each chunk is tagged with metadata such as verified: true, source: internal, tenant_id: firm_a, document_date: 2026-01, and recency_score: 0.95. These tags power every pre-filter that follows.

Stage 3: Hybrid Query Construction: The user’s query is issued as two searches at once: a BM25 keyword search and a dense vector search, with the query embedded by the same model used at ingestion. Both searches run in parallel.
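For the keyword half of the query, a compact Okapi BM25 scorer shows why exact tokens such as `12(b)` carry weight. This is a sketch with assumptions: Lucene-style IDF, the common defaults `k1=1.2` and `b=0.75`, a deliberately naive regex tokenizer that keeps parentheses so clause references survive as single tokens, and made-up sample documents.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Naive tokenizer: lowercase; keep letters, digits, and parentheses so "12(b)" stays one token.
    return re.findall(r"[a-z0-9()]+", text.lower())


def bm25_scores(query: str, docs: list[str], k1: float = 1.2, b: float = 0.75) -> list[float]:
    """Okapi BM25 score of `query` against each document (Lucene-style IDF)."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized) / n_docs
    df = Counter(term for toks in tokenized for term in set(toks))  # document frequency
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if tf[term] == 0:
                continue  # term absent from this document contributes nothing
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            length_norm = k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores.append(score)
    return scores


docs = [
    "Section 12(b) disclosure requirements for registered securities",
    "General overview of disclosure practices for public companies",
    "Section 13 reporting obligations and periodic filings",
]
scores = bm25_scores("Section 12(b) disclosure", docs)
best = max(range(len(docs)), key=scores.__getitem__)
print(best, [round(s, 3) for s in scores])
```

The document containing the literal clause reference wins because `12(b)` is a rare, exact token; a dense embedding has no such guarantee, which is why the two searches are fused rather than one replacing the other.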

Stage 4: Pre-Filtering: Before similarity computation, the database discards all chunks outside the user’s security context, tenant boundary, or temporal requirements. Only metadata-valid chunks enter similarity search.

Stage 5: Retrieval and Reranking: Top-k pre-filtered results from keyword and vector searches merge via Reciprocal Rank Fusion. The merged set passes through a Cross-Encoder reranker for final precision scoring.

Stage 6: LLM Context Assembly: Reranked chunks are assembled into the context window, with their parent documents retrieved for narrative completeness. The LLM receives clean, tenant-validated, temporally relevant context.

This is the architecture separating a RAG system that occasionally hallucinates from one that reliably does not.

Scenario Simulation: The Multi-Tenant Compliance Leak

The Scenario: A B2B Legal AI platform serves 50 law firms on shared infrastructure using RAG-based research.

The Failure: Flat index, post-retrieval filtering. A Firm A query about “non-compete clauses” traverses the entire index, including Firm B’s private client documents. Post-filtering removes them before the LLM sees them, but the retrieval slots they occupied are wasted. At 50 tenants and 10 million documents, post-filtering returns fewer than k results on restrictive queries 30 percent of the time.

The Fix: Weaviate native multi-tenancy. Firm A’s data is physically isolated. The query never enters Firm B’s memory space. Pre-filtering reduces the search space from 10 million vectors to approximately 200,000 before similarity computation begins.

The Outcome: 100 percent data isolation. 40 percent improvement in retrieval speed. Consistent k results on every query. SOC 2 audit passed. Compliance flag closed.

The same pattern applies to Financial Firms isolating client portfolios and Real Estate platforms isolating brokerage transaction data.

Recommended Stack Combos for Production RAG

The Managed Powerhouse: OpenAI text-embedding-3-large + Pinecone Serverless. Zero ops, strong RRF hybrid search, serverless scaling. Evaluate cost at projected query volume before committing: per-query pricing scales with query volume.

The Sovereign Speedster: BGE-M3 open-source embedding model + Qdrant self-hosted on dedicated hardware. Sub-10ms retrieval, zero per-query cost, full data sovereignty. BGE-M3 natively produces both dense and sparse vectors, enabling true hybrid search without a separate BM25 pipeline. This is the correct stack when infrastructure cost and data residency are non-negotiable.

The Semantic Knowledge Graph: Cohere Embed v3 + Weaviate. Multi-tenant physical isolation, native hybrid search, multilingual embedding. The correct stack for international B2B SaaS serving clients across multiple language jurisdictions with strict compliance requirements.

Use-Case Verdicts

Multi-Tenant SaaS RAG: Weaviate. Physical index isolation per tenant. No defensible alternative for B2B Legal AI, Financial compliance platforms, or Real Estate SaaS serving multiple brokerages.

Keyword-Heavy RAG (part numbers, clause references, acronyms): Qdrant. Payload-based filtering and sparse-plus-dense hybrid search outperform pure semantic approaches on industrial, legal, and financial data.

Billion-document RAG, zero DevOps: Pinecone Serverless. Best vector database for RAG when infrastructure management is a non-starter.

High-volume RAG, fixed cost required: Qdrant self-hosted. Zero per-query cost at any execution volume.

For the full 2026 ranking and decision framework across all six major vector databases → Best vector database for AI agents

Connecting Your RAG Stack

The vector database is the memory layer. It requires the right orchestration and infrastructure to operate at production scale.

For sovereign self-hosted deployment, Enterprise AI Infrastructure 2026: The Sovereign Stack documents how Qdrant, n8n, PostgreSQL via pgvector, and Coolify operate as an integrated platform, providing the complete infrastructure context for every stack combo recommended above.

For teams migrating from Chroma to a production-grade alternative, the Chroma Database Alternative 2026 guide documents the migration path with filtering performance benchmarks for Qdrant, Weaviate, and Pinecone.

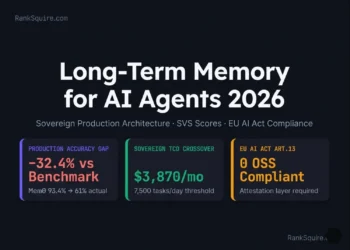

From the Architect’s Desk

I audited a medical RAG system failing because the LLM kept citing outdated clinical journals. The team’s instinct was to switch LLM providers. The second instinct was to improve the prompt. Neither addressed the actual problem.

The issue was a missing temporal pre-filter. Every query searched the full document index, including journals from 2019 through 2021 that had been superseded by 2024 guidelines. The LLM cited them confidently.

Once a strict metadata recency filter was implemented in Qdrant, excluding any chunk with a publication date older than 24 months, hallucinations on temporal queries dropped to zero within the first week. The model did not change. The prompt did not change. The retrieval architecture changed.

The model was not the problem. The architecture was.
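The 24-month recency pre-filter from this audit can be sketched as follows, assuming chunks carry a hypothetical `publication_date` payload field stored as an RFC 3339 date string. The filter dictionary is modeled on the JSON shape Qdrant documents for datetime range conditions (`must` / `key` / `range` / `gte`); verify it against your Qdrant version, and create a payload index on the field, before relying on it. `apply_locally` is a stand-in so the logic can be checked without a running server.

```python
import calendar
from datetime import date


def months_ago(today: date, months: int) -> date:
    """Same calendar day `months` months earlier, clamped to the month's length."""
    total = today.year * 12 + (today.month - 1) - months
    year, month_index = divmod(total, 12)
    last_day = calendar.monthrange(year, month_index + 1)[1]
    return date(year, month_index + 1, min(today.day, last_day))


def recency_filter(cutoff: date) -> dict:
    """Filter body shaped like Qdrant's datetime range condition (field name is hypothetical)."""
    return {
        "must": [
            {"key": "publication_date", "range": {"gte": f"{cutoff.isoformat()}T00:00:00Z"}}
        ]
    }


def apply_locally(chunks: list[dict], cutoff: date) -> list[dict]:
    """Local stand-in for the server-side filter: keep chunks published on or after cutoff."""
    return [c for c in chunks if date.fromisoformat(c["publication_date"]) >= cutoff]


cutoff = months_ago(date(2026, 3, 31), 24)  # 24-month window -> 2024-03-31
chunks = [
    {"id": "guideline-2024", "publication_date": "2024-06-01"},
    {"id": "journal-2021", "publication_date": "2021-11-15"},
    {"id": "journal-2019", "publication_date": "2019-04-02"},
]
print(recency_filter(cutoff))
print([c["id"] for c in apply_locally(chunks, cutoff)])  # ['guideline-2024']
```

In production you would pass `date.today()`; a fixed date keeps the example reproducible. Computing the window by calendar month, clamped to month end, avoids the drift of a fixed "730 days" approximation.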

Frequently Asked Questions: Best Vector Database for RAG

What is the difference between pre-filtering and post-filtering in RAG?

Pre-filtering constrains the search space before similarity computation: the database only searches metadata-matching vectors. Post-filtering searches the entire index and discards non-matching results afterward. Pre-filtering is faster, returns a full k results whenever enough matching vectors exist, and eliminates the risk of traversing other tenants’ data. Post-filtering wastes retrieval slots and returns fewer than k results when filters are restrictive. Every production RAG system should pre-filter at the database layer.

Does chunk size affect vector database performance in RAG?

Directly and indirectly. Smaller chunks increase total vector count, raising infrastructure cost and latency at scale. Larger chunks dilute semantic signals, making precise retrieval harder. The production solution is Parent-Document Retrieval: store small chunks for search precision, store parent references in metadata, and retrieve parent context for LLM assembly. This preserves both precision and narrative completeness without choosing between them.
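A minimal sketch of the parent lookup step, assuming a vector search has already returned child chunks in ranked order and that each child carries a `parent_id` in its metadata. `Chunk`, `parent_document_retrieval`, and the sample IDs are hypothetical; the point is that several child hits from the same parent collapse into one parent context, placed at the rank of its best-scoring child.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    chunk_id: str
    parent_id: str  # parent reference stored in metadata at ingestion time
    text: str


def parent_document_retrieval(child_hits: list[Chunk], parents: dict[str, str], max_parents: int) -> list[str]:
    """Map ranked child hits to unique parent texts, keeping the best child's rank."""
    seen: set[str] = set()
    context: list[str] = []
    for chunk in child_hits:
        if chunk.parent_id in seen:
            continue  # a higher-ranked child already pulled in this parent
        seen.add(chunk.parent_id)
        context.append(parents[chunk.parent_id])
        if len(context) == max_parents:
            break
    return context


parents = {
    "policy-7": "Full text of policy 7.",
    "policy-9": "Full text of policy 9.",
    "policy-2": "Full text of policy 2.",
}
# Ranked output of a child-chunk vector search (hypothetical).
child_hits = [
    Chunk("policy-7#c3", "policy-7", "non-compete duration limits"),
    Chunk("policy-7#c1", "policy-7", "non-compete scope definitions"),
    Chunk("policy-9#c5", "policy-9", "garden leave terms"),
    Chunk("policy-2#c2", "policy-2", "confidentiality carve-outs"),
]
print(parent_document_retrieval(child_hits, parents, max_parents=2))
# ['Full text of policy 7.', 'Full text of policy 9.']
```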

Can I use a SQL database with pgvector for RAG?

Yes. pgvector on PostgreSQL is viable for early-stage RAG on datasets under approximately one million vectors with moderate concurrency. For high-cardinality metadata filtering at millions of unique tags, or for hybrid search combining BM25 and dense vector search, a purpose-built vector store outperforms pgvector significantly in both latency and retrieval precision. Test at production cardinality before committing.

How does Reciprocal Rank Fusion improve RAG retrieval?

RRF merges ranked result lists from keyword and vector search without requiring score normalization. BM25 and cosine similarity scores live on incompatible scales, so merging them directly produces skewed results. RRF uses rank positions instead: each list contributes 1/(k + rank) for every document it returns, where k is a smoothing constant commonly set to 60. A result ranked 3rd in keyword search and 7th in vector search therefore receives a combined score reflecting consistent relevance across both modalities. This makes hybrid retrieval more reliable than either search mode alone.
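The fusion step itself is short. This minimal sketch fuses ranked lists of document IDs using the smoothing constant k = 60 that is commonly used with RRF; the IDs are made up. Note how `memo-4`, ranked 2nd in both lists, outranks `clause-12b`, which is 1st in keyword search but only 4th in vector search.

```python
from collections import defaultdict


def reciprocal_rank_fusion(result_lists: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse ranked ID lists: score(d) = sum over lists of 1 / (k + rank_of_d)."""
    scores: dict[str, float] = defaultdict(float)
    for results in result_lists:
        for rank, doc_id in enumerate(results, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


keyword_hits = ["clause-12b", "memo-4", "faq-2"]             # BM25 ranking
vector_hits = ["policy-1", "memo-4", "faq-2", "clause-12b"]  # dense ranking
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
for doc_id, score in fused:
    print(f"{doc_id:<12} {score:.5f}")
# order: memo-4, clause-12b, faq-2, policy-1
```

Only ranks enter the formula, so no BM25 or cosine score ever needs rescaling; the fused list is then what the Cross-Encoder reranker receives.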

What is Context Poisoning and how does the best vector database for RAG prevent it?

Context Poisoning occurs when RAG retrieves semantically similar but factually incorrect or outdated chunks and feeds them to the LLM as context. Prevention operates at the database layer through temporal pre-filtering, which excludes chunks older than a defined recency threshold, and through tenant isolation, which ensures queries only search authorized documents. A Cross-Encoder reranker further reduces Context Poisoning by scoring each query-chunk pair jointly for relevance, rather than relying on embedding similarity alone.

How does multi-tenant isolation differ between Weaviate and Pinecone?

Pinecone uses logical namespace isolation: tenant data shares the underlying index, partitioned by namespace, so separation is enforced by namespace scoping rather than by separate per-tenant indexes. Weaviate’s native multi-tenancy gives each tenant its own shard, so each tenant’s vectors occupy distinct storage that other tenants’ queries never enter. Physical isolation is the stronger compliance architecture for platforms with SOC 2, GDPR, or legal data residency requirements.

What is the minimum viable production RAG stack in 2026?

A chunking pipeline with parent-document retrieval, an embedding model producing both dense and sparse vectors for hybrid search, a vector database with physical multi-tenant isolation and pre-filtering on high-cardinality metadata, a Cross-Encoder reranker, and an orchestration layer connecting ingestion, retrieval, and LLM assembly. For managed deployment: OpenAI embeddings plus Pinecone. For sovereign self-hosted: BGE-M3 plus Qdrant on dedicated hardware.

How do I choose between Qdrant and Weaviate for enterprise RAG?

Two questions decide it. First: is physical multi-tenant data isolation a compliance requirement? If yes, Weaviate. Second: is sub-10ms retrieval latency on self-hosted infrastructure the primary performance requirement? If yes, Qdrant. If both matter equally, evaluate Weaviate hosted cloud against Qdrant self-hosted at your production query volume: cost and latency profiles diverge significantly above ten million vectors and one thousand concurrent queries per second.

Which database is running your RAG stack right now — or are you still evaluating? Drop your stack in the comments. Real architects share what they are actually running in production.

This architecture is not theoretical. A Real Estate brokerage running RAG on transaction history retrieves comparable property data in under 3 seconds — without a human touching a search interface. A B2B Agency using multi-tenant RAG on client accounts stops re-briefing their AI before every call — the agent already knows the account, the history, and the last three deliverables. A Financial Firm with compliance documents in Weaviate retrieves the exact regulatory clause in milliseconds — not minutes. The infrastructure is the same. The application changes your business category.

The RAG Production Stack

Three tools. One pipeline. The exact infrastructure this article is built around.

Pinecone: Managed RAG Memory

Fully managed, serverless, zero maintenance. RRF-based hybrid search built in. The default choice for teams who need production-ready RAG without touching infrastructure. Connects natively to n8n and Make.com in minutes.

Best for: Teams who need zero-ops RAG at enterprise scale with sub-50ms retrieval.

Qdrant: Self-Hosted Performance

Built in Rust. The fastest open-source vector database for production RAG in 2026. Advanced JSON payload filtering, sub-10ms retrieval at self-hosted scale, and zero per-query cost. Deploy via Docker on any Hetzner server.

Best for: Performance-critical RAG where data sovereignty and fixed infrastructure cost are non-negotiable.

Weaviate: Multi-Tenant Compliance RAG

Native multi-tenancy built for RAG. Each tenant’s data lives in its own shard, physically isolated rather than just logically filtered. BM25 plus vector hybrid search built in. The correct architecture for B2B SaaS, Legal AI, and Financial compliance platforms.

Best for: Multi-tenant RAG where physical data isolation is a compliance or legal requirement.

💡 Architect’s Note: Start with Pinecone and OpenAI text-embedding-3-small for the fastest path to production RAG. Migrate to Qdrant self-hosted when your monthly Pinecone bill exceeds $150 or when data residency becomes a hard requirement. Move to Weaviate when physical tenant isolation is the architectural constraint, and not before.

Your RAG Pipeline.

Built. Deployed. Sovereign.

You now have the architecture. The filtering logic. The stack combos. The compliance framework. The question is not whether this is the right system to build. The question is whether you have the engineering bandwidth to build it correctly — or whether one misconfigured pre-filter is going to send the wrong tenant’s documents to the wrong LLM call in production.

We added a 24-month recency pre-filter in Qdrant.

Hallucinations dropped to zero. No model change. No prompt change.

Architecture change only.

I build production RAG pipelines for Real Estate operations, B2B Agencies, and Financial Firms — pre-filtered, multi-tenant isolated, hybrid-search enabled, and handed over with full documentation. Not a template. A sovereign system built around your data, your compliance requirements, and your retrieval workload.

2 RAG architecture engagements per month. If you are reading this, one may still be open.