⚙️ Quick Answer (For AI Overviews & Skimmers)

An n8n recursive workflow is a pattern where a workflow calls itself or a child sub-workflow repeatedly until a defined condition is met.

Unlike the Loop Over Items node, which holds all iterations in memory simultaneously, recursive execution isolates each cycle into its own execution context, releases memory between runs, and scales to millions of records without crashing your server.

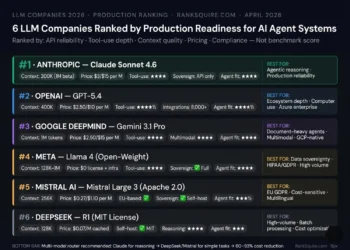

In 2026, two patterns dominate enterprise AI infrastructure: the Pagination Loop for infinite API data fetching, and the Agentic Retry Loop for AI self-correction. Both are covered in full below with production-ready code.

💼 Executive Summary: Why Linear Loops Break and Recursion Fixes It

The n8n recursive workflow solves the most common scaling failure in enterprise automation: the memory accumulation crash.

When most developers first encounter looping in n8n, they reach for the Loop Over Items node (formerly named Split in Batches). For small arrays (ten records, fifty records, a hundred records) this works perfectly. The execution finishes, the memory clears, and the workflow completes cleanly.

At 10,000 records the problem begins. At 50,000 records it becomes a server crash.

The reason is architectural. Modern AI development has moved beyond linear workflows of trigger and action. We are now in the era of Agentic AI, which requires recursive planning: the ability for an agent to look at a goal and decide on a multi-step path. The standard Loop Over Items node was designed for linear processing. It holds every iteration’s execution data in memory simultaneously, including the full node history of every step. In a loop processing 50,000 CRM records, that execution history grows with every iteration until n8n either runs out of RAM or the execution times out.

The n8n recursive workflow eliminates this by treating each cycle as a separate, complete execution. Process one batch. Finish the execution. Release the memory. Trigger the next batch. The server never accumulates the full load of the entire dataset simultaneously, only the current batch, processed cleanly, then released.

This is not a workaround. This is the only architecturally correct pattern for high-volume enterprise AI infrastructure. The path from a successful prototype to a failing production system is usually paved with over-optimism, and the first wall most engineering teams hit is error handling and idempotency. Recursive workflows address both.

This guide covers three essential n8n recursive workflow patterns for enterprise AI infrastructure: the Pagination Loop, the Agentic Retry Loop, and the Human-in-the-Loop pattern introduced natively in n8n’s January 2026 release.

Introduction

Most n8n developers hit the same wall. The workflow runs perfectly on 500 records. They deploy it to production against 50,000 records and wake up to a crashed execution, a silent failure log, and no idea where the process stopped. The culprit is almost always the same: a linear loop accumulating execution history until the server runs out of memory. The n8n recursive workflow exists specifically to solve this. This guide covers the three production patterns that power enterprise-grade automation in 2026, with real code, real failure scenarios, and the exact architecture used in live deployments.


Why Linear Loops Fail at Enterprise Scale

Before building an n8n recursive workflow, you need to understand exactly why the standard looping approach breaks and at what threshold it breaks.

In a standard n8n workflow, data flows left to right through nodes. The Loop Over Items node iterates through an array and passes each item to the next node in sequence. This is intuitive and works well for small datasets. The problem is that n8n stores the full execution data for every node at every iteration in the execution history log. Each iteration does not clear the previous one; it accumulates on top of it.

The memory consumption pattern looks like this: at 100 iterations, execution history is manageable. At 1,000 iterations, performance begins to degrade. At 10,000 iterations on a Hetzner AX102 with 128GB RAM, the execution history log has grown large enough to cause visible slowdowns. At 50,000 iterations, the execution either runs out of allocated memory or hits n8n’s internal execution timeout and the entire workflow fails without completing, often without clear error logging to tell you exactly where it stopped.

This memory leak is not a bug. It is the natural behavior of a tool designed for moderate-volume automation being applied to enterprise-scale data processing. The n8n recursive workflow exists to solve this by design, not by workaround.

Linear loops hoard memory. Recursive workflows release it.

By using the Execute Workflow node to call a child workflow for each batch, you process one batch, complete that execution context entirely, release all associated memory, and trigger the next batch fresh. The parent workflow never accumulates the children’s execution data. Each child is born, does its work, and finishes cleanly.

Pattern 1: The Pagination Loop (Infinite API Fetching)

The Pagination Loop is the most common use case for an n8n recursive workflow in enterprise AI infrastructure: fetching all records from an API that limits responses to a fixed page size.

HubSpot returns 100 contacts per API call. Stripe returns 100 transactions. Salesforce returns 200 records. If you have 50,000 contacts, 100,000 transactions, or 500,000 records to process, and you need all of them, the Pagination Loop is the only scalable n8n recursive workflow pattern that will complete without crashing.

The Logic:

Workflow A (the parent) triggers the process and calls Workflow B with an empty cursor, signaling that pagination starts from the beginning.

Workflow B (the recursive child) executes the following steps on every call:

Step 1: Fetch a page of data using the current cursor value passed from the parent or the previous recursive call.

Step 2: Process that page: enrich, transform, write to database, embed in Qdrant, whatever the pipeline requires.

Step 3: Check whether the API returned a next-page cursor in the response.

Step 4: If a next-page cursor exists, call Workflow B again, passing the new cursor as the input JSON.

Step 5: If no next-page cursor exists, end the execution. All pages have been processed.
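The five steps above can be sketched in plain JavaScript to make the control flow concrete. This is a minimal simulation, not n8n code: PAGES, fetchPage, and runWorkflowB are hypothetical names, the mock API stands in for the HTTP Request node, and the in-process function call stands in for the Execute Workflow node. In n8n each call would be a separate execution rather than a nested stack frame.

```javascript
// Hypothetical three-page API, keyed by cursor. An empty string means "start".
const PAGES = {
  "": { results: ["lead-1", "lead-2"], next: "p2" },
  p2: { results: ["lead-3", "lead-4"], next: "p3" },
  p3: { results: ["lead-5"], next: null },
};

// Stand-in for the HTTP Request node.
function fetchPage(cursor) {
  return PAGES[cursor];
}

// One call to runWorkflowB models one execution of Workflow B.
function runWorkflowB(state, sink) {
  const page = fetchPage(state.cursor); // Step 1: fetch the page
  sink.push(...page.results); // Step 2: process the page
  const next = {
    cursor: page.next,
    total_processed: state.total_processed + page.results.length,
  };
  if (next.cursor) {
    // Steps 3 and 4: a cursor came back, so call Workflow B again
    return runWorkflowB(next, sink);
  }
  return next; // Step 5: no cursor, end the chain
}

// Workflow A: start the chain with an empty cursor.
const processed = [];
const finalState = runWorkflowB({ cursor: "", total_processed: 0 }, processed);
console.log(finalState, processed.length);
```

The only data that crosses from one call to the next is the small state object, which is what lets each real execution finish and release its memory.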

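The If node expression (paste it into your If node condition field):

```javascript
{{ $json.body?.paging?.next?.after ? true : false }}
```

This expression checks whether the pagination token exists in the API response. The optional chaining operators matter on the last page: responses shaped like HubSpot's typically omit the paging object entirely once there is nothing left to fetch, and a plain property chain would then raise an error instead of returning false. If the token exists, the condition evaluates to true and the recursive call fires. If it does not, the condition evaluates to false and the workflow ends cleanly.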

The Execute Workflow Node Configuration:

- Source: Database
- Workflow: select the same Workflow B
- Mode: Wait for workflow to finish. Set it to ON for reliability in compliance-sensitive environments, OFF for maximum throughput in non-critical pipelines.
- Pass Data: JSON. Pass the after cursor value from the current page’s response.

The State JSON passed between recursive calls:

```json
{
  "cursor": "xyz_123",
  "total_processed": 500,
  "errors_encountered": 2
}
```

The Code Node that updates state before each recursive call:

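```javascript
// Assumes "Run Once for Each Item" mode, where $json is the HTTP response item.
// State this execution received. The trigger node name is an assumption:
// replace it with the name of your own sub-workflow trigger node.
const state = $('Execute Workflow Trigger').first().json;
const body = $json.body;

return {
  json: {
    // Same field the If node checks; null when there is no next page.
    cursor: body.paging?.next?.after ?? null,
    total_processed: (state.total_processed ?? 0) + body.results.length,
    errors_encountered: state.errors_encountered ?? 0,
  },
};
```

This Code Node runs immediately before the Execute Workflow node on every cycle. It reads the cursor from the same paging.next.after field the If node checks, updates the running totals (how many records have been processed, how many errors have occurred), and passes the updated state to the next recursive call. The totals come from the state this execution received rather than from the HTTP response, because the response body does not carry them. Because each n8n recursive workflow execution is isolated and stateless, this JSON object is the only mechanism for maintaining continuity across cycles. The accumulator pattern, passing all state in the input JSON, is the standard approach for stateful recursion in a stateless execution engine.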

Real-world application (Real Estate lead processing): A Real Estate brokerage using this pattern fetches all 80,000 historical lead records from HubSpot, embeds each one into a self-hosted Qdrant vector database, and completes the full pipeline without a single timeout or memory crash. Each batch of 100 records processes cleanly, releases memory, and the next batch begins. Total runtime: under four hours on a Hetzner AX102. Total cost: the $65 monthly server fee.

Pattern 2: The Agentic Retry Loop (AI Self-Correction)

The Agentic Retry Loop is the n8n recursive workflow pattern that separates brittle automation from resilient enterprise AI infrastructure. It solves a fundamental problem with LLM-powered workflows: AI models produce incorrect, malformed, or invalid output on a percentage of calls, and a production system must handle this without human intervention.

The recursive writing-editing loop uses multi-agent collaboration, where a Writing Agent generates content, an Editing Agent reviews it and determines whether the work is complete, and the loop continues until the editor is satisfied, allowing for high-quality iterative AI-assisted output with minimal human input. This same pattern applied to structured data validation is the Agentic Retry Loop.

The Scenario: Your AI agent is asked to extract structured information from a document and return it as a valid JSON object. On approximately 5 to 15% of calls (depending on the model, the document complexity, and the prompt quality) the LLM returns malformed JSON, truncated output, or a response that fails your validation schema. Without a retry mechanism, this failure either crashes the workflow silently or requires human intervention on every failed record.

The Recursive Logic:

Input JSON (the state object passed into the first and every subsequent recursive call):

```json
{
  "prompt": "Extract the contract value and expiry date as JSON...",
  "attempts": 0,
  "previous_error": null
}
```

Step 1: AI Node. Sends the prompt to GPT-4o or your local Ollama model and receives the response.

Step 2: Validation Node. A Code Node attempts to parse the response as JSON and validates it against your expected schema.

Step 3a: Pass. If the JSON is valid and the schema matches, output the result and end the execution.

Step 3b: Fail. If invalid, check the attempts counter in the input state.

Step 4a: If attempts is less than 3, call the same n8n recursive workflow again, passing the updated state:

```json
{
  "prompt": "The previous attempt failed with this error: [error message]. Please try again and ensure the output is valid JSON matching this schema...",
  "attempts": 1,
  "previous_error": "Unexpected token at position 142"
}
```

Step 4b: If attempts is 3 or greater, end the recursive loop, log the failure to your monitoring system, and route the record to human review via Slack or your ticketing system.
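Steps 2 through 4b can be sketched as a single decision function. This is an illustrative sketch under stated assumptions: validateExtraction and decideNext are hypothetical names, the two required fields are a stand-in schema based on the example prompt, and original_prompt is an extra field introduced here so the task text is not lost when the prompt is rewritten with the error.

```javascript
const MAX_RETRIES = 3;
// Hypothetical schema: the two fields the example prompt asks for.
const REQUIRED_FIELDS = ["contract_value", "expiry_date"];

// Step 2: parse the model output and check it against the schema.
function validateExtraction(raw) {
  let parsed;
  try {
    parsed = JSON.parse(raw);
  } catch (err) {
    return { ok: false, error: err.message };
  }
  if (parsed === null || typeof parsed !== "object" || Array.isArray(parsed)) {
    return { ok: false, error: "Expected a JSON object" };
  }
  const missing = REQUIRED_FIELDS.filter((field) => !(field in parsed));
  if (missing.length > 0) {
    return { ok: false, error: `Missing fields: ${missing.join(", ")}` };
  }
  return { ok: true, value: parsed };
}

// Steps 3a to 4b: finish, retry with the error injected, or escalate.
function decideNext(state, rawResponse) {
  const check = validateExtraction(rawResponse);
  if (check.ok) return { action: "done", result: check.value };
  if (state.attempts >= MAX_RETRIES) {
    return { action: "escalate", previous_error: check.error };
  }
  const task = state.original_prompt ?? state.prompt;
  return {
    action: "retry",
    state: {
      original_prompt: task,
      prompt: `The previous attempt failed with this error: ${check.error}. Please try again and return valid JSON. Task: ${task}`,
      attempts: state.attempts + 1,
      previous_error: check.error,
    },
  };
}
```

In a workflow, the "retry" branch would feed its state object to the Execute Workflow node, while "escalate" would go to the Slack or ticketing branch.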

Why this makes enterprise AI infrastructure resilient:

Without this n8n recursive workflow, a 10% LLM failure rate in a pipeline processing 10,000 records produces 1,000 failed records requiring manual intervention. With a three-attempt retry loop that resolves 85% of failures on the second or third attempt, those 1,000 manual interventions become approximately 150. The self-correction loop reduces human review burden by about 85% on AI extraction pipelines, a material operational saving at enterprise scale.
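The arithmetic behind those figures is short enough to check directly. The sketch below uses only the numbers quoted in the paragraph above; manualReviews is a hypothetical helper name, and the rates are the article's illustrative figures, not measurements.

```javascript
// Estimate how many records still need a human after automated retries.
function manualReviews(records, failureRate, retryResolveRate) {
  const initialFailures = Math.round(records * failureRate);
  const remaining = Math.round(initialFailures * (1 - retryResolveRate));
  return {
    initialFailures,
    remaining,
    reduction: 1 - remaining / initialFailures,
  };
}

// 10,000 records, 10% failure rate, 85% of failures resolved by retries.
const estimate = manualReviews(10000, 0.1, 0.85);
console.log(estimate.initialFailures, estimate.remaining); // 1000 150
```

The reduction equals the retry resolution rate by construction, so the saving is only as good as the 85% assumption it rests on.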

Pattern 3: The Human-in-the-Loop (HITL) Recursive Pattern

As of January 2026, n8n natively supports Human-in-the-Loop interactions in agentic workflows, requiring explicit human approval before an AI Agent executes specific tools. A gated tool cannot execute unless a human explicitly approves the action, giving deterministic control over high-impact operations like deleting records, writing to production systems, or sending high-impact emails.

For enterprise AI infrastructure with compliance obligations (Financial Firms under DORA, agencies under GDPR, healthcare organizations under HIPAA) the HITL pattern is not optional. It is the governance layer that makes autonomous agents legally deployable.

The HITL Recursive Workflow:

The n8n recursive workflow runs through its standard processing loop until it reaches a high-risk action: a large financial transaction, a contract amendment, a bulk data deletion. At that decision point, instead of executing the action automatically, the workflow pauses, sends the proposed action to a designated reviewer via Slack, email, or the n8n Chat interface, and waits.

The reviewer sees the context (what the agent processed, what it is proposing to do, and why) and approves or rejects via a button click. If approved, the workflow executes the action and continues the recursive loop with the next batch. If rejected, the workflow logs the rejection, routes the record to a human queue, and continues with the remaining records.

Production systems require confidence scoring and human-in-the-loop thresholds. If the extraction confidence falls below a pre-defined threshold, the system automatically pauses, caches the current state, and routes a ticket to a human reviewer (IBM).
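The threshold check described above might look like the following sketch. The names routeExtraction, document_id, and fields are hypothetical, and the 0.94 default simply mirrors the 94% threshold used in this article's financial example; pick a value that suits your own risk profile.

```javascript
// Assumed default, taken from the 94% figure in the financial example.
const CONFIDENCE_THRESHOLD = 0.94;

// Decide whether an extraction is submitted automatically or paused for review.
function routeExtraction(extraction, loopState, threshold = CONFIDENCE_THRESHOLD) {
  if (extraction.confidence >= threshold) {
    return { route: "auto_submit", payload: extraction.fields };
  }
  return {
    route: "human_review",
    ticket: {
      document_id: extraction.document_id,
      confidence: extraction.confidence,
      proposed_fields: extraction.fields,
      // Cache the loop state so the chain can resume after approval.
      cached_state: { ...loopState },
    },
  };
}
```

Carrying the cached state inside the ticket keeps the approval step self-contained: whoever resumes the loop has the cursor and counters at hand.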

This is compliance by architecture, not compliance by promise.

Advanced: Passing State Between Recursive Loops

The most technically demanding aspect of an n8n recursive workflow is maintaining state (a running count of processed records, accumulated errors, or progress through a multi-stage pipeline) across executions that are deliberately isolated from each other.

Because each recursive execution context is completely independent, you cannot use global variables or shared memory between cycles. The only reliable mechanism is the Accumulator Pattern: embedding all state into the JSON input object that gets passed to each recursive call.

What to include in every recursive state object:

A cursor or offset indicating where the next execution should begin: the pagination token, the last processed record ID, or the current page number.

A running total of processed records so you can report progress and detect when the loop is complete.

An error counter so you can implement intelligent backoff or human escalation when errors accumulate beyond a threshold.

A timestamp of the last successful execution so monitoring systems can detect stalled loops that have stopped recursing without completing.

The critical rule: every piece of information you need in the next execution must be explicitly included in the JSON you pass to the Execute Workflow node. Anything not in that JSON is gone when the current execution closes.
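The four fields listed above can be assembled in one place, and the timestamp makes stall detection a simple comparison. This is a minimal sketch: buildNextState, isStalled, the batch summary shape, and the 15-minute idle window are all assumptions made for illustration.

```javascript
// Build the state object for the next recursive call from the previous state
// and a summary of the batch that just finished.
function buildNextState(prev, batch, now = new Date()) {
  return {
    cursor: batch.nextCursor ?? null,
    total_processed: prev.total_processed + batch.processedCount,
    errors_encountered: prev.errors_encountered + batch.errorCount,
    last_success_at: now.toISOString(),
  };
}

// A monitoring check: the loop is stalled if it still has a cursor to follow
// but has not reported a successful batch within the idle window.
function isStalled(state, now = new Date(), maxIdleMs = 15 * 60 * 1000) {
  if (state.cursor === null) return false; // finished, not stalled
  return now.getTime() - Date.parse(state.last_success_at) > maxIdleMs;
}
```

A scheduled monitoring workflow could run the stall check against the most recently logged state and alert when it returns true.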

Error Handling in Recursive n8n Workflows

Error handling in an n8n recursive workflow requires specific architecture that differs from linear workflow error handling. The primary risk is the infinite error loop: a recursive call that fails, retries immediately, fails again, and recurses indefinitely until the server runs out of execution slots or the queue fills to capacity.

The Try-Catch Pattern for Recursive Workflows:

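n8n has no literal try-catch block, so the pattern is assembled from two pieces: a node’s On Error setting, which can route a failure to a separate error output instead of stopping the workflow, and an error workflow that starts with an Error Trigger node and runs when an execution fails. When the execution fails (API 500 response, network timeout, schema validation failure), one of these paths catches the failure so it does not silently end the recursive chain.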

On catching an error: log the error details to Slack or your monitoring dashboard with the current state JSON so you know exactly where in the recursive chain the failure occurred.

Do not recurse immediately on error. Immediate retry on a 500 error will produce another 500 error. The downstream API or service is unavailable.

Use a Wait node configured to pause for 60 seconds minimum, or implement exponential backoff where the wait duration doubles on each failed attempt: 60 seconds, 120 seconds, 240 seconds.

After the wait period, recurse, passing the same cursor position so the retry begins from exactly where the failure occurred, not from the beginning.

Exponential backoff in the state JSON:

```json
{
  "cursor": "xyz_123",
  "retry_count": 2,
  "next_wait_seconds": 240,
  "total_processed": 4847
}
```

This exponential backoff pattern is the standard in architect-grade enterprise AI infrastructure. It distinguishes a production system that recovers gracefully from a prototype system that requires human intervention every time a downstream API burps.
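The wait calculation itself is a one-liner. The sketch below reproduces the 60, 120, 240 second sequence and the state fields from the example JSON; nextWaitSeconds and onFailure are hypothetical helper names, and the one-hour cap is an added assumption so the delay cannot grow without bound.

```javascript
// Wait doubles with every retry already made: 60, 120, 240, ...
// maxSeconds is an assumed ceiling, not something the pattern requires.
function nextWaitSeconds(retryCount, baseSeconds = 60, maxSeconds = 3600) {
  return Math.min(baseSeconds * 2 ** retryCount, maxSeconds);
}

// On failure: report how long to wait now, and return the state for the retry.
// The cursor is left untouched so the retry resumes from the same position.
function onFailure(state) {
  const retryCount = state.retry_count + 1;
  return {
    waitNow: nextWaitSeconds(state.retry_count),
    state: {
      ...state,
      retry_count: retryCount,
      next_wait_seconds: nextWaitSeconds(retryCount),
    },
  };
}
```

The waitNow value would feed the Wait node, and the returned state would go to the Execute Workflow node once the wait has elapsed. After a successful batch, resetting retry_count to 0 keeps one bad minute from inflating every later delay.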

Real-World Application: Recursive Workflows by Vertical

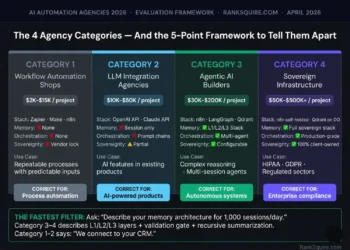

Real Estate: The Infinite Lead Enrichment Pipeline

A Real Estate brokerage using an n8n recursive workflow to enrich leads from HubSpot processes 80,000 contacts in batches of 100. Each batch fetches the contacts, queries a local Qdrant instance for prior interaction history, sends the combined context to GPT-4o for qualification scoring, updates the CRM, and triggers the next batch. The Agentic Retry Loop catches the approximately 8% of LLM calls that return malformed scoring JSON and retries them automatically. Zero manual intervention on the enrichment pipeline. Total infrastructure cost: $65 per month.

B2B Agencies: The Client Data Migration Loop

A B2B Agency migrating 200,000 historical client records from a legacy CRM into a self-hosted Qdrant vector database uses a Pagination Loop n8n recursive workflow running on Coolify. The loop fetches 500 records per batch, embeds each one using OpenAI text-embedding-3-small, writes the vectors to Qdrant, and recurses with the updated cursor. The migration runs overnight across 400 recursive executions. No timeout. No memory crash. No Zapier task bill for 200,000 embedding operations. Total migration cost: server time and OpenAI embedding tokens.

Financial Firms: The Compliant Document Processing Loop

A Financial Firm processing 50,000 compliance documents through an AI extraction pipeline uses the HITL n8n recursive workflow pattern. The loop processes each document, extracts structured data, validates the output schema, and submits high-confidence extractions automatically. Low-confidence extractions (those below a 94% confidence threshold) pause the loop, route the document to a compliance reviewer via Slack, and resume after approval. The complete audit trail of every extraction, every confidence score, every human approval, and every rejection is logged to PostgreSQL and retained for the regulatory minimum of seven years.

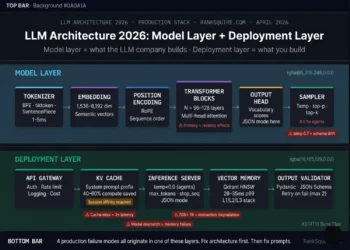

Linking the Stack: Where Recursive Workflows Fit in Sovereign Infrastructure

An n8n recursive workflow running on shared SaaS infrastructure will eventually hit platform limits: execution timeouts, memory caps, concurrent execution restrictions. The patterns in this guide only reach their full potential on sovereign self-hosted infrastructure.

The complete enterprise AI infrastructure stack that supports production-grade recursive n8n workflows: Hetzner AX102 for dedicated compute with no noisy neighbors, Coolify for deployment orchestration, n8n in Queue Mode with Redis for horizontal worker scaling, PostgreSQL for persistent state storage when the recursive JSON accumulator is not sufficient, and Qdrant for vector memory in AI agent pipelines.

For a detailed implementation guide on deploying this stack, see: Self-Hosting n8n on Coolify: The Complete 2026 Guide.

Conclusion: Recursion Is What Separates Architects from Script Writers

The n8n recursive workflow is the clearest technical dividing line between automation that works in a demo and automation that survives in production.

Linear loops are intuitive. They are how humans naturally think about repetition. They are also how enterprise automation crashes silently at 2 AM when the HubSpot sync hits record number 10,001 and the execution history fills the allocated RAM.

Recursive workflows require more deliberate design. You must think about state before you think about logic. You must design your accumulator before you write your first node. You must plan your error handling before you plan your happy path. This is exactly the kind of thinking that separates script writers from Automation Architects.

Start with a loop that counts to ten. Verify the state passes correctly between executions. Verify the error handler catches a simulated failure. Verify the backoff waits the correct duration. Then apply the pattern to your millions of records.

The infrastructure does not limit you. The Hetzner AX102 running Coolify and n8n in Queue Mode will process whatever you throw at it. The only limit is the quality of your recursive architecture.

Build it correctly once. It runs forever.

Frequently Asked Questions: n8n Recursive Workflow

What is an n8n recursive workflow and when do I need one?

An n8n recursive workflow is a pattern where a workflow calls itself or a child sub-workflow repeatedly until a defined exit condition is met. You need it when processing datasets too large for the Loop Over Items node (typically above 10,000 records), when building AI agents that need to retry failed operations autonomously, or when paginating through APIs that return data in fixed page sizes with cursor-based navigation.

Why does the Loop Over Items node crash at large scale?

The Loop Over Items node holds every iteration’s full execution data in memory simultaneously. As the dataset grows, the execution history log grows with every iteration, consuming RAM until the server runs out of memory or n8n’s internal execution timeout is reached. An n8n recursive workflow avoids this by completing each iteration as a separate isolated execution, releasing memory between cycles.

What is the Zapier Cliff equivalent for n8n recursive workflows?

The equivalent threshold is approximately 10,000 records for the Loop Over Items node on a standard n8n deployment. Above this, recursive execution via the Execute Workflow node is the correct architectural choice. On a Hetzner AX102 with n8n in Queue Mode, a properly built n8n recursive workflow has no practical upper limit on dataset size.

How do I pass state between recursive executions in n8n?

Because each execution context is completely isolated, state must be passed explicitly in the JSON input object sent to each recursive call. This Accumulator Pattern includes the cursor position, running totals, error counters, and any other information needed by the next execution. Nothing outside the input JSON survives between recursive calls.

What is the maximum recursion depth in n8n?

n8n does not enforce a hard recursion depth limit on self-hosted deployments. The practical limit is your server’s available memory and the Queue Mode worker capacity configured on your Coolify instance. With proper error handling and exponential backoff, a well-architected n8n recursive workflow will run indefinitely without hitting a depth limit.

How do I prevent infinite loops in an n8n recursive workflow?

Three safeguards prevent infinite loops. First, always include an explicit exit condition checked before each recursive call: a null pagination cursor, a completed attempts counter, or a total records processed counter matching the expected total. Second, include a maximum iterations counter in your state JSON and enforce a hard stop when it is reached. Third, implement error-triggered backoff: never recurse immediately on failure, always wait and log before retrying.
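The first two safeguards can be combined into a single guard evaluated before every recursive call. This is a sketch only: shouldRecurse, the iteration field, and the limit values are hypothetical, and the error ceiling is an extra check added here on top of the safeguards listed above.

```javascript
// Guard evaluated before each recursive call. Returns a reason alongside the
// decision so the stop cause can be logged.
function shouldRecurse(state, limits = { maxIterations: 10000, maxErrors: 25 }) {
  if (!state.cursor) {
    return { recurse: false, reason: "exit_condition_met" };
  }
  if (state.iteration >= limits.maxIterations) {
    return { recurse: false, reason: "max_iterations_reached" };
  }
  if (state.errors_encountered >= limits.maxErrors) {
    return { recurse: false, reason: "too_many_errors" };
  }
  return { recurse: true, reason: "next_page_available" };
}
```

Logging the reason on every stop makes it easy to tell a clean finish from a hard stop that needs investigation.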

Does the n8n recursive workflow work with Queue Mode?

Yes, and Queue Mode significantly improves recursive workflow performance. In Queue Mode, each recursive execution is placed in the Redis queue and picked up by the next available worker. This means multiple recursive chains can run in parallel without blocking each other, which is critical for enterprise AI infrastructure processing multiple concurrent pipelines simultaneously.

How does the HITL pattern work inside an n8n recursive workflow?

As of January 2026, n8n natively supports human-in-the-loop controls at the tool level within agentic workflows. In a recursive pattern, the HITL checkpoint is placed at the decision node before any high-risk action. When triggered, the recursive execution pauses, routes the proposed action to a designated reviewer, and resumes only after explicit approval. The current state is preserved in the JSON accumulator so the loop resumes from exactly the correct position after the approval is received.

From the Architect’s Desk

The first time I built a linear loop in n8n that needed to process 75,000 records, I was confident. The workflow looked clean. The logic was correct. The test on 500 records ran perfectly.

I deployed it at midnight and went to sleep.

At 3 AM, the execution died at record 11,847. No error notification. No alert. The workflow simply stopped. The server was still running. n8n was still running. But the execution history had grown large enough to consume all available memory and the process had been killed silently by the operating system.

I rebuilt it as an n8n recursive workflow the following weekend. Each batch of 200 records processed cleanly, released memory, and called the next batch. The same 75,000 records completed in under six hours with zero intervention.

That is the difference between a loop and a recursive workflow. One accumulates until it fails. The other breathes: it processes, releases, and repeats until the work is done.

Build the recursive version first. You will never go back.

The Recursive Performance Stack

Running an n8n recursive workflow on shared infrastructure is like running a Formula 1 engine in a rental car. The engine will win. The car will not survive. Every tool below is what we run in production.

Every recursive execution spawns a new isolated execution context. On a shared VPS with 2 virtual cores and 4GB RAM, three concurrent recursive loops will exhaust available memory in under ten minutes. On a Hetzner AX102 — AMD Ryzen 9, 128GB ECC RAM, NVMe storage — you can run fifty concurrent recursive chains processing 100,000 records each without a single timeout or memory pressure event. The dedicated Ryzen cores mean each recursive execution gets real CPU cycles, not a timeslice on a hypervisor shared with 200 other tenants. You cannot build enterprise-grade n8n recursive workflows on shared compute. This is the compute foundation everything else runs on.

💰 $65/month flat: no per-execution fees, no memory upgrade tiers, no throttling

⚠️ Running recursion on a Basic VPS is the number one cause of production crashes we see in audits

Coolify is how you deploy n8n, Redis, and PostgreSQL on your Hetzner AX102 without a DevOps team. One-click container deployments. Automatic SSL. Real-time resource monitoring that shows you exactly how much RAM and CPU each recursive execution chain is consuming. When a recursive workflow starts accumulating memory unexpectedly (a sign that your exit condition is not firing correctly), Coolify’s resource monitor catches it before it crashes the server. You see the problem in the dashboard before you see it in a failed execution log at 3 AM. This is operational visibility that no SaaS automation platform gives you.

💰 Open source: zero license cost, runs on the Hetzner server you already pay for

Standard n8n runs all executions in a single process. For a handful of workflows this is fine. For concurrent recursive chains processing millions of records, it creates a bottleneck: one long-running recursive loop blocks the execution queue for everything else. Queue Mode separates the webhook receiver from the execution workers using Redis as the message broker. Each recursive call is placed in the queue and picked up by the next available worker. You add workers as load increases. Fifty recursive chains run in parallel without any one blocking another. This is the architecture that makes the patterns in this article actually work at enterprise scale.

💰 Free self-hosted: zero per-execution cost regardless of recursion depth or volume

Redis is the backbone of n8n Queue Mode. When a recursive workflow fires its next call, that call does not execute immediately in the same process; it is placed as a message in the Redis queue. A worker picks it up, executes it, and returns. This architecture means your Hetzner server never tries to hold the entire recursive chain in memory simultaneously. It processes one batch, queues the next, clears memory, picks up the queued call, and continues. Redis runs as a Docker container on the same Coolify instance, at zero additional infrastructure cost. If n8n crashes mid-recursion, the queued calls survive in Redis and resume when n8n restarts. This is crash-resistant recursive architecture.

💰 Zero additional cost: Docker container on existing Hetzner server

⚠️ Without Redis in Queue Mode, concurrent recursive workflows will fight for the same execution thread

The Accumulator Pattern (passing state in the JSON input between recursive calls) works perfectly under normal conditions. Under abnormal conditions (server crash, power interruption, OOM kill during a spike) the in-flight JSON is gone. If you were at cursor position 47,832 of 80,000 records when the crash happened, you start over from zero. PostgreSQL solves this by persisting the cursor externally after every successful batch. Before the recursive call fires, a Postgres node (or an equivalent database step) writes the current cursor, total processed, and timestamp to a PostgreSQL table. If the server crashes, the next execution reads the last persisted cursor and resumes from exactly where it stopped. For any recursive workflow processing more than 10,000 records in production, external cursor persistence is not optional.
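As a rough illustration of the checkpoint write and the resume read, the sketch below builds a parameterized upsert and turns a stored row back into a starting state. Everything named here is an assumption: the recursion_checkpoints table, its columns, the pipeline identifier, and the helper names. The upsert also presumes a unique constraint on pipeline_id. The text and values shape follows the common parameterized-query convention; adapt it to however your database step accepts queries.

```javascript
// Build a parameterized upsert for a hypothetical checkpoint table:
//   recursion_checkpoints(pipeline_id UNIQUE, cursor_value, total_processed, updated_at)
function buildCheckpointUpsert(pipelineId, state, now = new Date()) {
  return {
    text:
      "INSERT INTO recursion_checkpoints " +
      "(pipeline_id, cursor_value, total_processed, updated_at) " +
      "VALUES ($1, $2, $3, $4) " +
      "ON CONFLICT (pipeline_id) DO UPDATE SET " +
      "cursor_value = EXCLUDED.cursor_value, " +
      "total_processed = EXCLUDED.total_processed, " +
      "updated_at = EXCLUDED.updated_at",
    values: [pipelineId, state.cursor, state.total_processed, now.toISOString()],
  };
}

// Turn the last stored row (or no row at all) into the state for the first call.
function resumeState(row) {
  if (!row) return { cursor: "", total_processed: 0 }; // fresh start
  return {
    cursor: row.cursor_value,
    total_processed: Number(row.total_processed),
  };
}
```

Writing the checkpoint only after a batch succeeds means a crash can at worst repeat one batch, which is why the processing step should be idempotent.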

💰 Self-hosted via Coolify: zero additional infrastructure cost on existing Hetzner server

An n8n recursive workflow that processes 80,000 records in production is business-critical infrastructure. Treating it like a Zapier Zap (no version history, no staging environment, no rollback capability) is how you deploy a broken exit condition to production and wake up to an infinite loop that has consumed 47 hours of server time and sent 80,000 duplicate API calls. Every recursive workflow JSON goes into GitHub. Every change goes through a pull request. Every deployment to production happens from a tagged release. When something breaks, and at enterprise scale something eventually breaks, you roll back to the last working commit in sixty seconds. This is engineering discipline applied to automation infrastructure.

💰 Free for private repositories on standard plans

The Architect’s Blueprint Pack

The three recursive workflow JSON templates we use in production — pre-built, pre-tested, ready to import into your n8n instance. Do not start from a blank canvas when the correct architecture already exists.

Pre-configured recursive workflow JSON for cursor-based API pagination — ready for HubSpot, Stripe, Airtable, and Salesforce. Includes the If node expression, the Execute Workflow node configuration, the state accumulator Code Node, and the PostgreSQL cursor persistence write. Import, point at your API, and run. No blank canvas. No architecture guesswork.

The Agentic Retry Loop pre-built with the validation Code Node, the attempts counter in the state JSON, the error injection into the retry prompt, the three-attempt maximum, and the human escalation Slack notification at threshold. The template that turns a brittle LLM extraction pipeline into a self-correcting production system. Reduces manual intervention by over 85% on first deployment.

Production-ready JavaScript code snippets for the state accumulator pattern, exponential backoff wait calculation, cursor persistence to PostgreSQL, and error logging to Slack. Copy into your n8n Code nodes. Every snippet is commented with exactly what it does, why it is there, and what breaks if you remove it. The code library that prevents the 3 AM crash.

Is Your Workflow Crashing at Scale?

You built the loop. You tested it on 500 records. You deployed it.

Then at 3 AM it stopped at record 11,847 with no error, no alert,

and no way to know how much it completed before the server killed the process.

This is not a logic problem. This is an infrastructure problem.

And it is fixable — if you know exactly where to look.

We audit and rebuild failing recursive workflow stacks for Real Estate operations processing high-volume lead pipelines, B2B Agencies running CRM data migrations and enrichment loops, and Financial Firms processing compliance documents through AI extraction pipelines with HITL governance. We find the break. We fix the architecture. We hand it back running.

- Full recursive workflow audit — every loop mapped, every failure point identified

- Infrastructure diagnosis — server tier, Queue Mode configuration, Redis setup verified

- Exit condition audit — every If node checked for infinite loop vulnerability

- Error handling review — backoff logic, Slack alerting, PostgreSQL cursor persistence

- Rebuilt and tested — running clean on Hetzner AX102 with full handover documentation