AI AUTOMATION AGENCIES 2026: THE 5-POINT EVALUATION FRAMEWORK

THE COMPLETE EVALUATION FRAMEWORK


EXECUTIVE SUMMARY: THE AI AUTOMATION AGENCY PROBLEM

The result: engagements that deliver workflow templates at consulting rates, systems that collapse under concurrent load, infrastructure the buyer does not own, and dependency on the agency’s managed platform, with annual fees that keep escalating.

The shift required: from evaluating agencies by proposal language to evaluating them by technical stack, sovereignty posture, and memory architecture. Each of these requires specific questions and specific answers.

The goal: an engagement that builds systems you own, on infrastructure you control, with documentation that allows your internal team to maintain the system after the agency exits.


1. The 4 Categories of AI Automation Agencies

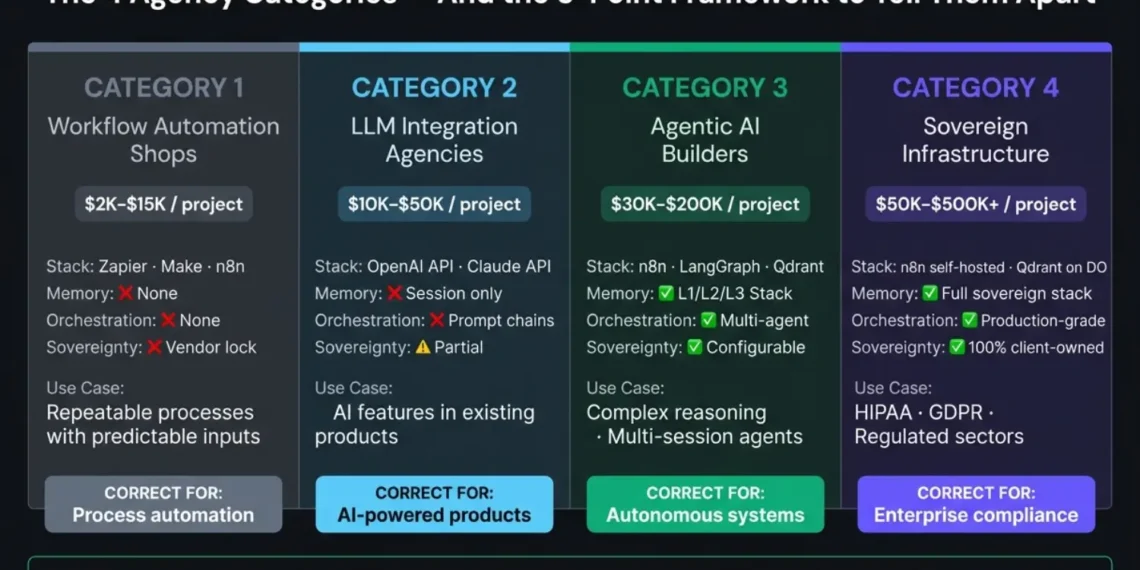

THE AGENCY TAXONOMY: 4 CATEGORIES OF AUTOMATION

2. The 5-Point Evaluation Framework

THE 5-POINT AGENCY EVALUATION FRAMEWORK

Run every agency through all five points. A failure on any single point is not a negotiation; it is a signal to move to the next candidate.
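The gating rule above can be sketched in a few lines of Python. This is a minimal illustration that assumes each point has already been scored pass/fail during your interviews. The `AgencyAssessment` class, its field names, and `shortlist` are hypothetical and are not part of any tool.

```python
from dataclasses import dataclass, fields


@dataclass
class AgencyAssessment:
    """Pass/fail result for each of the five evaluation points (hypothetical model)."""
    name: str
    technical_stack_depth: bool
    sovereignty_posture: bool
    pricing_model_transparency: bool
    proof_of_production: bool
    memory_and_state_architecture: bool

    def failed_points(self) -> list[str]:
        # Every boolean field that did not pass; the name field is skipped.
        return [
            f.name
            for f in fields(self)
            if f.name != "name" and not getattr(self, f.name)
        ]


def shortlist(candidates: list[AgencyAssessment]) -> list[str]:
    """Keep only agencies that pass all five points; one failure disqualifies."""
    return [c.name for c in candidates if not c.failed_points()]


if __name__ == "__main__":
    agencies = [
        AgencyAssessment("Agency A", True, True, True, True, True),
        AgencyAssessment("Agency B", True, False, True, True, True),
    ]
    for a in agencies:
        print(a.name, a.failed_points() or "passes all five points")
    print("Shortlist:", shortlist(agencies))
```

The sketch returns the list of failed points instead of a weighted score. That mirrors the rule that no single failure can be offset by strength elsewhere.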

Technical Stack Depth

The red flag answer describes tools, not architecture.

Sovereignty Posture

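If the agency hosts the system on its own managed platform and you cannot take the infrastructure with you, this is dependency with a dashboard on top.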

Pricing Model Transparency

• Per-seat licensing for the tools they deploy.

Proof of Production

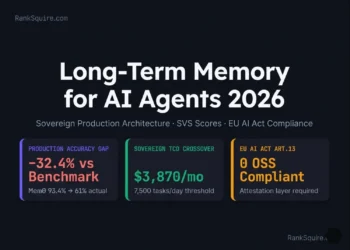

Memory and State Architecture

If an agency cannot explain how state and memory persist across sessions, it is building stateless tools, not intelligent agents.

3. The 4 Red Flags That Predict Failed Engagements

4 RED FLAGS IN AI AUTOMATION ENGAGEMENTS

These four signals appear in the majority of AI automation engagements that end in scope creep, cost overruns, or systems that require constant manual intervention to function.

If an agency cannot provide a reference system that has been running for 90+ days at production scale, monitoring dashboards showing real load, and at least one documented failure with its resolution, then its portfolio is a collection of demos, not deployed systems.

Calculate: if their per-seat fees at your current team size exceed $300/month in total, compare this against the cost of n8n self-hosted on DigitalOcean ($96/month fixed, zero per-seat fees, complete ownership). See the n8n vs Zapier Enterprise Cost Analysis: ranksquire.com/2026/02/13/n8n-vs-zapier-enterprise-cost-analysis/
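The comparison is simple arithmetic: total per-seat spend is the per-seat fee multiplied by team size, set against a fixed monthly infrastructure cost. Below is a minimal sketch using the $300/month threshold and the $96/month self-hosted figure from the text. The function name and the example seat price are hypothetical.

```python
SELF_HOSTED_N8N_MONTHLY = 96.0    # fixed DigitalOcean figure quoted above
PER_SEAT_ALERT_THRESHOLD = 300.0  # monthly per-seat total that triggers the comparison


def compare_licensing(per_seat_fee: float, seats: int) -> dict:
    """Compare monthly per-seat licensing against a fixed self-hosted cost."""
    per_seat_total = per_seat_fee * seats
    return {
        "per_seat_monthly": per_seat_total,
        "self_hosted_monthly": SELF_HOSTED_N8N_MONTHLY,
        "exceeds_threshold": per_seat_total > PER_SEAT_ALERT_THRESHOLD,
        # Annual licensing spend minus annual self-hosted infrastructure spend.
        "annual_difference": (per_seat_total - SELF_HOSTED_N8N_MONTHLY) * 12,
    }
```

For example, a hypothetical $25/seat across 20 seats gives $500/month, which exceeds the threshold and implies a $4,848/year difference. The sketch ignores the engineering time needed to run a self-hosted instance, so treat that difference as an upper bound on savings.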

- State persistence between sessions

- Contradicting inputs from different sources

- Error recovery without human intervention

- Memory growth management over thousands of sessions
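“Error recovery without human intervention” usually starts with retrying transient failures before escalating. Below is a minimal sketch of retry with exponential backoff. It assumes the failing step raises an exception and is safe to repeat (idempotent). `TransientError`, `run_with_retry`, and the delay values are illustrative and are not taken from any specific framework.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, rate limits)."""


def run_with_retry(
    step: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry a step on TransientError, doubling the wait after each failure."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of retries: surface the failure for escalation
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
    raise RuntimeError("not reached: the loop always returns or re-raises")
```

Only non-transient errors or exhausted retries reach a human. A production system would typically also add jitter and log each attempt.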

4. The Questions to Ask Before Signing

DUE DILIGENCE: THE 30-MINUTE COMPETENCE TEST

5. Recommended Tools — What Real Agencies Use

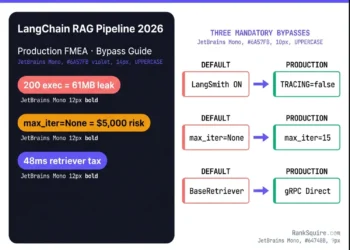

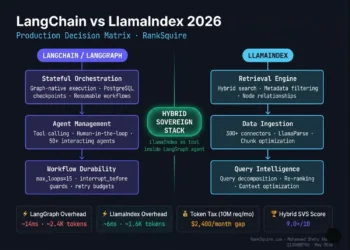

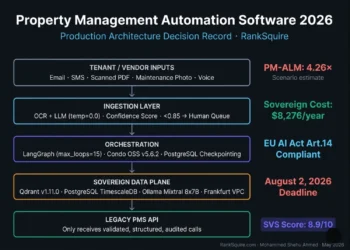

2026 PRODUCTION TOOL STACK STANDARDS

The tool stack is the fastest technical signal. Real agentic AI agencies in 2026 have converged on a specific set of tools because those tools have been tested at production scale and support sovereignty.

GPT-4o (OpenAI): Strong for multimodal tasks. Higher cost at scale than Claude.

Self-hosted (Ollama, vLLM): For sovereign deployments. Llama 3, Mistral, and Mixtral are the 2026 candidates.
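In a sovereign deployment, the model sits behind an HTTP endpoint on infrastructure you control. Below is a minimal sketch that uses only the Python standard library to call Ollama's local `/api/generate` endpoint. It assumes Ollama is running on its default port (11434) and that a model tagged `llama3` has already been pulled; adjust the host and model name for your setup. vLLM instead exposes an OpenAI-compatible server, so its request shape differs.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # assumed default local endpoint


def build_generate_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request for a self-hosted Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3", timeout: float = 60.0) -> str:
    """Send the request and return the generated text from the 'response' field."""
    req = build_generate_request(prompt, model)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

Calling `generate("Summarize the sovereignty clause in one sentence.")` against a running server returns the model's text, and the prompt never leaves your host.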

AWS / GCP / Azure: For enterprises requiring HIPAA, FedRAMP, or SOC 2 Type II certifications.

• Make.com + Claude = workflow automation (Category 1–2)

• Retool + GPT-4o = internal tool builder (not agentic AI)

• HubSpot AI + Zapier = CRM automation (not AI agents)

None of these are wrong choices for the right problems. They are wrong choices presented as agentic AI. The distinction is the orchestration, memory, and sovereignty architecture — not the LLM brand.

6. Build vs Hire: The Decision Threshold

HIRE VS. BUILD: THE DECISION FRAMEWORK

Hire an agency when:

- The automation requires multi-agent orchestration with more than 3 concurrent agent types

- The system requires persistent memory and learning across thousands of sessions

- Your internal team has no experience with n8n, LangGraph, or vector database implementation

- The timeline requires production delivery in under 90 days for a system of significant complexity

- Compliance requirements (HIPAA, GDPR, SOC 2) need to be built into the architecture from day one

Build internally when:

- The automation is fewer than 10 workflow steps with predictable inputs and outputs

- Your engineering team has Python and API experience and 2–4 weeks of dedicated capacity

- The system does not require agent memory, reasoning loops, or adaptive behavior

- Budget for the agency engagement would exceed the internal build cost by more than 3×
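The two lists can be combined into a rough decision function. This is a sketch under stated assumptions. The precedence order (the simple build path first, then the 3× budget rule, then the hire triggers) is an assumption of this sketch, not a published scoring model. “Significant complexity” is approximated as 10+ workflow steps. The `Project` fields are hypothetical names for the criteria above.

```python
from dataclasses import dataclass


@dataclass
class Project:
    """Hypothetical inputs mirroring the hire/build criteria above."""
    workflow_steps: int
    concurrent_agent_types: int
    needs_persistent_memory: bool   # memory across thousands of sessions
    needs_agent_behavior: bool      # memory, reasoning loops, or adaptive behavior
    compliance_from_day_one: bool   # HIPAA, GDPR, SOC 2 in the architecture
    team_knows_agent_stack: bool    # n8n, LangGraph, vector databases
    team_has_python_api: bool
    dedicated_weeks: float
    deadline_days: int
    agency_cost: float
    internal_cost: float


def hire_or_build(p: Project) -> str:
    # Build path: small, predictable, no agent behavior, and a team with capacity.
    simple = p.workflow_steps < 10 and not p.needs_agent_behavior
    team_ready = p.team_has_python_api and p.dedicated_weeks >= 2
    if simple and team_ready:
        return "build"
    # Budget rule: an agency quote above 3x the internal build cost favors building.
    if p.agency_cost > 3 * p.internal_cost:
        return "build"
    # Hire triggers; 10+ steps stands in for "significant complexity" (assumption).
    hire_triggers = [
        p.concurrent_agent_types > 3,
        p.needs_persistent_memory,
        not p.team_knows_agent_stack,
        p.deadline_days < 90 and p.workflow_steps >= 10,
        p.compliance_from_day_one,
    ]
    return "hire" if any(hire_triggers) else "build"
```

Writing the criteria down this way forces a team to state which condition actually drives its decision, rather than arguing from the proposal.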

The Cost Calculation:

| Variable | Estimate |
|---|---|
| Agency Engagement (Category 3) | $50K–$150K |
| Internal Engineering Time | 3–6 months × 1–2 engineers |
| Infrastructure (Sovereign Stack) | $96–$300/month |
| LLM API (Production Load) | $200–$2,000/month |
| Total Year 1 Internal | $150K–$400K (Fully Loaded) |
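The Year 1 internal figure combines one-off engineering time with twelve months of running costs. Below is a minimal sketch of that arithmetic. The fully loaded monthly cost per engineer is a hypothetical input you must supply, so the sketch does not reproduce the table's totals on its own.

```python
def year_one_internal_cost(
    engineers: int,
    build_months: float,
    loaded_monthly_cost_per_engineer: float,  # hypothetical: salary plus overhead
    infra_monthly: float,
    llm_monthly: float,
    months_running: int = 12,
) -> float:
    """Engineering build cost plus infrastructure and LLM API spend for the year."""
    engineering = engineers * build_months * loaded_monthly_cost_per_engineer
    running = (infra_monthly + llm_monthly) * months_running
    return engineering + running
```

At the table's ranges, running costs alone come to about $3.6K ($96 + $200 per month) to $27.6K ($300 + $2,000 per month) per year. Engineering time therefore dominates the Year 1 internal total.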

For most mid-size organizations: hiring a Category 3–4 agency for the initial build and then handing over to an internal team is cheaper than a full internal build for complex agentic AI systems. For simple workflow automation: build internally using n8n on DigitalOcean.

7. How to Structure the Engagement

THE SOVEREIGN ENGAGEMENT STRUCTURE

The engagement structure protects you regardless of which agency you hire. These three clauses belong in every AI automation agency contract.

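Without a sovereignty clause (all infrastructure and configurations are client property, transferred before final payment), the agency retains control of infrastructure components as leverage for future retainer negotiations.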

Approved by client CTO. Payment on document delivery and approval.

Core loops functional and verified by client engineering team.

Production deployment passing 2× expected peak concurrency.

30-day period complete, documentation delivered, team trained.
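The “2× expected peak concurrency” acceptance criterion can be checked with a small load harness. Below is a minimal asyncio sketch that assumes the system is reachable through an async callable. `handle_request` is a hypothetical stand-in; in practice you would call your deployed endpoint. The 1% error budget is an illustrative assumption, not a contract term.

```python
import asyncio
import random
from typing import Awaitable, Callable


async def handle_request(i: int) -> str:
    """Hypothetical stand-in for one call into the deployed system."""
    await asyncio.sleep(random.uniform(0.01, 0.05))
    return f"ok-{i}"


async def concurrency_acceptance(
    call: Callable[[int], Awaitable[str]],
    expected_peak: int,
    max_error_rate: float = 0.01,
) -> bool:
    """Fire 2x expected peak requests at once and check the error rate."""
    if expected_peak < 1:
        raise ValueError("expected_peak must be at least 1")
    n = 2 * expected_peak
    # return_exceptions=True collects failures instead of aborting the whole batch.
    results = await asyncio.gather(*(call(i) for i in range(n)), return_exceptions=True)
    errors = sum(1 for r in results if isinstance(r, BaseException))
    error_rate = errors / n
    print(f"{n} concurrent requests, {errors} errors ({error_rate:.1%})")
    return error_rate <= max_error_rate


if __name__ == "__main__":
    passed = asyncio.run(concurrency_acceptance(handle_request, expected_peak=50))
    print("milestone passed" if passed else "milestone failed")
```

This sketch checks error rate only. A real acceptance test would also track latency percentiles and would run against the production deployment rather than a stub.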

The engagement is complete only when:

- Your internal team can operate the system without agency involvement for 30 consecutive days

- All architecture documentation is delivered in a format your engineers can maintain

- All failure modes encountered during deployment are documented with resolution procedures

- A runbook exists for the 5 most likely system failures at production load

8. Conclusion

SUMMARY: THE 2026 AGENCY GAP

9. FAQ: AI Automation Agencies 2026

What do AI automation agencies actually do?

AI automation agencies in 2026 design, build, and deploy automated systems using AI tools, LLMs, and agent orchestration frameworks. The spectrum is wide: from connecting SaaS tools with Zapier and adding an OpenAI API call, to building full multi-agent systems with persistent vector memory, sovereign self-hosted infrastructure, and adaptive reasoning loops.

The term “AI automation agency” covers all of these categories, which is why the 5-point evaluation framework matters. What a specific agency actually builds is determined by its technical stack, its sovereignty posture, and its ability to describe its memory architecture, not by its proposal deck.

How much do AI automation agencies charge?

AI automation agency pricing in 2026 ranges from $2,000 per project for simple workflow automation to $500,000+ for enterprise agentic AI deployments on sovereign infrastructure. Category 1 workflow shops: $2,000–$15,000. Category 2 LLM integration: $10,000–$50,000. Category 3 agentic AI builders: $30,000–$200,000. Category 4 sovereign infrastructure agencies: $50,000–$500,000+.

The pricing model matters as much as the total cost: project-based milestone pricing protects the buyer. Retainer-first and per-seat licensing models protect the agency.

What is the difference between an AI automation agency and a workflow automation agency?

The distinction is architectural, not cosmetic. A workflow automation agency connects existing tools using trigger-action sequences (Zapier, Make.com, and n8n) without agent orchestration. These systems execute fixed processes with predictable inputs.

An AI automation agency builds systems that reason, decide, remember, and adapt using LLM reasoning loops, multi-agent orchestration, and persistent vector memory. The output of a workflow automation agency is a process. The output of a real AI automation agency is an agent that improves over time.

How do I know if an AI automation agency is real?

Ask one question: “Describe your memory architecture for a system that processes 1,000 sessions per day.” A real agentic AI agency will describe L1 working memory (Redis), L2 semantic vector memory (Qdrant), an L3 episodic log, validation gates, and recursive summarization.

A workflow automation agency will describe storing conversation history in a database or connecting to your CRM. The answer takes under 2 minutes to give. If the agency cannot give it, they are not building what they are selling.
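To make the expected answer concrete, here is a minimal in-process sketch of the three tiers plus a validation gate and recursive summarization. It rests on several assumptions:

- a plain dict stands in for Redis;
- a list ranked by cosine similarity stands in for Qdrant;
- `embed` is a toy hashed bag of words rather than a real embedding model;
- `summarize` just joins and truncates, where a production system would call an LLM.

None of the class or function names come from Redis, Qdrant, or any agency's stack.

```python
import math
import zlib
from collections import defaultdict


def embed(text: str, dims: int = 64) -> list[float]:
    """Toy embedding (hashed bag of words, L2-normalized); stands in for a real model."""
    vec = [0.0] * dims
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % dims] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def summarize(turns: list[str], max_chars: int = 200) -> str:
    """Stand-in for an LLM summarization call: joins older turns and truncates."""
    return ("summary: " + " | ".join(turns))[:max_chars]


class TieredMemory:
    """L1 working memory, L2 semantic memory, L3 episodic log (in-process stand-ins)."""

    def __init__(self, working_limit: int = 6) -> None:
        self.working: dict[str, list[str]] = defaultdict(list)  # L1 (Redis stand-in)
        self.semantic: list[tuple[list[float], str]] = []       # L2 (Qdrant stand-in)
        self.episodic: list[tuple[str, str]] = []               # L3 append-only log
        self.working_limit = working_limit

    def validate(self, fact: str) -> bool:
        """Validation gate: reject empty or duplicate facts before they reach L2."""
        fact = fact.strip()
        return bool(fact) and all(text != fact for _, text in self.semantic)

    def store_fact(self, fact: str) -> bool:
        """Write a fact to L2 only if it passes the validation gate."""
        if not self.validate(fact):
            return False
        self.semantic.append((embed(fact), fact.strip()))
        return True

    def record_turn(self, session: str, turn: str) -> None:
        """Log every turn to L3 and keep the live context in L1."""
        self.episodic.append((session, turn))
        self.working[session].append(turn)
        if len(self.working[session]) > self.working_limit:
            self._compact(session)

    def _compact(self, session: str) -> None:
        """Recursive summarization: fold all but the last two turns into one summary.
        The previous summary is one of the folded turns, so summaries nest."""
        turns = self.working[session]
        summary = summarize(turns[:-2])
        self.store_fact(summary)  # promote to L2 if it passes the gate
        self.working[session] = [summary] + turns[-2:]

    def recall(self, query: str, k: int = 3) -> list[str]:
        """Return the k L2 facts with the highest cosine similarity to the query."""
        q = embed(query)
        ranked = sorted(
            self.semantic,
            key=lambda item: sum(a * b for a, b in zip(q, item[0])),
            reverse=True,
        )
        return [text for _, text in ranked[:k]]
```

The point of the structure is memory growth management. L1 stays bounded per session because older turns are folded into summaries. L3 keeps the full history for audit. Only validated facts reach the searchable L2 tier.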

Should I hire an AI automation agency or build internally?

Hire an agency when the system requires multi-agent orchestration, persistent memory across thousands of sessions, or compliance-grade sovereign infrastructure, and your internal team lacks this specific experience. Build internally when the automation is fewer than 10 workflow steps with predictable inputs, your engineering team has Python and API experience, and 2–4 weeks of dedicated capacity is available.

For complex agentic AI systems, the agency build plus internal handover is typically faster and cheaper in Year 1 than a full internal build, because the agency brings production-tested architecture that would take an internal team 3–6 months to develop from scratch.

What should an AI automation agency contract include?

Every AI automation agency contract should contain three non-negotiable clauses:

- a sovereignty clause (all infrastructure and configurations are client property, transferred before final payment);
- a milestone-based payment structure (tied to verified deliverables, not agency timelines);
- a 90-day handover protocol (system documentation, internal team training, and 30 consecutive days of independent client operation before the engagement is considered complete).

Without these three clauses, the agency has no contractual incentive to produce a system your team can maintain and extend independently.

FROM THE ARCHITECT’S DESK

CLOSING THOUGHT: ARCHITECTS VS. RESELLERS

Ask the memory architecture question. Listen to the answer. The first 30 seconds of the response tell you everything about whether you are talking to an architect or a reseller.

AFFILIATE DISCLOSURE

This post contains affiliate links. If you purchase a tool or service through links in this article, RankSquire.com may earn a commission at no additional cost to you. We only reference tools evaluated in production architectures.